The New Era of Visual Storytelling

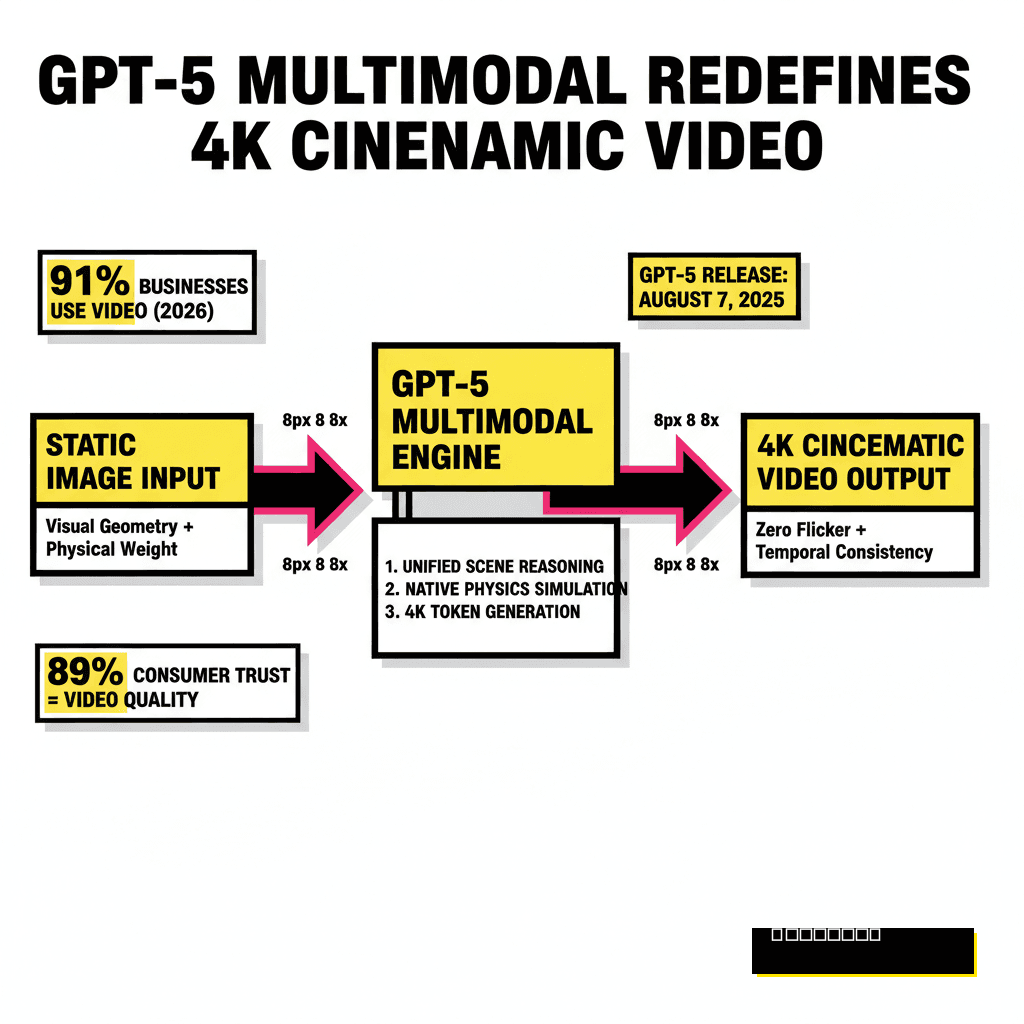

OpenAI released GPT-5 on August 7, 2025, and creators quickly moved past simple text generation to something much more ambitious. We no longer just ask for words; we now use the model to interpret visual geometry and physical weight. Static photos used to be dead ends for high-end production, but the multimodal core of GPT-5 acts as a bridge to cinematic motion.

Instead of flat animations, we now see true spatial reasoning. Professional creators are seeing a massive shift in how they build assets. According to the 2026 Wyzowl Video Marketing Report, 91% of businesses now use video as a primary marketing tool. Quality expectations have scaled alongside this adoption. Consumers are becoming more discerning, with 89% stating that video quality directly impacts their trust in a brand. If your AI-generated video looks like a flickering mess, you're losing authority.

Recent developments in multimodal routing mean GPT-5 can handle image analysis and video physics in a single interaction. You're not just tacking on motion; you're asking the AI to understand the scene first. This approach yields results that can rival traditional cinematography. If you are looking for alternatives to the standard OpenAI pipeline, our guide on the 5 Best Sora Alternatives for Marketing Pros offers a comprehensive look at the 2026 competitive landscape.

GPT-5 Architecture vs. Legacy Video AI

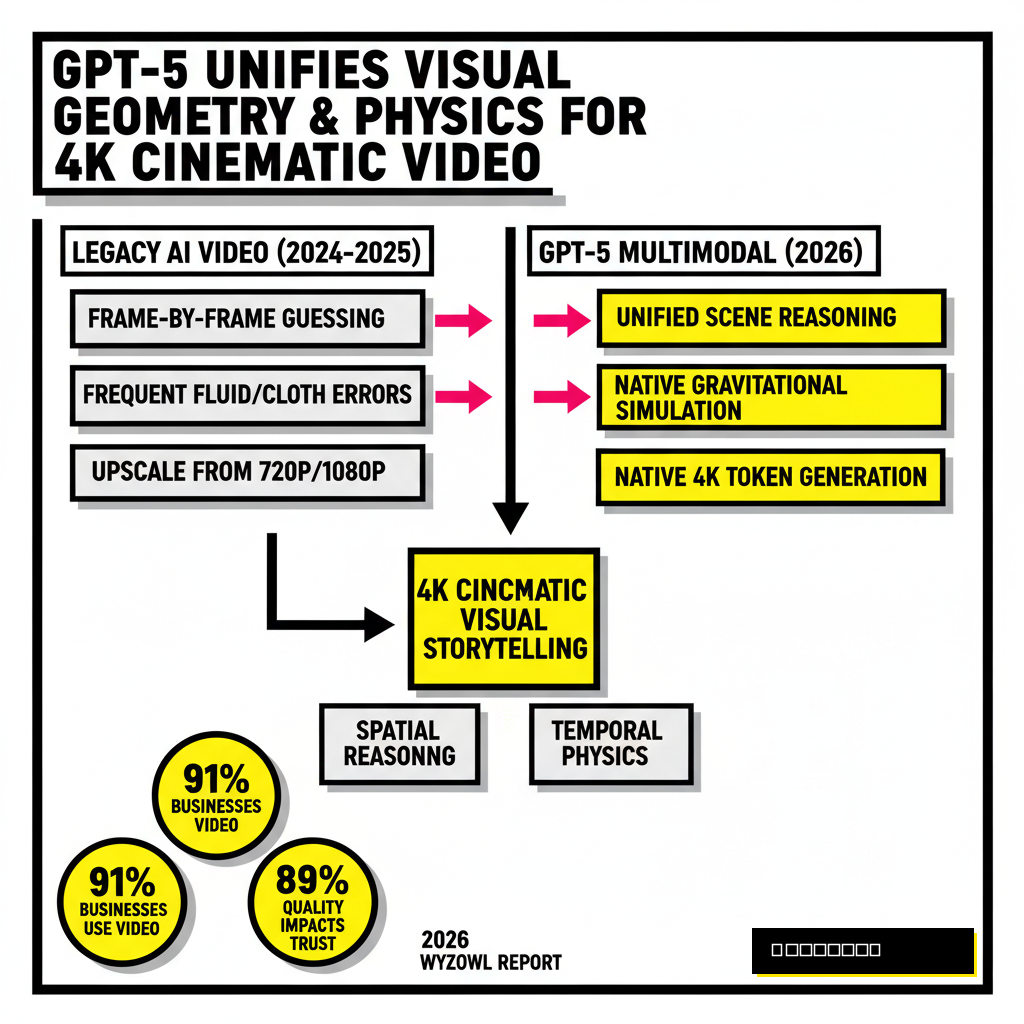

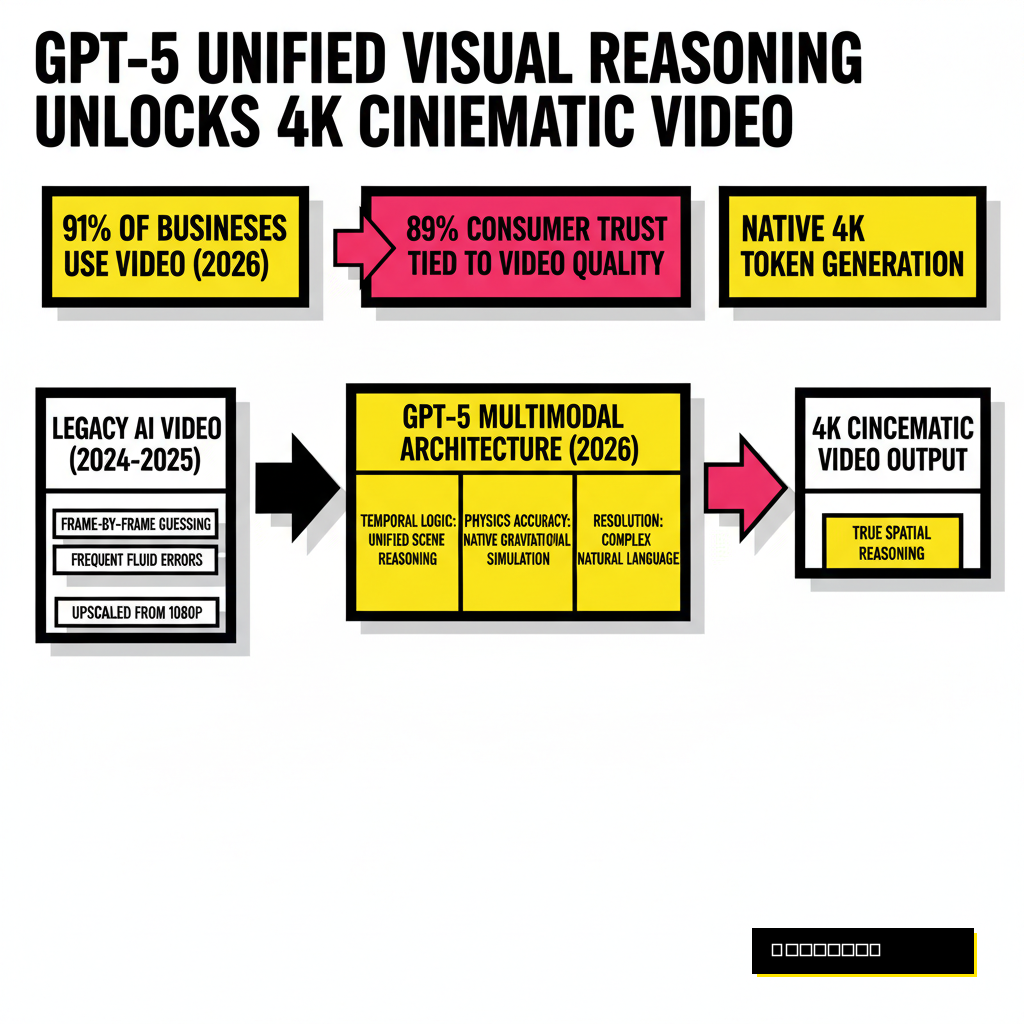

Older models struggled with temporal consistency. Frames would drift, textures would warp, and the internal logic of a scene often collapsed. GPT-5 solves this by unifying its reasoning engine with visual tokens. It treats every frame as a logical extension of the previous one, governed by the physics parameters you define in your prompt.

| Feature | Legacy AI Video (2024-2025) | GPT-5 Multimodal (2026) |
|---|---|---|
| Temporal Logic | Frame-by-frame guessing | Unified scene reasoning |
| Physics Accuracy | Frequent fluid/cloth errors | Native gravitational simulation |
| Resolution Control | Upscaled from 720p/1080p | Native 4K token generation |
| Prompt Control | Keyword soup | Complex natural language directives |

Most professionals now realize that prompt engineering is more about physics than aesthetics. Using the right terminology for camera movement ensures the AI doesn't just 'animate' the image, but actually 'films' it. If you describe the lens type, for example a fast prime at a wide aperture, the AI has what it needs to simulate the matching depth of field. This is how we move from AI-looking clips to true cinema.

10 Master Prompts for Cinematic Motion

Crafting the perfect prompt requires a mix of technical camera jargon and sensory descriptions. These prompts are designed to be used with the GPT-5 Vision-to-Video API. Copy and adapt these to your specific images to see the difference in 4K output.

The Dolly Zoom

- Analyze the depth of the central subject.

- Execute a reverse dolly movement while zooming in digitally.

- Maintain fixed subject proportions while the background distorts.

- Ensure 4K grain consistency throughout the shift.
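The "fixed subject proportions" directive has a simple geometric reading. In a physical dolly zoom, the width of the scene framed at the subject plane, w = 2 · d · tan(θ/2), stays constant while the camera distance d changes, so the field of view θ has to change to compensate. The sketch below works through that relationship under a pinhole-camera assumption. The function names and the 60-degree starting lens are illustrative, and nothing here describes how GPT-5 computes motion internally.

```python
import math


def framed_width(distance_m: float, fov_deg: float) -> float:
    """Width of the scene visible at the subject plane (pinhole camera model)."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def dolly_zoom_fov(start_distance_m: float, start_fov_deg: float,
                   new_distance_m: float) -> float:
    """Field of view needed at new_distance_m to keep the subject the same size."""
    width = framed_width(start_distance_m, start_fov_deg)
    return math.degrees(2.0 * math.atan(width / (2.0 * new_distance_m)))


if __name__ == "__main__":
    # Reverse dolly: the camera pulls back from 2 m to 4 m while the lens zooms in.
    for distance in (2.0, 3.0, 4.0):
        fov = dolly_zoom_fov(2.0, 60.0, distance)
        print(f"distance={distance:.1f} m  fov={fov:.1f} deg")
```

As the distance grows, the required field of view narrows, which is why the background appears to compress while the subject holds its size. Quoting a start and end distance in the prompt gives the model a concrete version of this constraint.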

The Macro Bloom

- Identify micro-textures on the surface.

- Shift focus from the foreground grain to the background light source.

- Simulate an f/1.4 aperture bokeh expansion.

- Apply 4K luminance tracking to the highlights.
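If you reuse these prompts across many images, it can help to store the directives as data and render them into text on demand. The following is a minimal sketch of that idea. The `MotionPrompt` class, its `render` method, and the output layout are hypothetical conventions, not part of any OpenAI API, and the rendered string would still need to be passed to whatever endpoint you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MotionPrompt:
    """A named camera move expressed as an ordered list of directives."""
    name: str
    directives: tuple[str, ...]

    def render(self, subject: str) -> str:
        """Join the directives into one numbered natural-language prompt."""
        steps = "\n".join(
            f"{i}. {text}" for i, text in enumerate(self.directives, start=1)
        )
        return f"Shot: {self.name}\nSubject: {subject}\n{steps}"


DOLLY_ZOOM = MotionPrompt(
    name="The Dolly Zoom",
    directives=(
        "Analyze the depth of the central subject.",
        "Execute a reverse dolly movement while zooming in digitally.",
        "Maintain fixed subject proportions while the background distorts.",
        "Ensure 4K grain consistency throughout the shift.",
    ),
)

MACRO_BLOOM = MotionPrompt(
    name="The Macro Bloom",
    directives=(
        "Identify micro-textures on the surface.",
        "Shift focus from the foreground grain to the background light source.",
        "Simulate an f/1.4 aperture bokeh expansion.",
        "Apply 4K luminance tracking to the highlights.",
    ),
)

if __name__ == "__main__":
    print(DOLLY_ZOOM.render("a lighthouse keeper at dusk"))
```

Keeping the directives in a tuple preserves their order, which matters because each step builds on the scene analysis in the first one.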

Beyond these, you should explore prompts like 'The Anamorphic Flare Shift' and 'The Atmospheric Particle Swirl'. These focus on environmental details that sell the realism. Another powerful option is 'The Time-Remapped Sweep', which allows you to start in slow motion and ramp up speed as the camera rotates. Each of these requires the AI to understand the 3D volume of your 2D photo.

For those automating large-scale content pipelines, these prompts can be integrated into broader workflows. Our recent breakdown of Multimodal AI for Support Automation highlights how similar logic is being used to generate dynamic video documentation for global brands. Consistency is the goal across all these applications.

Physics-Engine Alignment and Lighting

Successful video generation depends on how the AI handles light. GPT-5 uses a latent diffusion process that respects the light sources in your original photo. If your photo has a sunset, the AI calculates how shadows should stretch as the camera moves. This isn't just a filter; it is a full reconstruction of the scene's lighting environment.

McKinsey's Global AI Survey 2026 notes that companies using AI for creative production are seeing a 3.2x ROI on content drafting. The high return comes from the speed of iteration. A director can now test ten different lighting setups on a single still image in minutes. Earlier versions of AI video couldn't maintain this level of detail, but the unified routing in GPT-5 ensures that every ray of light follows the laws of physics.

The Workflow from Still to 4K Sequence

Efficiency in 2026 is built on structured workflows. You cannot simply throw a prompt at an image and expect Hollywood results every time. A multi-step process ensures that the AI understands the context before it starts the compute-heavy task of 4K rendering. This saves both time and API credits.

Start with the 'gpt-5-mini' model for your initial motion tests. It is significantly cheaper, costing only $0.25 per million input tokens compared to the flagship's $1.25. Once the motion path is locked in, switch to the flagship model for the final 4K render. This tiered approach is how elite creators manage their production budgets while maintaining high output quality.
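To see what the tiered approach saves, the arithmetic can be sketched with the two input-token prices quoted above. The workload figures (20 test iterations at 50,000 input tokens each) are made-up illustration values, the flagship is keyed here as `gpt-5`, and output-token or rendering charges are left out because the text does not state them.

```python
# Input-token prices from the text, in USD per million tokens.
PRICE_PER_MILLION_INPUT = {"gpt-5-mini": 0.25, "gpt-5": 1.25}


def input_cost_usd(model: str, input_tokens: int) -> float:
    """Input-token cost for one model at the quoted per-million price."""
    return PRICE_PER_MILLION_INPUT[model] * input_tokens / 1_000_000


def tiered_cost_usd(test_runs: int, tokens_per_run: int, final_runs: int = 1) -> float:
    """Motion tests on gpt-5-mini, then the final render(s) on the flagship."""
    tests = input_cost_usd("gpt-5-mini", test_runs * tokens_per_run)
    finals = input_cost_usd("gpt-5", final_runs * tokens_per_run)
    return tests + finals


if __name__ == "__main__":
    runs, tokens = 20, 50_000  # hypothetical workload
    flagship_only = input_cost_usd("gpt-5", (runs + 1) * tokens)
    tiered = tiered_cost_usd(runs, tokens)
    print(f"flagship only: ${flagship_only:.4f}")
    print(f"tiered:        ${tiered:.4f}")
```

Under these assumptions the tiered run costs roughly a quarter of the flagship-only run on input tokens, and the gap widens as the number of test iterations grows.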

Navigating the 2026 Regulatory Landscape

Every creator must keep the legal environment in mind. Most provisions of the EU AI Act, including its transparency obligations, become applicable on August 2, 2026. Article 50 of this regulation is particularly important for anyone generating cinematic video. It requires that AI-generated or substantially manipulated content be clearly labeled. This covers both machine-readable marking of the output and human-readable disclosure to the audience.
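One lightweight way to keep both kinds of label together during production is a small sidecar record stored next to each rendered file. The sketch below is only an illustration of that bookkeeping. The field names and label wording are hypothetical, the record is not a C2PA manifest, and it is not signed, so it should not be read as satisfying any specific legal or platform requirement on its own.

```python
import json
from datetime import datetime, timezone


def build_disclosure(video_filename: str, generator: str) -> dict:
    """Assemble a disclosure record with a machine-readable flag and a viewer-facing label."""
    return {
        "file": video_filename,
        "ai_generated": True,  # machine-readable flag (hypothetical field name)
        "generator": generator,
        "label": "This video was generated with AI.",  # human-readable disclosure
        "created_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
    }


def to_sidecar_json(disclosure: dict) -> str:
    """Serialize the record so it can be saved alongside the video file."""
    return json.dumps(disclosure, indent=2, sort_keys=True)


if __name__ == "__main__":
    record = build_disclosure("lighthouse_dolly_zoom.mp4", "GPT-5")
    print(to_sidecar_json(record))
```

The same `label` string can be reused for the on-screen or caption disclosure, so the machine-readable and human-readable versions never drift apart.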

Compliance isn't just about avoiding fines. It is about building a sustainable creative business. Many platforms now look for signed provenance metadata, such as C2PA Content Credentials, embedded in the file. If you are unsure about your current strategy, reviewing Credo AI vs Vanta can help you decide on the right governance framework. Failure to comply can lead to content being suppressed by major social algorithms that now prioritize authenticated media.

Transparency is becoming a competitive advantage. Brands that openly state their use of AI while maintaining high artistic standards are winning over audiences. People value the creativity behind the prompt as much as the technical execution. Using GPT-5 to turn your photos into cinema is a powerful skill, but doing it ethically ensures you'll still be in business when the next model arrives.