The Compliance Cliff of August 2026

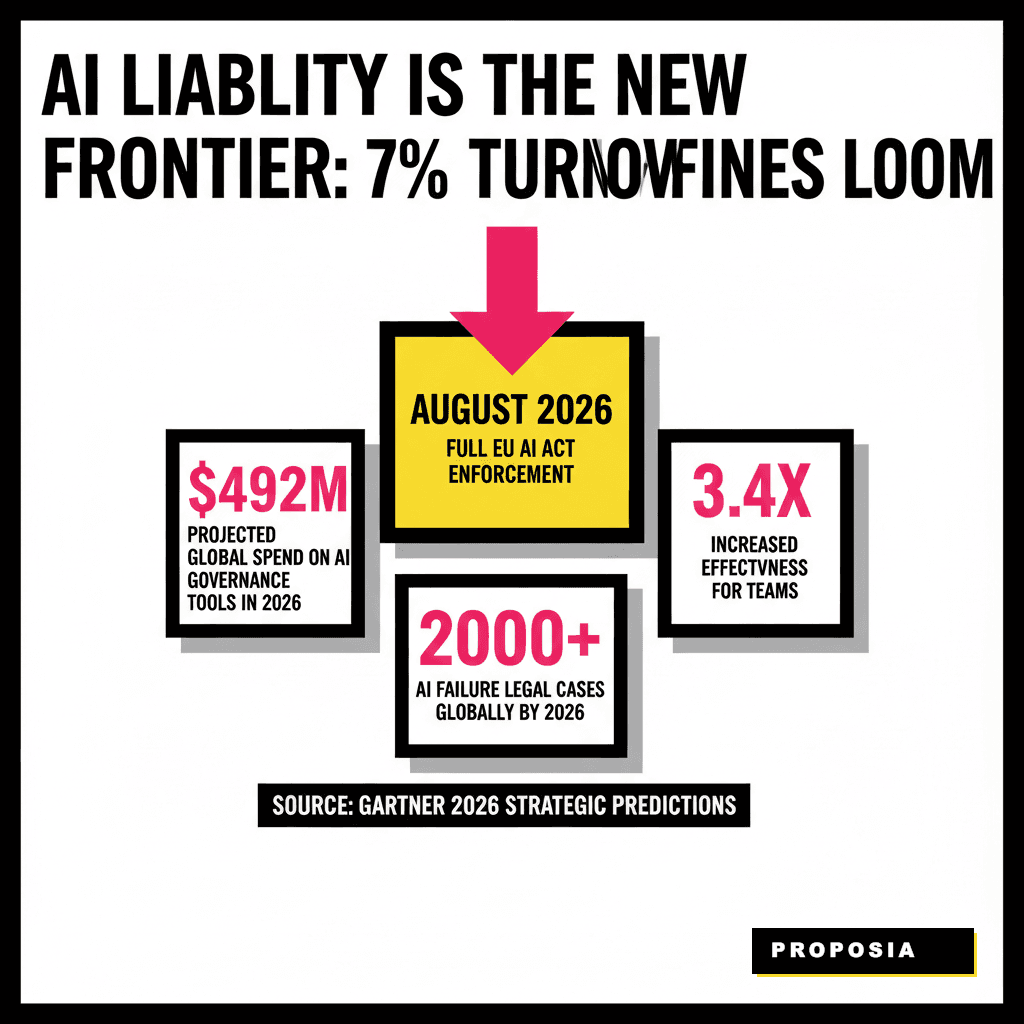

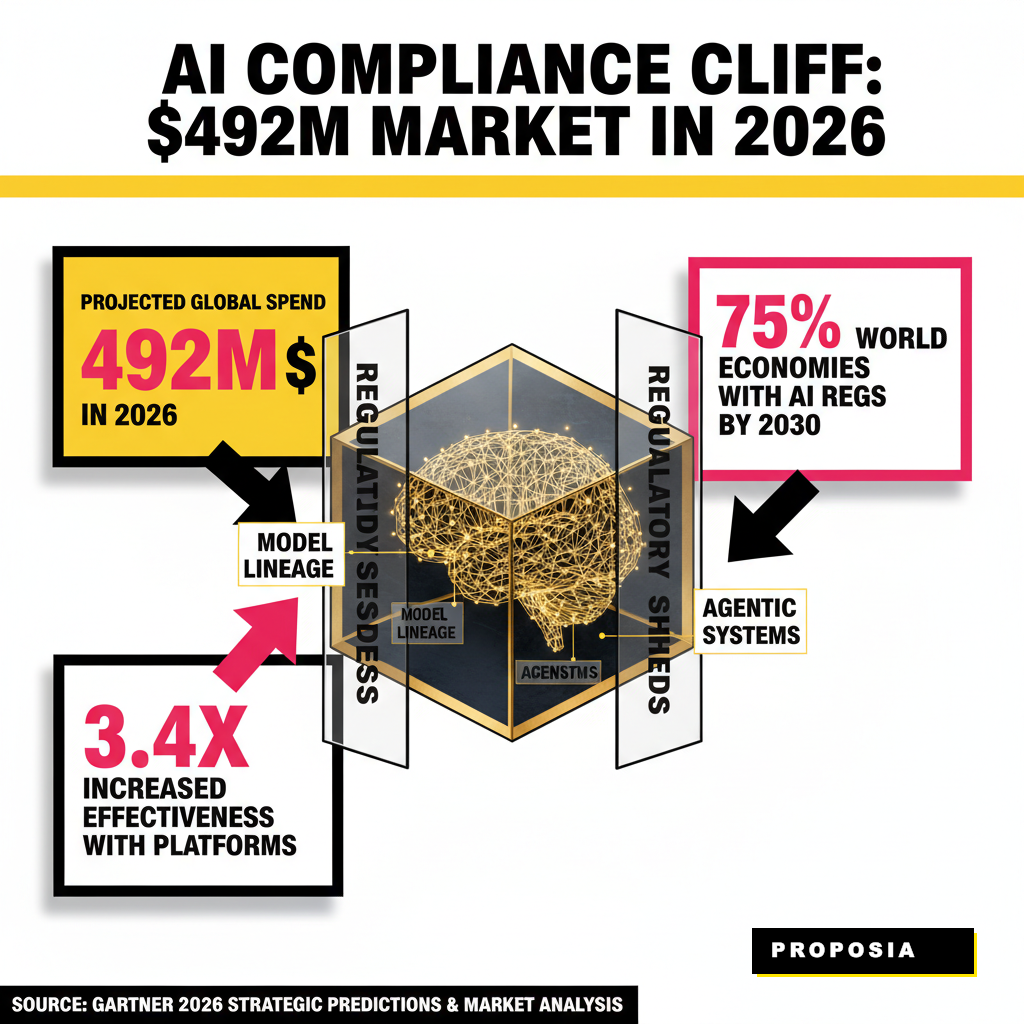

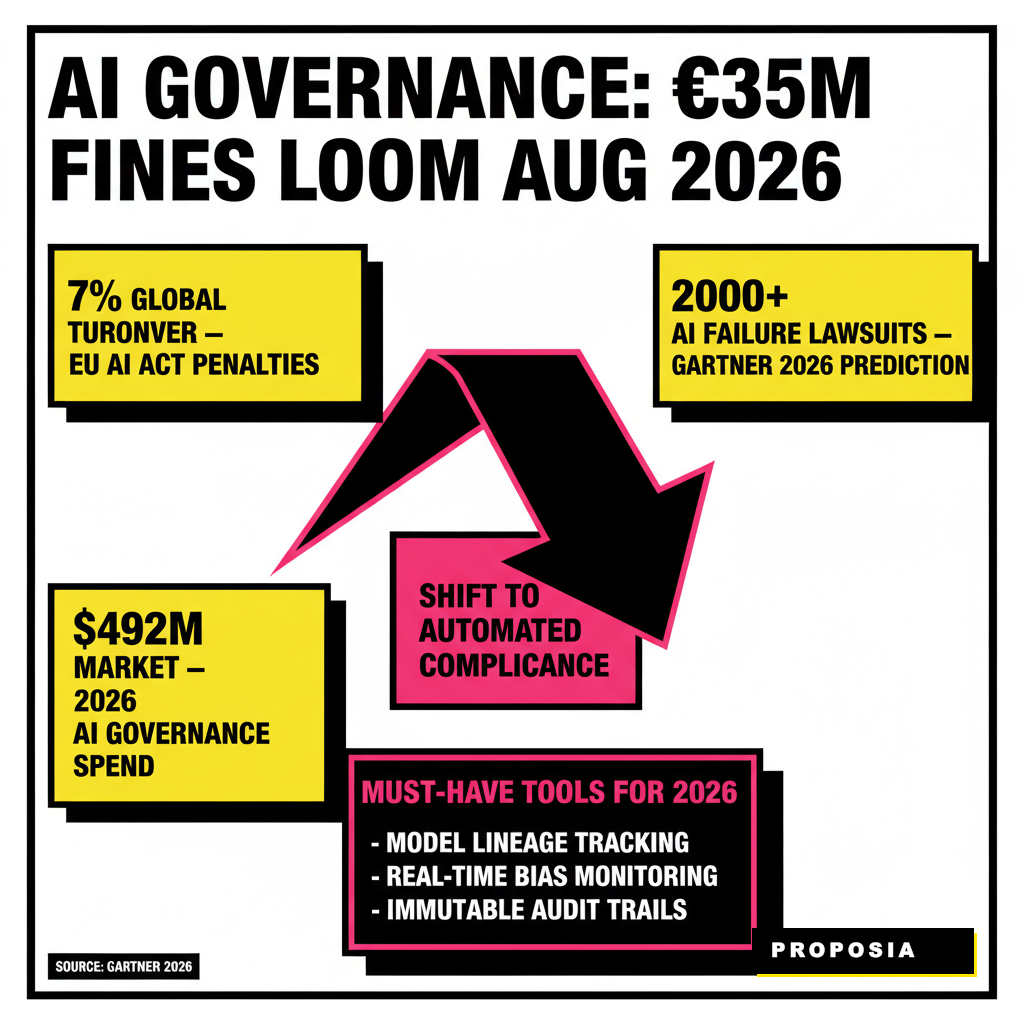

August 2, 2026, marks a pivotal shift for every organization whose AI systems reach the EU market, wherever that organization is based. On this date most of the EU AI Act's remaining obligations begin to apply, including the requirements for the high-risk systems listed in Annex III. The regulation carries penalties of up to €35 million or 7% of global annual turnover, whichever is higher, for the most serious violations. Boards of directors no longer view artificial intelligence as an experimental playground. Instead, they see a complex liability that requires rigorous oversight, especially as Gartner predicts that by the end of 2026, legal claims related to AI failures will exceed 2,000 cases worldwide.

Managing this risk manually is no longer practical at scale. Traditional governance, risk, and compliance (GRC) frameworks often fail to account for the dynamic, non-deterministic nature of large language models and agentic systems. Senior leaders now require specialized infrastructure to track model lineage, monitor for bias in real time, and generate immutable audit trails. Effective governance is shifting from a periodic checkbox exercise to a continuous, automated function embedded directly into the development lifecycle.

These projections come from Gartner's 2026 Strategic Predictions and related market analysis. Organizations that fail to implement these tools risk more than just fines. They face the prospect of "pilot purgatory," where promising initiatives are blocked by legal teams because the underlying risks remain unquantified. As discussed in our previous analysis of navigating the 2026 AI audit, early preparation can save millions in operational delays.

Comprehensive Platforms for Enterprise Oversight

Choosing the right platform depends heavily on your existing technical stack and the maturity of your AI portfolio. Enterprises with hundreds of models across different business units typically gravitate toward centralized hubs that offer broad visibility. These platforms act as a single source of truth, linking technical performance metrics with legal policy requirements. Modern tools now include "policy packs" that automatically map your model's behavior to specific articles in the EU AI Act or the NIST AI Risk Management Framework.
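To make the idea of a policy pack concrete, here is a minimal Python sketch, assuming a policy pack is simply a list of checks, each tagged with the EU AI Act article it supports. The `Control` class, the `evaluate` function, the fact names, and the 0.90 accuracy threshold are all hypothetical; the Act itself sets no numeric accuracy target, and commercial platforms define their own mappings.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Control:
    """One check in a hypothetical policy pack, tagged with the article it supports."""
    article: str
    description: str
    check: Callable[[Dict], bool]


# Hypothetical policy pack: fact names and the accuracy threshold are illustrative.
EU_AI_ACT_PACK: List[Control] = [
    Control("Art. 10", "Training data lineage is recorded",
            lambda f: f.get("data_lineage_recorded", False)),
    Control("Art. 12", "Inference events are logged",
            lambda f: f.get("logging_enabled", False)),
    Control("Art. 14", "A human override path exists",
            lambda f: f.get("human_override", False)),
    Control("Art. 15", "Held-out accuracy is at least 0.90",
            lambda f: f.get("accuracy", 0.0) >= 0.90),
]


def evaluate(pack: List[Control], facts: Dict) -> List[str]:
    """Return the articles whose controls fail for the given model facts."""
    return [c.article for c in pack if not c.check(facts)]


model_facts = {"data_lineage_recorded": True, "logging_enabled": True,
               "human_override": False, "accuracy": 0.87}
print(evaluate(EU_AI_ACT_PACK, model_facts))  # ['Art. 14', 'Art. 15']
```

Keeping the mapping as data rather than logic means legal reviewers can read the list of controls without reading the evaluation code.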

Credo AI has emerged as a frontrunner for organizations prioritizing regulatory alignment. Their platform provides end-to-end lifecycle governance, allowing cross-functional teams to collaborate on risk assessments and impact reports. Meanwhile, IBM watsonx.governance targets the large-scale enterprise, offering deep integration with existing data pipelines and automated documentation features. Holistic AI focuses specifically on the European landscape, providing specialized audits for high-risk use cases like recruitment and financial services. OneTrust remains the favorite for firms where AI governance is an extension of their broader privacy and data protection strategy.

| Platform | Primary Strength | Best For |
|---|---|---|
| Credo AI | Policy intelligence and regulatory mapping | Multi-jurisdictional compliance |
| IBM watsonx.governance | Lifecycle automation and model inventory | Large-scale enterprise operations |
| Holistic AI | Specialized risk auditing and red teaming | High-risk EU AI Act classification |
| OneTrust | Privacy and GRC integration | Data-centric governance teams |

Lauren Kornutick, a Director Analyst at Gartner, suggests that specialized AI governance platforms provide oversight that traditional GRC tools cannot match. She notes that these technologies can reduce regulatory expenses by roughly 20%, allowing those funds to be redirected toward innovation. Without these tools, legal teams often become bottlenecks, slowing down the deployment of competitive advantages like Sovereign AI infrastructure.

Specialized Tools for Model Observability and Audit Trails

Monitoring the runtime behavior of an AI system is just as critical as the initial policy setup. LLMs are prone to drift and hallucinations that can surface long after the initial audit is complete. Fiddler AI excels in this area by providing real-time observability and bias detection. Its system allows engineers to explain model decisions at a granular level, satisfying the growing demand for explainable AI (XAI) in regulated sectors. If a model starts favoring one demographic over another in a loan application process, Fiddler can trigger an immediate alert so the team can intervene, for example by pausing the system.
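A common way to quantify the kind of loan-approval skew described above is the disparate impact ratio: the lowest group's approval rate divided by the highest. The sketch below flags a window of decisions when that ratio drops below 0.8, the "four-fifths" heuristic from US employment-selection guidance. This is an assumed, generic metric chosen for illustration, not Fiddler's implementation or a threshold mandated by the EU AI Act; the group labels are placeholders.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """decisions: (group, approved) pairs. Returns the approval rate per group."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_alert(decisions, threshold: float = 0.8) -> Tuple[float, bool]:
    """Alert when the lowest group rate falls below threshold * the highest rate."""
    rates = approval_rates(decisions)
    lowest, highest = min(rates.values()), max(rates.values())
    ratio = lowest / highest if highest > 0 else 1.0
    return ratio, ratio < threshold


# Hypothetical monitoring window: group A approved 60/100, group B approved 40/100.
window = ([("A", True)] * 60 + [("A", False)] * 40
          + [("B", True)] * 40 + [("B", False)] * 60)
ratio, alert = disparate_impact_alert(window)
print(round(ratio, 3), alert)  # 0.667 True
```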

Transparency requires more than just performance charts. Monitaur offers a unique focus on the "policy-to-proof" pipeline, automating the creation of detailed audit trails. This is particularly valuable for industries like insurance and healthcare, where every decision must be defensible to external regulators. By capturing model metadata, governance decisions, and testing results in an immutable format, Monitaur ensures that your organization is always in a state of audit readiness.
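One standard way to make an audit trail tamper-evident is a hash chain, in which each entry stores the SHA-256 hash of its content together with the previous entry's hash. The sketch below is a generic illustration of that technique, not Monitaur's design; the `AuditTrail` class, the model name, and the record fields are hypothetical. Hash chaining makes edits detectable rather than impossible, so production systems usually pair it with write-once storage.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    @staticmethod
    def _digest(record: Dict, prev_hash: str) -> str:
        payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: Dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = self._digest(record, prev_hash)
        self.entries.append({"record": record, "prev": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if self._digest(entry["record"], prev_hash) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append({"model": "credit-v3", "event": "bias_test", "result": "pass"})
trail.append({"model": "credit-v3", "event": "approved", "by": "risk-committee"})
print(trail.verify())  # True
trail.entries[0]["record"]["result"] = "fail"  # simulate after-the-fact tampering
print(trail.verify())  # False
```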

AuditBoard has also expanded its capabilities to bridge the gap between internal audit teams and machine learning engineers. Their platform connects risk silos, ensuring that the CTO and the Chief Compliance Officer are looking at the same data points. This collaborative environment is essential for managing the growing complexity of agentic systems, where AI agents might perform multi-step tasks across different enterprise systems without direct human supervision. Ensuring these agents operate within defined guardrails is the new frontier of 2026 compliance.
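Runtime guardrails for agents often reduce to a policy check before each tool call. The sketch below assumes a hypothetical `Guardrail` with an allowlist of tools, a set of tools that always need human approval, and a monetary limit; the agent and tool names are invented for illustration and do not reflect AuditBoard's product.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Guardrail:
    """Hypothetical runtime policy for one agent."""
    allowed_tools: Set[str]
    approval_required: Set[str] = field(default_factory=set)
    max_amount: float = 0.0


def check_action(rail: Guardrail, tool: str, amount: float = 0.0) -> str:
    """Return 'allow', 'escalate' (a human must approve), or 'block'."""
    if tool not in rail.allowed_tools:
        return "block"  # fail closed: unknown tools are never executed
    if tool in rail.approval_required or amount > rail.max_amount:
        return "escalate"
    return "allow"


procurement_agent = Guardrail(
    allowed_tools={"search_catalog", "create_po", "send_email"},
    approval_required={"send_email"},
    max_amount=5_000.0,
)
print(check_action(procurement_agent, "search_catalog"))       # allow
print(check_action(procurement_agent, "create_po", 12_000.0))  # escalate
print(check_action(procurement_agent, "delete_vendor"))        # block
```

Defaulting to "block" for any tool not on the allowlist keeps the policy fail-closed, so a new capability must be approved before an agent can use it.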

Automating the Evidence Collection Workflow

Auditing is traditionally a labor-intensive process involving endless spreadsheets and manual document verification. DataSnipper and Trullion are changing this dynamic by applying AI to the audit process itself. DataSnipper integrates directly into Excel, using intelligent automation to match evidence and verify documents at scale. This allows external and internal auditors to focus on high-level analysis rather than repetitive data entry. For financial departments, this shift means that testing the full population of transactions is finally feasible, replacing the old method of sampling small, potentially unrepresentative batches.
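Full-population testing is easier to picture in code: instead of sampling, every ledger line is matched against supporting evidence and only the exceptions are surfaced for review. This is a generic reconciliation sketch, not how DataSnipper works internally (it operates inside Excel); the field names, invoice IDs, and exact-match tolerance are assumptions.

```python
from decimal import Decimal
from typing import Dict, List, Tuple


def reconcile(ledger: List[Dict], evidence: List[Dict],
              tolerance: Decimal = Decimal("0.00")) -> List[Tuple[str, str]]:
    """Match every ledger line to an evidence document by invoice ID and amount."""
    docs = {d["invoice_id"]: d for d in evidence}
    exceptions = []
    for line in ledger:
        doc = docs.get(line["invoice_id"])
        if doc is None:
            exceptions.append((line["invoice_id"], "no supporting document"))
        elif abs(doc["amount"] - line["amount"]) > tolerance:
            exceptions.append((line["invoice_id"], "amount mismatch"))
    return exceptions


ledger = [
    {"invoice_id": "INV-001", "amount": Decimal("1200.00")},
    {"invoice_id": "INV-002", "amount": Decimal("450.50")},
    {"invoice_id": "INV-003", "amount": Decimal("980.00")},
]
evidence = [
    {"invoice_id": "INV-001", "amount": Decimal("1200.00")},
    {"invoice_id": "INV-002", "amount": Decimal("405.50")},
]
print(reconcile(ledger, evidence))
# [('INV-002', 'amount mismatch'), ('INV-003', 'no supporting document')]
```

Using `Decimal` rather than floats avoids rounding artifacts when comparing currency amounts.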

Trullion focuses on the end-to-end accounting workflow, automating the verification of lease agreements and financial contracts. By using natural language processing to extract key terms from complex legal documents, Trullion reduces the risk of human error during the compliance review. These tools are no longer just about efficiency. They provide the level of accuracy needed to meet stringent transparency standards, including those set out in the EU AI Act.

Microsoft Purview rounds out the list for organizations heavily invested in the Azure ecosystem. Purview embeds governance directly into the data flow, providing sensitive information protection for AI prompts and responses. This is a vital tool for preventing intellectual property leakage, a major concern as more employees use tools like Copilot for daily tasks. Purview also forms part of Microsoft's "Copilot Control System," which gives administrators controls for managing AI assistant behavior across the Microsoft 365 suite and helps keep every interaction within the company's ethical guidelines.
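Purview's sensitive information types are configured through Microsoft's own tooling, but the underlying idea of scanning a prompt before it reaches an AI assistant can be sketched in a few lines. The example below is a simplified, hypothetical stand-in that redacts email addresses and card-like numbers, using the Luhn checksum to avoid masking arbitrary digit strings; the regular expressions are illustrative and would miss many real formats.

```python
import re

# Illustrative patterns only; real DLP engines use many more formats and context rules.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators


def luhn_valid(candidate: str) -> bool:
    """Luhn checksum used by payment card numbers; cuts false positives."""
    digits = [int(ch) for ch in candidate if ch.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact_prompt(prompt: str) -> str:
    """Mask emails and Luhn-valid card numbers before the prompt is sent on."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return CARD.sub(lambda m: "[CARD]" if luhn_valid(m.group()) else m.group(), prompt)


text = "Summarize the dispute for jane.doe@example.com, card 4111 1111 1111 1111, ref 1234567890123"
print(redact_prompt(text))
# Summarize the dispute for [EMAIL], card [CARD], ref 1234567890123
```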

Bridging the Gap Between Governance and Performance

There is a common misconception that governance slows down innovation. In reality, a robust governance framework provides the psychological safety required for engineers to experiment with more powerful models. When the boundaries are clear and the monitoring is automated, teams can deploy updates with higher frequency. The shift from periodic audits to continuous monitoring is what separates leaders from laggards in the current economy. Leading firms are now implementing "compliance-as-code," where regulatory rules are written directly into the deployment scripts.
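A minimal sketch of compliance-as-code, assuming each model ships with a manifest that a deployment pipeline checks before release. The required fields, tier names, and the oversight rule are hypothetical examples of rules a team might encode, not requirements quoted from any regulation.

```python
from typing import Dict, List

# Hypothetical rules a team might encode; not quoted from any regulation.
REQUIRED_FIELDS = ["owner", "risk_tier", "intended_use", "last_bias_test"]
BLOCKED_TIERS = {"prohibited"}


def gate(manifest: Dict[str, str]) -> List[str]:
    """Return policy violations; an empty list means the deploy may proceed."""
    violations = [f"missing field: {name}" for name in REQUIRED_FIELDS
                  if not manifest.get(name)]
    tier = manifest.get("risk_tier")
    if tier in BLOCKED_TIERS:
        violations.append("risk tier is prohibited")
    if tier == "high" and not manifest.get("human_oversight_plan"):
        violations.append("high-risk model needs a human oversight plan")
    return violations


def main(manifest: Dict[str, str]) -> int:
    """Print each violation and return a process exit code for the pipeline."""
    problems = gate(manifest)
    for problem in problems:
        print("BLOCKED:", problem)
    return 1 if problems else 0


manifest = {"owner": "credit-team", "risk_tier": "high",
            "intended_use": "loan pre-screening", "last_bias_test": "2026-05-01"}
print(main(manifest))
```

In a CI job, calling `sys.exit(main(manifest))` turns a nonzero return value into a failed pipeline step, which blocks the deploy until the manifest is fixed.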

Navrina Singh, the CEO of Credo AI, has often argued that the future of AI is about alignment. Making these tools beneficial at every level requires a deep integration of human intent and machine execution. This alignment is only possible when you have the tools to measure it accurately. Without a dedicated governance stack, you are essentially flying blind in a storm of increasing regulatory scrutiny.

Periodic Audits (The Old Way)

- Manual evidence collection every 6-12 months

- Small data samples that may miss edge cases

- Reactive response to bias or model drift

- High risk of non-compliance between cycles

Continuous Governance (The 2026 Standard)

- Real-time monitoring and automated alerts

- 100% data analysis for full transparency

- Proactive guardrails and runtime enforcement

- Constant state of audit readiness for regulators
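Continuous monitoring for drift is often implemented with the Population Stability Index (PSI), which compares the distribution of a model's inputs or scores at audit time with live traffic over the same bins. The sketch below assumes pre-binned proportions; the 0.1 and 0.25 cut-offs are a widely used rule of thumb rather than a regulatory standard, and the distributions are made-up example data.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index over matching bins of two proportion vectors."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def classify(score: float) -> str:
    """Rule-of-thumb bands commonly used with PSI."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate shift"
    return "significant shift: alert"


baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution when the model was audited
live = [0.10, 0.20, 0.30, 0.40]      # same bins, most recent week of traffic
score = psi(baseline, live)
print(round(score, 3), classify(score))  # 0.228 moderate shift
```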

Infrastructure decisions in 2026 are increasingly driven by survivability under regulatory pressure. Every layer of the AI architecture, from the data pipelines to the final user interface, must demonstrate accountability. This is especially true for companies utilizing Generative UI frameworks, where the content shown to the user is created on the fly. Ensuring that these dynamic interfaces don't inadvertently violate fairness standards requires a governance layer that can keep pace with the generation speed.

Future-Proofing Your Audit Readiness

Preparation for a 2026 audit should have started yesterday, but it is not too late to course-correct. The first step is conducting a comprehensive inventory of every AI system currently in use, including "shadow AI" tools that employees may have adopted without IT approval. Platforms like Holistic AI and Credo AI offer discovery features that can help identify these hidden risks. Once your inventory is clear, you can begin classifying each system by its risk level under the EU AI Act's tiers: prohibited, high-risk, limited-risk (subject to transparency obligations), and minimal-risk.
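As a starting point for the inventory step, a first-pass triage can be expressed as a simple lookup from use case to EU AI Act risk tier, with unapproved tools flagged as shadow AI. The sketch below is deliberately simplified and hypothetical: the system names, use-case labels, and their tier assignments are illustrative, and a real classification needs legal review against the Act's annexes rather than a keyword table.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical, simplified tier lookup; real classification needs legal review.
PROHIBITED = {"social_scoring"}
HIGH_RISK = {"recruitment", "credit_scoring", "exam_proctoring", "critical_infrastructure"}
TRANSPARENCY = {"chatbot", "synthetic_media"}


@dataclass
class AISystem:
    name: str
    use_case: str
    approved_by_it: bool


def risk_tier(system: AISystem) -> str:
    """Map a use-case label to a first-pass EU AI Act risk tier."""
    if system.use_case in PROHIBITED:
        return "prohibited"
    if system.use_case in HIGH_RISK:
        return "high"
    if system.use_case in TRANSPARENCY:
        return "limited"
    return "minimal"


def shadow_ai(inventory: List[AISystem]) -> List[str]:
    """Names of systems in use without IT approval."""
    return [s.name for s in inventory if not s.approved_by_it]


inventory = [
    AISystem("cv-screener", "recruitment", True),
    AISystem("support-bot", "chatbot", True),
    AISystem("browser-summarizer", "summarization", False),
]
for s in inventory:
    print(f"{s.name}: {risk_tier(s)}")
print("shadow AI:", shadow_ai(inventory))
```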

Investing in the right tools is only half the battle. You also need to foster a culture where compliance and performance are seen as two sides of the same coin. This involves training for both developers and business leaders on the ethical implications of the systems they build and deploy. As AI transitions from a standalone tool to the core economic infrastructure of the enterprise, the ability to audit and govern these systems will become your most important competitive moat.

Focusing on the long-term ROI of governance is the best way to secure board-level buy-in. While the upfront costs of these platforms can be significant, the cost of a single major compliance failure or a public bias scandal is far higher. In the high-stakes environment of 2026, the winners will be the organizations that can prove their AI is not just intelligent, but also responsible and fully auditable.