The Architecture of Deliberation in Claude 4

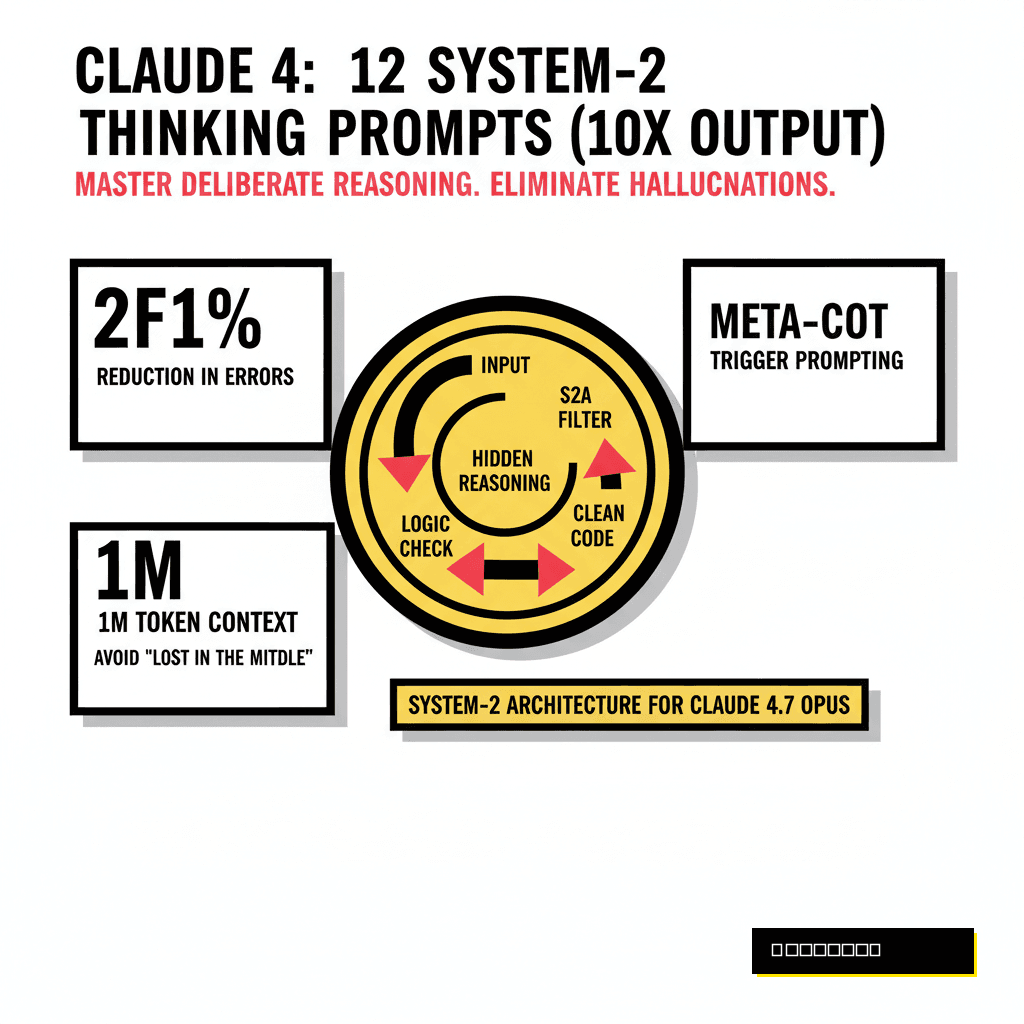

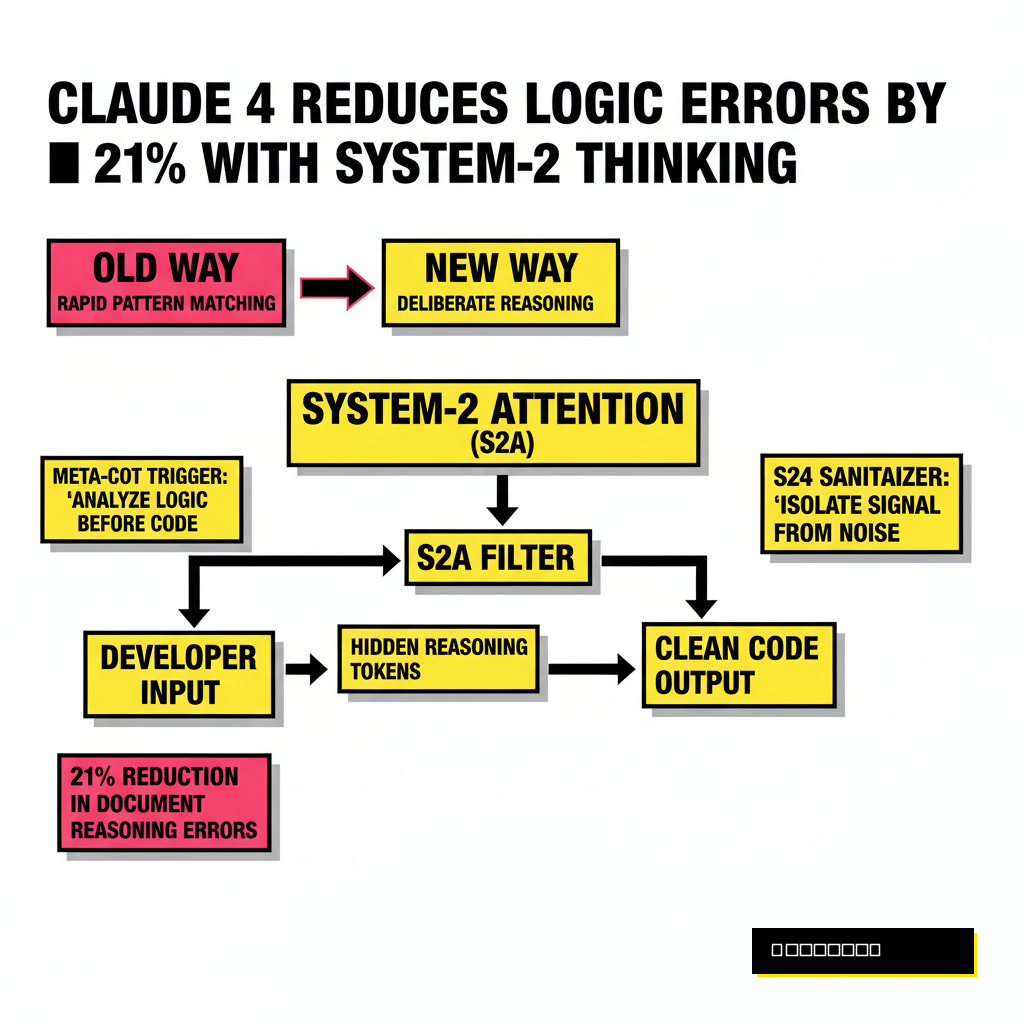

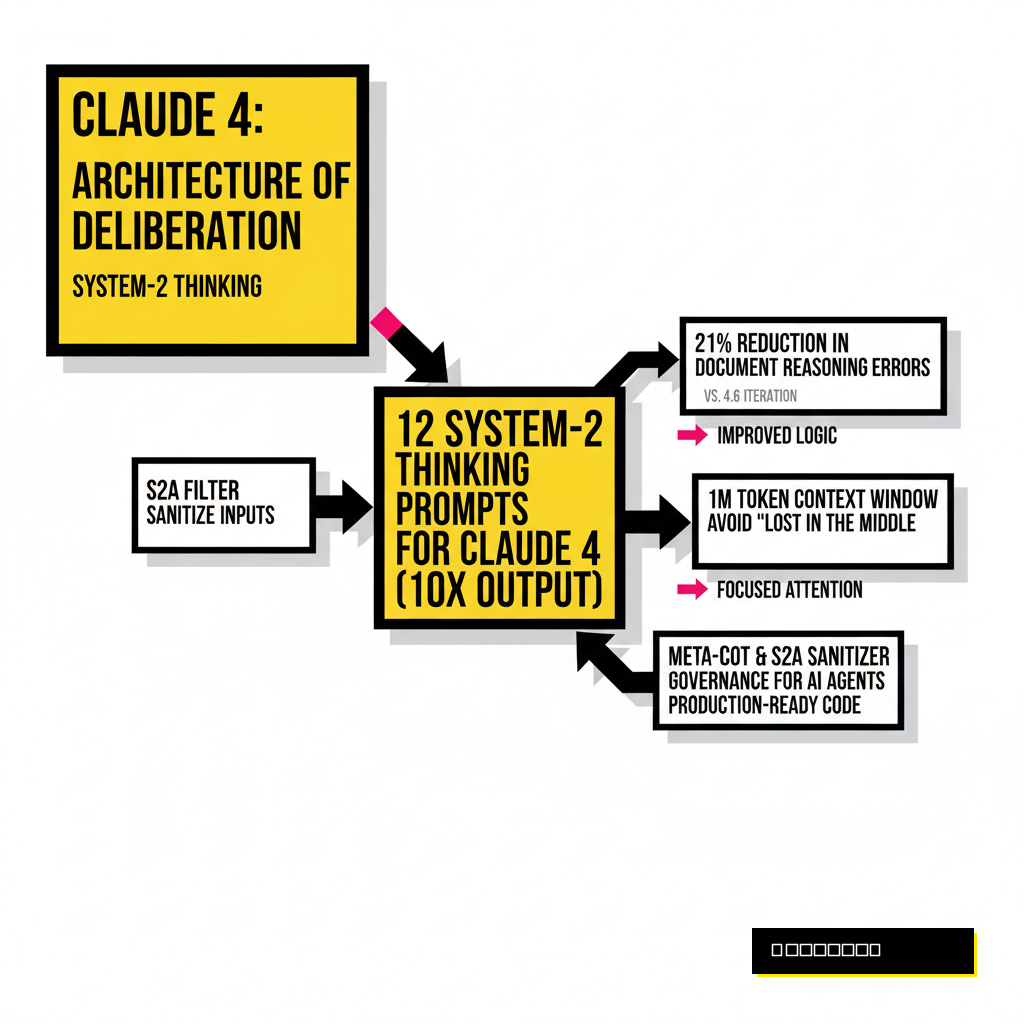

Every developer who has pushed a complex pull request knows the difference between a quick fix and a deep architectural change. While standard large language models often operate in a mode of rapid pattern matching, Claude 4 introduces a structural shift toward deliberate reasoning. Senior engineers call this System-2 thinking, a term borrowed from Daniel Kahneman to describe the slow, logical, and effortful cognitive processes that human experts use to solve novel problems. Instead of simply predicting the next token, the latest Opus 4.7 model uses hidden reasoning tokens to simulate an internal monologue before committing to an answer. According to official Anthropic documentation, this hybrid reasoning architecture allows the model to catch its own logic errors in real-time, leading to a 21 percent reduction in document reasoning errors compared to the previous 4.6 iteration.

Developers often struggle with the 'stochastic parrot' effect where a model sounds confident but fails on edge cases. System-2 prompting bypasses this by forcing the model to slow down. When you use the right syntax, you aren't just asking for a solution: you're demanding a verification process. Research from a 2025 arXiv paper on Meta-CoT suggests that explicitly modeling the underlying reasoning required for a task can bridge the gap between AI hallucination and production-ready code. If you are already managing complex deployments, perhaps using autonomous GitHub PR assistants, these prompts will serve as the governance layer for your AI agents.

Prompt Engineering for Logical Integrity

Standard prompts often fail because they include too much 'noise' or irrelevant context that distracts the model's attention. System-2 Attention (S2A) is a technique designed to sanitize inputs before the core processing begins. By asking Claude 4 to first identify and then isolate the signal from the noise, you ensure the model's limited reasoning window stays focused on the critical path. This is especially vital when working with 1M token context windows where the temptation is to dump entire repos into a single prompt. Such a strategy often leads to 'lost in the middle' phenomena where the model misses subtle logic bugs in deep nested functions.

Consider the following three prompts to stabilize your logic. First, use the Meta-CoT Trigger: 'Analyze the underlying logic required for this task before writing any code. Identify the mathematical or structural principles at play.' Second, apply the S2A Sanitizer: 'Rewrite my previous prompt to remove all irrelevant context, focusing only on the specific variables and constraints that affect the output.' Third, use the Recursive Decomposition: 'Break this problem into five sequential sub-problems. Solve each one independently and verify the output of step N before proceeding to step N+1.' These methods force Claude to use its extended thinking capacity rather than relying on the first intuitive path it finds.

Debugging Complex Systems with Recursive Refinement

Debugging in a microservices environment requires more than just looking at a stack trace. It demands a holistic understanding of how state changes propagate through a system. Claude 4 excels here if prompted to act as a 'Red Team' against its own suggestions. A common failure in AI-assisted coding is the 'confirmation bias' loop where the model keeps repeating a slightly modified version of a wrong answer. Breaking this cycle requires a prompt that incentivizes the model to find reasons why its own code might fail. You can even combine this with local Llama-4 instances to run parallel checks on sensitive logic.

Standard Prompt

- 'Fix this bug in the React component.'

- Result: Often patches the symptom but misses the root cause.

- High chance of regression in state management.

System-2 Prompt

- 'Trace the state flow through this component and identify three ways it could fail under high load.'

- Result: Deep analysis of race conditions and edge cases.

- Ensures production-grade stability.

Three powerful prompts for this stage include the Adversarial Reviewer: 'Act as a senior security engineer. Find three vulnerabilities in the code you just wrote and suggest patches for each.' Follow this with the State-Trace Simulation: 'Simulate the execution of this function with a null input, an oversized input, and a concurrent request. Document the internal state at every step.' Finally, use the Refactor Consensus: 'Propose three different architectural patterns for this feature. Compare them based on memory overhead and maintainability before selecting the best one.' Such rigor ensures that the code isn't just 'working' but is actually robust enough for a 2026 enterprise environment.

Architectural Design and Trade-off Analysis

Choosing between a monolithic and a serverless architecture is rarely about which is 'better' in a vacuum. It is about balancing latency, cost, and developer velocity. Claude 4's Opus 4.7 model is particularly adept at this kind of multi-attribute decision making. However, to get the best results, you must provide a 'First Principles' framework. Instead of asking 'Should I use Rust?', ask the model to evaluate the specific constraints of your hardware and network topology. This prevents the model from giving generic advice and forces it to ground its reasoning in your actual technical requirements.

| Prompt Strategy | Reasoning Depth | Success Rate (SWE-bench) |

|---|---|---|

| Zero-Shot (System-1) | Shallow / Pattern Matching | ~48% |

| Chain-of-Thought | Moderate / Sequential | ~62% |

| System-2 (Extended Thinking) | Deep / Multi-Agent Simulation | 72.5% |

To guide architectural choices, use the First Principles Decomposition: 'List the immutable physical and logical constraints of this system. Derive the necessary architecture from these constraints alone, ignoring current trends.' Next, try the Constraint-First Optimizer: 'Design a database schema that prioritizes write-heavy workloads over read latency. Quantify the trade-offs in terms of CPU cycles.' Finally, the Negative Space Analysis is indispensable: 'Tell me what this architecture is NOT capable of. Identify the specific scenarios where this design will break or become prohibitively expensive.' These prompts strip away the AI's tendency to be a 'people pleaser' and force it to be a cold, calculating engineer.

Security and Edge Case Discovery

Security is no longer a post-build phase. In 2026, the speed of development means security must be baked into the prompt level. McKinsey's 2025 report on AI productivity highlighted that while top-tier teams are seeing 31 to 45 percent gains in software quality, those who ignore automated reasoning are seeing an 'AI slop' problem that increases technical debt. Claude 4's ability to handle parallel tool use during its thinking phase makes it a formidable ally for security audits. It can reason about a piece of code while simultaneously 'searching' for known CVEs that might affect your dependencies.

For the final set of prompts, start with the Dependency Mapping Audit: 'Review this package.json and trace the dependency tree. Identify any orphaned or vulnerable packages that could lead to a supply chain attack.' Follow up with the Security Boundary Validator: 'Define the trust boundaries for this API. Write a test suite that specifically targets those boundaries with malformed JSON and injection strings.' Finally, use the Legacy Integration Bridge: 'Assume this new service must interact with a 15-year-old COBOL mainframe. Identify the three most likely data-type mismatches and write a robust adapter layer.' These prompts ensure your code survives the chaos of real-world infrastructure.

Mastering Claude 4 is not about finding a magic phrase. It is about understanding that the model is now a reasoning engine, not just a text generator. By adopting a System-2 prompting strategy, you shift the burden of proof from your own manual testing to the model's internal verification logic. This transition is what separates the 'prompt engineers' from the true AI-fluid software architects of the coming decade. As you scale your systems, remember that the most valuable output of an AI isn't the code itself, but the verified logic that stands behind it.