The Shift from Chatbots to Agentic Assistants in 2026

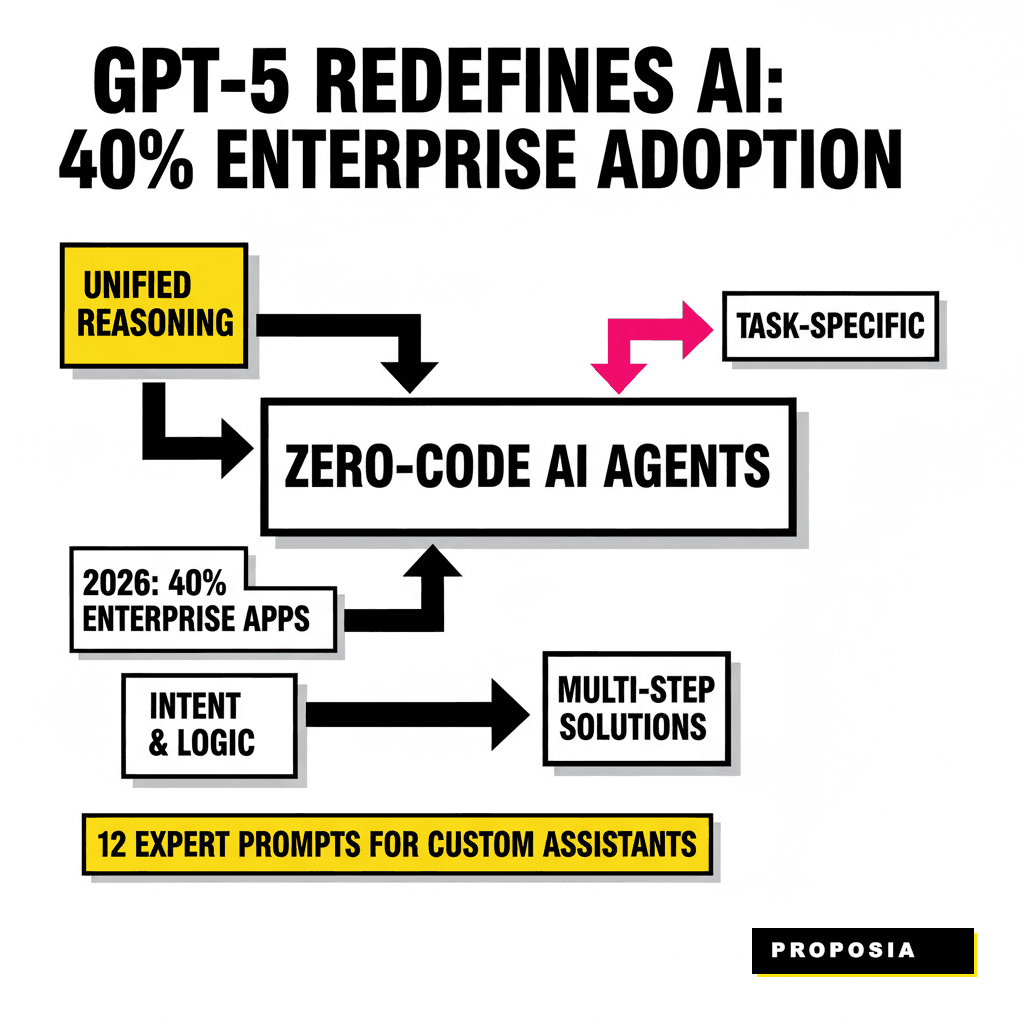

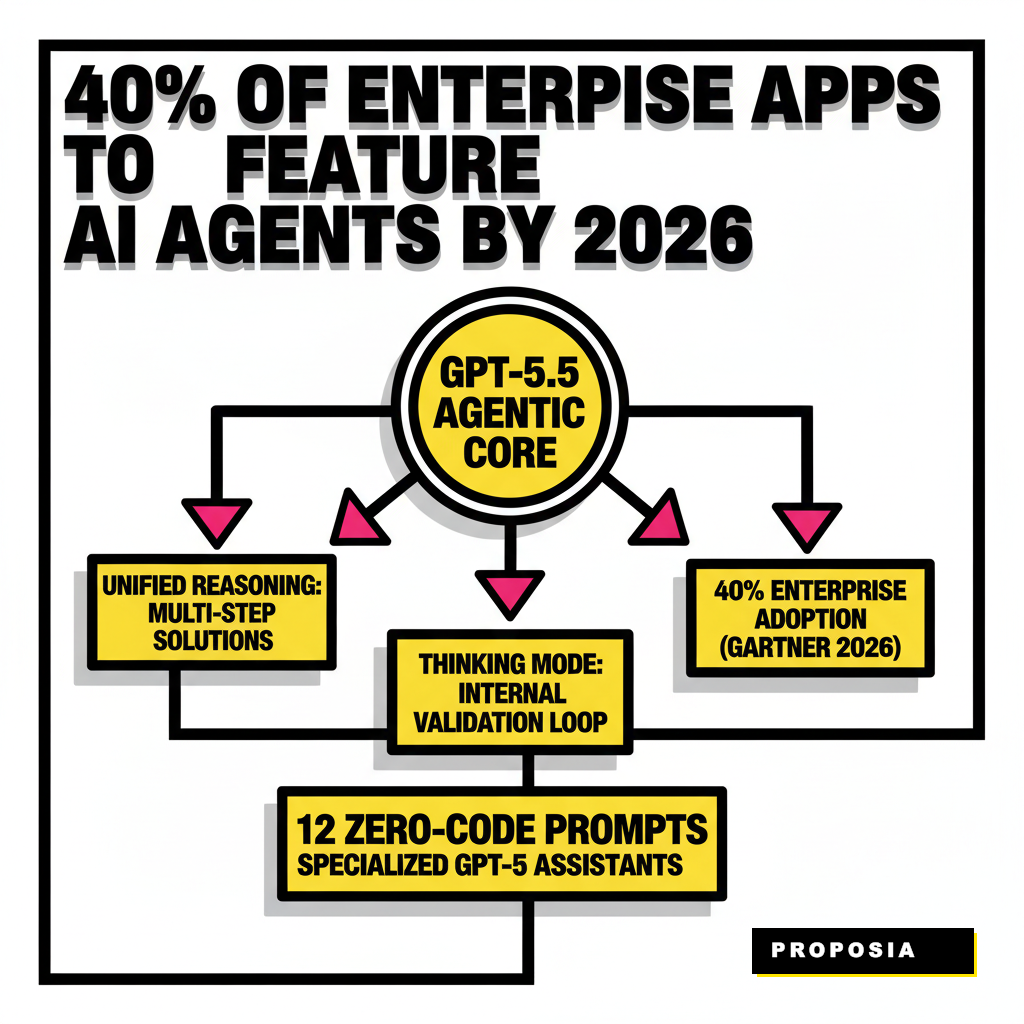

Artificial intelligence moved beyond simple chat interfaces this year. As of April 2026, the release of GPT-5.5 has redefined what a custom assistant can do without a single line of Python. These systems now use unified reasoning: rather than only predicting the next word, they plan multi-step solutions. In 2025, Gartner predicted that 40% of enterprise applications would feature task-specific AI agents by 2026, up from less than 5% in 2025, a massive jump from the experimental pilots of previous years.

Building a specialized assistant today requires a focus on intent and logic rather than syntax. GPT-5 excels at understanding the nuances of a user's goal, often anticipating needs through its updated memory architecture. Instead of broad queries, experts use highly structured prompts to ground the model in specific roles. This approach ensures the assistant remains within its operational boundaries while maximizing its reasoning capabilities.

Architecting Reasoning: The Blueprint for a GPT-5 Assistant

Specialized assistants function best when they follow a clear cognitive path. GPT-5 introduces a thinking mode that allows the model to pause and verify its own logic before outputting a result. This internal validation loop reduces hallucinations and improves the quality of complex outputs like legal audits or market forecasts. Users can encourage this behavior by embedding recursive instructions into their system prompts, for example by telling the assistant to draft an answer, check it against its sources, and revise before responding.

Successful builders treat their prompts as a set of operating instructions for a digital employee. You're defining the persona, the available tools, and the success criteria for every interaction. Integrating these assistants into your existing stack often involves agentic workflows, for example to automate your content calendar or other operational hubs. This structural clarity helps the AI navigate massive datasets without losing track of the primary objective.
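One way to keep persona, tools, and success criteria explicit is to store them as structured data and render the system prompt from that. The Python sketch below is illustrative only: `AssistantSpec` and its field names are hypothetical and not part of any GPT-5 or vendor API, and the rendered layout is just one reasonable choice.

```python
from dataclasses import dataclass, field


@dataclass
class AssistantSpec:
    """Hypothetical container for the three parts of an operating brief."""

    persona: str
    tools: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    def to_system_prompt(self) -> str:
        """Render the spec as a plain-text system prompt."""
        lines = [f"Role: {self.persona}", "", "Available tools:"]
        # Fall back to an explicit "(none)" entry so no section is left empty.
        lines += [f"- {tool}" for tool in self.tools] or ["- (none)"]
        lines += ["", "Success criteria:"]
        lines += [f"- {item}" for item in self.success_criteria] or ["- (none)"]
        return "\n".join(lines)


if __name__ == "__main__":
    spec = AssistantSpec(
        persona="Document Auditor",
        tools=["file_search"],
        success_criteria=["Cite the page number for every finding"],
    )
    print(spec.to_system_prompt())
```

The rendered text can be pasted into the instruction field of a no-code builder, so the spec stays the single place where the role definition is edited.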

Strategic Research and Synthesis Prompts

Research assistants in the GPT-5 era must handle massive context windows. Modern prompts focus on cross-referencing multiple sources while identifying gaps in the available data. These assistants don't just summarize; they synthesize disparate information into actionable intelligence. Using the thinking mode, the following prompts allow your assistant to act as a high-level analyst for your team.

- Recursive Research Agent: "Act as a Lead Researcher. For every query, search the web for three primary sources, identify conflicting viewpoints, and then search specifically for data that resolves those conflicts before presenting a final synthesis."

- Cross-Document Synthesis Engine: "You are a Document Auditor. Analyze the uploaded PDF files and identify three areas where the internal policies contradict the 2026 industry regulations. Provide a table of risks and suggested corrections."

- Market Intelligence Forecaster: "Operate as a Market Strategist. Analyze current tech spend trends from 2025 to 2026 and predict the saturation point for AI server demand. Use the recent 2026 compute crunch report to ground your projections."

- Strategic Red-Teamer: "Assume the role of a Critical Strategist. Review my proposed business plan and find five ways a competitor could exploit our supply chain weaknesses. Rank each threat by probability and impact."

- Sentiment & Intent Mapper: "You are a Customer Experience Analyst. Process the last 100 support transcripts and map the emotional journey of the customers. Identify the exact moment where frustration peaks and suggest a script change to mitigate it."

- Executive Summary Engine: "Act as a Chief of Staff. Monitor the real-time feed of company updates and generate a 3-point daily brief for the CEO. Highlight only items requiring immediate decision-making or financial approval."

| Standard Prompting | Reasoning-First Prompting |
|---|---|
| Provides direct answers based on training data. | Uses recursive logic to find and resolve conflicts. |
| Often misses recent market shifts. | Integrates real-time web data and internal files. |
| Single-pass response without verification. | Verifies its own output before the user sees it. |
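The difference between a single-pass response and a verified one can be approximated outside the model with a draft, check, and revise loop. This is a minimal sketch and not a description of how GPT-5's thinking mode works internally: `call_model` is a hypothetical stand-in for whatever function sends a prompt to your assistant and returns its text, and the prompt wording is an assumption.

```python
from typing import Callable


def answer_with_verification(
    question: str,
    call_model: Callable[[str], str],  # hypothetical: prompt in, text out
    max_rounds: int = 2,
) -> str:
    """Draft an answer, then check and revise it up to max_rounds times."""
    draft = call_model(f"Answer the question.\n\nQuestion: {question}")
    for _ in range(max_rounds):
        verdict = call_model(
            "Check the draft for factual or logical errors. "
            "Reply with exactly 'OK' if none are found, "
            "otherwise list the problems.\n\n"
            f"Question: {question}\nDraft: {draft}"
        )
        if verdict.strip().upper() == "OK":
            break  # the checker found nothing to fix
        draft = call_model(
            "Revise the draft to fix the listed problems.\n\n"
            f"Question: {question}\nDraft: {draft}\nProblems: {verdict}"
        )
    return draft
```

Capping the loop with `max_rounds` keeps cost predictable; if the checker never replies "OK", the last revision is returned as-is.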

Operational and Data Workflow Prompts

Data processing tasks have shifted from manual entry to automated orchestration. GPT-5 assistants can now operate software and move across different tools to finish a task. This capability is particularly useful for teams looking to scale without increasing headcount. According to McKinsey's 2025 State of AI report, 71% of organizations now use generative AI in at least one business function, often to bridge the gap between legacy data and modern insights.

The workflow and data prompts listed in the next section let your assistant handle the heavy lifting of data analysis and reporting. Setting clear parameters on data privacy and sovereign AI principles helps keep the assistant compliant. This matters most for businesses building sovereign AI infrastructure, for example on Nvidia Rubin architectures. The goal is to move the human from the position of a 'doer' to a 'reviewer' of AI-generated work.

Technical and Compliance Specialization Prompts

Specialized assistants now cover technical domains that previously required dedicated engineering teams. From auditing codebases to ensuring regulatory compliance, GPT-5 acts as a force multiplier for technical leads. The latest models excel at agentic coding, solving complex GitHub issues end-to-end with a success rate of nearly 59%. This leap in capability allows beginners to manage sophisticated technical environments through natural language alone.

Compliance is another area where specialized assistants shine. As global regulations tighten, maintaining an audit trail becomes a full-time job. A custom assistant can monitor every interaction and flag potential violations in real time. This is why many firms are now turning to dedicated AI governance and compliance audit tools to safeguard their operations. The prompts below provide a starting point for these high-stakes roles.

- Automated Workflow Orchestrator: "You are a Systems Architect. Review the current project milestones and use the available Zapier actions to create a sequence that updates the team on Slack when a GitHub pull request is approved."

- Synthetic Data Generator: "Act as a Data Scientist. Generate a synthetic dataset of 500 customer profiles including purchase history and support intent. Ensure the data follows a realistic bell curve distribution for ages 18-65 and contains no personally identifiable information."

- Code Auditor for Sovereign AI: "Assume the role of a Security Engineer. Review the provided code for any external API calls that might leak data outside of our sovereign cloud environment. Suggest local alternatives for each instance."

- Generative UI Design Consultant: "You are a UI/UX Specialist. Suggest a dynamic layout for a landing page based on the 9 generative UI frameworks for personalization. Focus on accessibility and dark-mode optimization."

- Compliance & Governance Monitor: "Act as a Compliance Officer. Monitor the incoming data stream and flag any response that mentions competitor pricing or makes a definitive financial promise. Provide a weekly report on flagged incidents."

- Ethical Safety Scripter: "You are an Ethics Lead. Draft a set of internal guardrails for this assistant that prevents it from providing medical advice while still allowing it to summarize general health trends from the CDC website."
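To make the Synthetic Data Generator prompt concrete, here is a rough Python sketch of the kind of dataset it asks for. Everything in it is an assumption chosen for illustration: the field names, the intent labels, and the bell curve parameters (mean 41.5, standard deviation 8). Ages are redrawn until they fall inside 18-65, and the records contain only made-up identifiers.

```python
import random

# Illustrative intent labels; replace with categories from your own support taxonomy.
INTENTS = ["billing", "returns", "technical", "account"]


def synthetic_profiles(n: int = 500, seed: int = 0) -> list[dict]:
    """Generate n fake customer profiles with roughly bell-shaped ages in 18-65."""
    rng = random.Random(seed)  # seeded so the dataset is reproducible
    profiles = []
    for i in range(n):
        # Draw from a normal distribution and redraw anything outside the range.
        age = round(rng.gauss(41.5, 8.0))
        while not 18 <= age <= 65:
            age = round(rng.gauss(41.5, 8.0))
        profiles.append(
            {
                "customer_id": f"SYN-{i:04d}",  # synthetic ID, not tied to a real person
                "age": age,
                "purchases_last_year": rng.randint(0, 20),
                "support_intent": rng.choice(INTENTS),
            }
        )
    return profiles


if __name__ == "__main__":
    sample = synthetic_profiles()
    print(len(sample), sample[0])
```

A script like this is also a useful cross-check on assistant-generated data: comparing the age histogram of both sets shows quickly whether the assistant actually followed the distribution constraint.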

| Assistant Role | Primary Tool | Expected Outcome |
|---|---|---|
| Workflow Orchestrator | API Actions | Zero-manual-touch task completion |
| Compliance Monitor | Thinking Mode | Real-time risk mitigation and logs |
| Design Consultant | Visual Search | Context-aware UI prototypes |
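The Compliance Monitor role can be backed by a simple rule-based pre-filter that runs alongside the assistant. The sketch below flags the two conditions named in the Compliance & Governance Monitor prompt: competitor pricing and definitive financial promises. The competitor names and keyword patterns are placeholders, and keyword matching of this kind will miss paraphrases, so treat it as a first pass rather than a complete control.

```python
import re
from collections import Counter

COMPETITORS = ["Acme", "Globex"]  # placeholder names, not real configuration

# Pricing vocabulary or a dollar amount such as "$5".
PRICE_WORDS = re.compile(r"\b(?:price|prices|pricing|cost|costs)\b|\$\d", re.IGNORECASE)
# Phrases that read as a definitive promise.
PROMISE = re.compile(r"\b(?:guarantee(?:d|s)?|risk-free|will definitely)\b", re.IGNORECASE)


def flag_response(text: str) -> list[str]:
    """Return the list of rule names that the text violates."""
    flags = []
    mentions_competitor = any(
        re.search(rf"\b{re.escape(name)}\b", text, re.IGNORECASE)
        for name in COMPETITORS
    )
    if mentions_competitor and PRICE_WORDS.search(text):
        flags.append("competitor_pricing")
    if PROMISE.search(text):
        flags.append("financial_promise")
    return flags


def weekly_report(responses: list[str]) -> Counter:
    """Count how often each rule fired across a batch of responses."""
    return Counter(flag for text in responses for flag in flag_response(text))
```

Running `weekly_report` over a week of logged responses gives the per-rule counts that the prompt asks the assistant to summarize.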

Governance and Scaling Your Custom AI Fleet

Managing a single assistant is simple, but scaling to a fleet of specialized agents requires a centralized governance strategy. Organizations in 2026 are moving away from fragmented AI use toward unified platforms that offer oversight. This shift ensures that every agent follows the same ethical and operational guidelines, regardless of its specific task. Without these controls, the risk of runaway costs or data leakage increases significantly.

Setting up a 'Master Controller' assistant can help manage this complexity. This primary agent oversees the outputs of other specialized assistants, acting as a final quality check before any data leaves the internal network. This hierarchical approach mirrors human management structures and provides a clear audit trail for regulators. Scaling responsibly means prioritizing safety and reliability over pure speed, ensuring your AI initiatives deliver long-term value.
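The 'Master Controller' pattern can be sketched as a gate that every specialized assistant's output passes through, with each decision written to an audit log. This is a simplified illustration: the class name, the check functions, and the log fields are all hypothetical, and in practice some of the checks could themselves be calls to a reviewing model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

Check = Callable[[str], bool]  # returns True when the output passes


@dataclass
class MasterController:
    """Hypothetical final quality gate for outputs from specialized agents."""

    checks: dict[str, Check]
    audit_log: list[dict] = field(default_factory=list)

    def review(self, agent: str, output: str) -> bool:
        """Run every check, record the decision, and return whether it was approved."""
        failed = [name for name, check in self.checks.items() if not check(output)]
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "agent": agent,
                "approved": not failed,
                "failed_checks": failed,
            }
        )
        return not failed


if __name__ == "__main__":
    controller = MasterController(
        checks={
            "no_email_addresses": lambda text: "@" not in text,
            "under_2000_chars": lambda text: len(text) <= 2000,
        }
    )
    print(controller.review("research_agent", "Summary of Q1 findings."))
    print(controller.audit_log)
```

Because rejected outputs are logged as well as approved ones, the log doubles as the audit trail described above.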