The 2026 Compliance Cliff

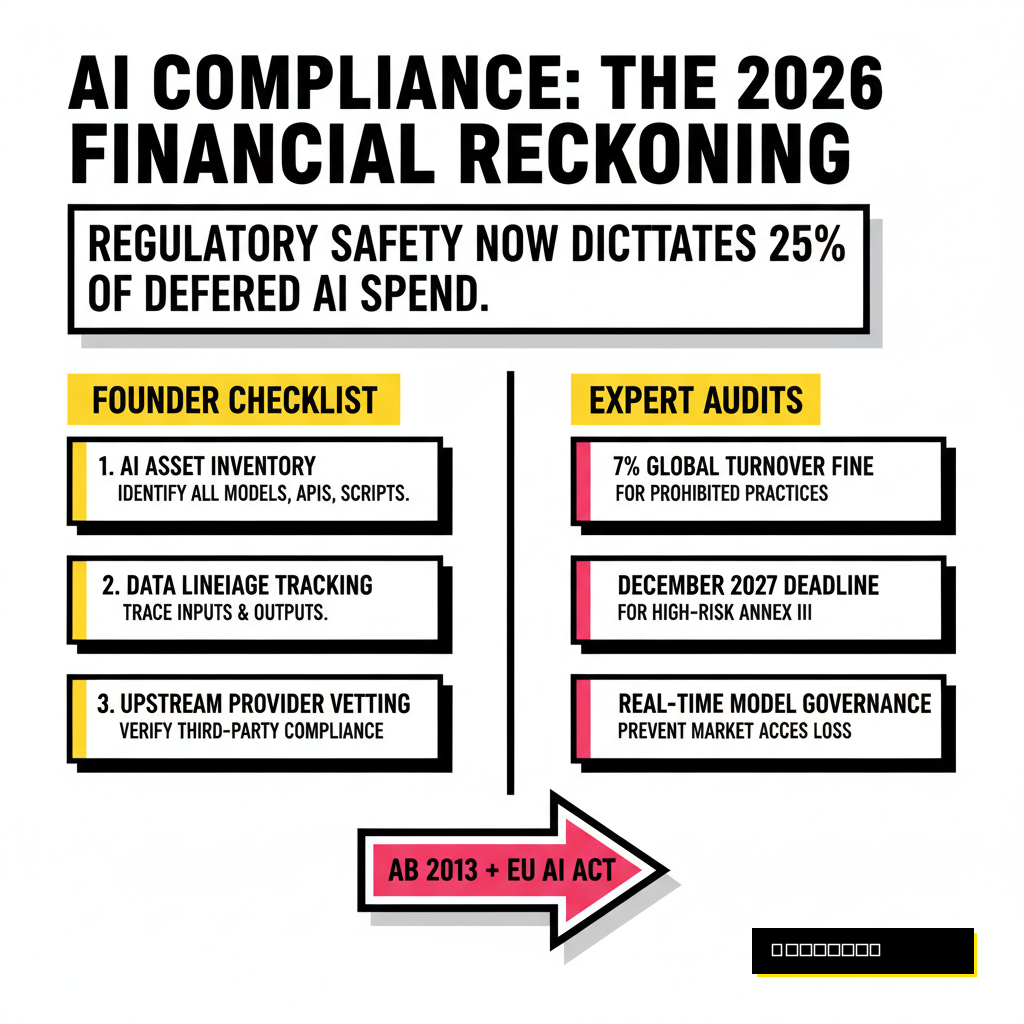

Founders operating in the current AI environment face a brutal reckoning. While 2024 and 2025 were years of frantic experimentation, 2026 has become the year of technical accountability. Financial rigor now dictates every deployment. According to a 2026 Forrester report, enterprises are deferring 25% of their planned AI spend to 2027 because they cannot prove immediate return on investment or guarantee regulatory safety. The days of shipping a wrapper and hoping for the best are over. You are now expected to treat your model weights with the same level of scrutiny as your bank account.

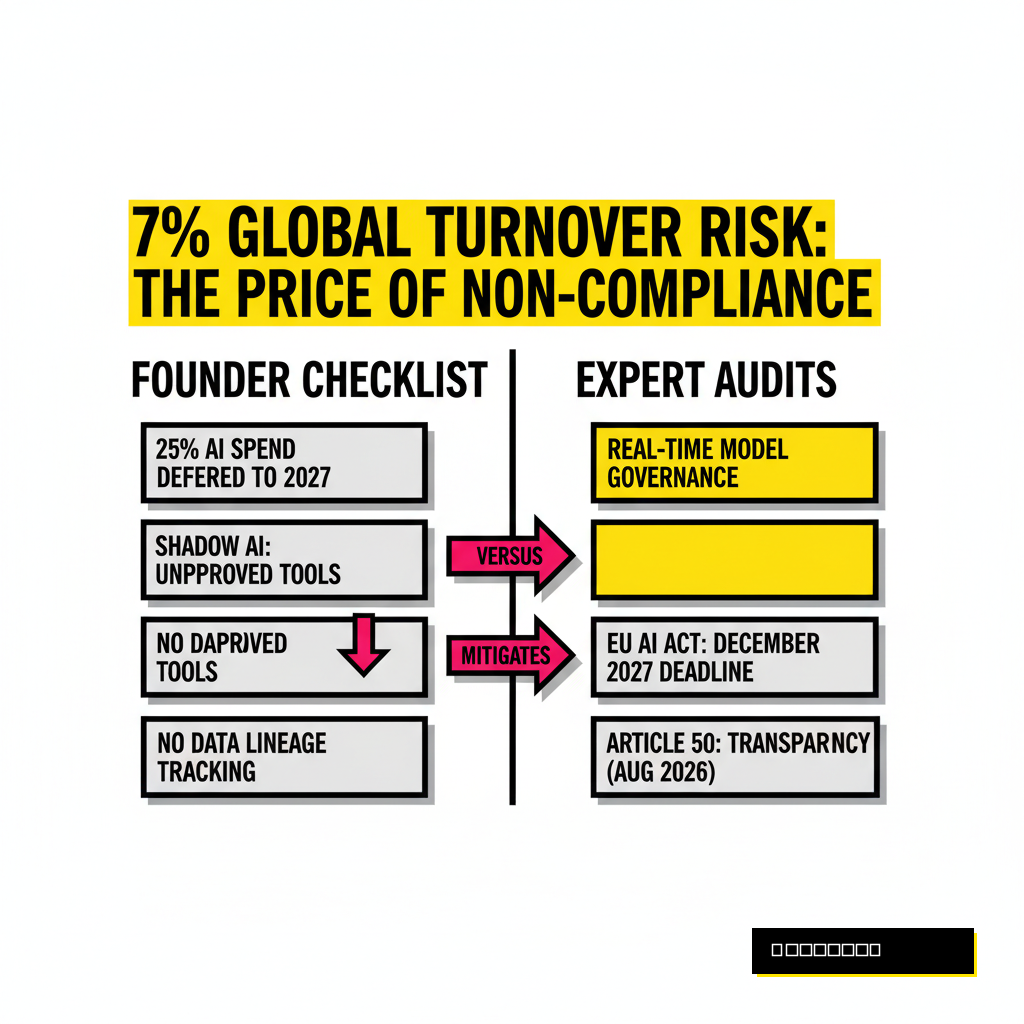

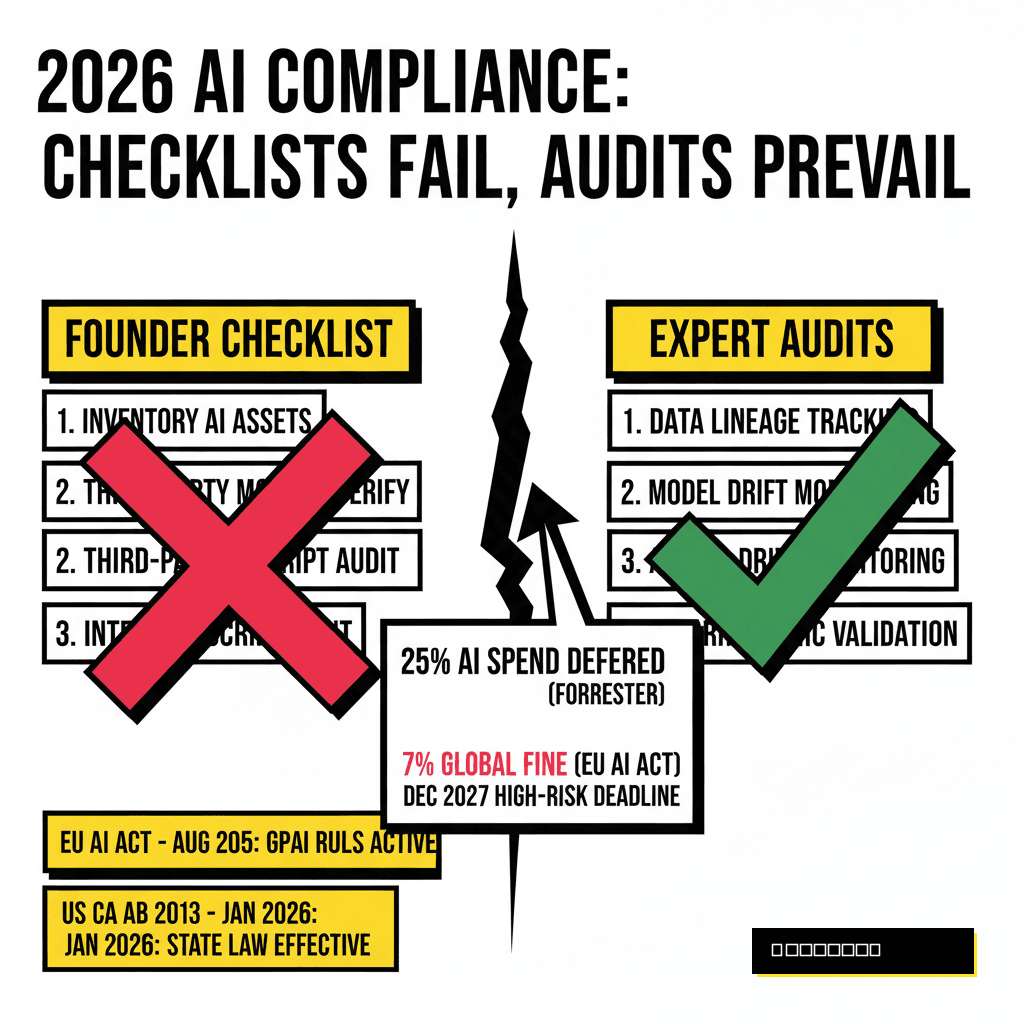

Business leaders often mistake compliance for a list of boxes to check before a product launch. This narrow view ignores the reality of the EU AI Act and emerging US state laws like California's AB 2013, which became effective on January 1, 2026. These regulations demand more than a signature. They require a living, breathing system of governance that tracks data lineage and model drift in real time. If you ignore these requirements, you risk more than just a fine. You risk a complete loss of market access and a collapse in enterprise trust.

The EU AI Act and the Omnibus Shift

Europe remains the global regulator of record, but the rules changed recently. In April 2026, the European Commission advanced the Digital Omnibus proposal, which significantly altered the implementation timeline for high-risk systems. While transparency requirements under Article 50 remain targeted for August 2026, the core obligations for stand-alone high-risk systems listed in Annex III have moved to December 2, 2027. This delay offers a temporary reprieve, yet it also raises the bar for what constitutes acceptable preparation during the transition.

General-purpose AI model providers have no such luxury. Their obligations became fully applicable in August 2025. If you are building on top of these models, you must verify that your upstream providers comply with the transparency and copyright rules already in force. National competent authorities are now active in every EU member state. They possess the power to levy fines up to 7% of global annual turnover for violations involving prohibited AI practices. Staying compliant requires a precise understanding of where your system sits on the risk spectrum.

A Founder’s Simple Checklist for Initial Triage

Early-stage startups cannot afford a million-dollar audit on day one. You need a pragmatic starting point that keeps the regulators at bay while you find product-market fit. Effective compliance starts with an honest inventory of your AI assets. You must identify every third-party model, API, and internal script that contributes to your output. Most organizations fail here because of shadow AI, where employees utilize unapproved tools without oversight. Establishing a clear policy on approved models is the first step toward safety.

Data transparency is the second pillar of basic hygiene. California's training data laws now require developers to disclose a high-level summary of the datasets used in their systems. You should document your data sources, cleaning processes, and any synthetic data generation methods used. This documentation serves as your first line of defense during a preliminary inquiry. To minimize your compliance surface area, consider exploring our 6 Lightweight LLMs guide, which highlights models that reduce external data exposure.

The Simple Checklist

- Maintain a full AI asset and model inventory

- Document data lineage and cleaning steps

- Implement user-facing AI disclosures

- Establish a clear shadow AI usage policy

- Adopt basic bias testing for outputs

Expert Audit

- Conduct adversarial red teaming sessions

- Perform automated drift and bias monitoring

- Verify third-party model supply chain security

- Complete ISO/IEC 42001 certification

- Establish formal algorithmic accountability

Why Algorithmic Auditing is the New Due Diligence

Checklists provide a false sense of security for high-scale applications. When you move from a pilot to an enterprise-grade system, the technical stakes escalate. Advanced algorithmic auditing is no longer a luxury for big tech. It is a mandatory requirement for any system affecting employment, credit, or critical infrastructure. NIST recently released a concept note on April 7, 2026, regarding a new profile for Trustworthy AI in Critical Infrastructure, signaling a push for even more rigorous engineering standards.

Audit costs reflect this complexity. According to recent industry benchmarks, an enterprise-grade compliant AI system can cost between $250,000 and $1,000,000 to develop and validate correctly. Specialized talent drives these numbers. A dedicated AI audit service often maintains a monthly payroll exceeding $56,000 just to support the necessary PhD-level expertise. These experts analyze your model for adversarial robustness, ensuring that malicious inputs cannot force your system into harmful behavior. If you are handling sensitive data, our Local LLM Handbook explains how to implement these controls at the local level to maintain maximum privacy.

Bridging Strategy with Technical Reality

Success in 2026 requires moving away from manual spreadsheets. You cannot manage a modern AI stack with static documents that are outdated the moment they are saved. Automated governance tools are now the standard for high-performing teams. These platforms provide real-time observability into model performance and compliance status. They detect bias as it happens and alert your team before a regulatory threshold is crossed. This shift from reactive to proactive management defines the winners in the current market.

Transparency is your most valuable asset during a procurement cycle. Enterprise buyers are increasingly demanding a "Model Fact Sheet" or a "Nutrition Label" for AI before they sign a contract. These labels must include details on training data, known limitations, and performance benchmarks across diverse demographics. Providing this data upfront accelerates the sales cycle and positions your company as a mature partner. Organizations that ignore this requirement will find themselves locked out of the lucrative enterprise and public sector markets, which now prioritize sovereign and documented AI solutions.

| Feature | Manual Governance | Automated Observability |

|---|---|---|

| Audit Frequency | Annual / Bi-annual | Continuous / Real-time |

| Bias Detection | Sample-based manual review | Algorithmic drift monitoring |

| Data Lineage | Static documentation | Automated tracking pipelines |

| Cost Profile | High per-event cost | Predictable subscription model |

Moving Beyond Box-Ticking

Compliance shouldn't be viewed as a burden. It is a fundamental component of product quality. In the same way that we expect software to be secure and bridges to be stable, we must expect AI to be transparent and accountable. Companies that lead with high standards today will build the foundations for the next decade of innovation. The current regulatory pressure is merely a catalyst for a more professional and reliable AI industry. You have the opportunity to define what excellence looks like in this new era.

Founders must choose their path carefully. You can wait for the regulators to knock on your door, or you can build a culture of safety that attracts premium clients and top-tier talent. The checklist gets you through the door, but the audit keeps the lights on. Invest in your governance stack as early as possible. Your future valuation depends on it.