Mastering the New Era of AI Cinematography

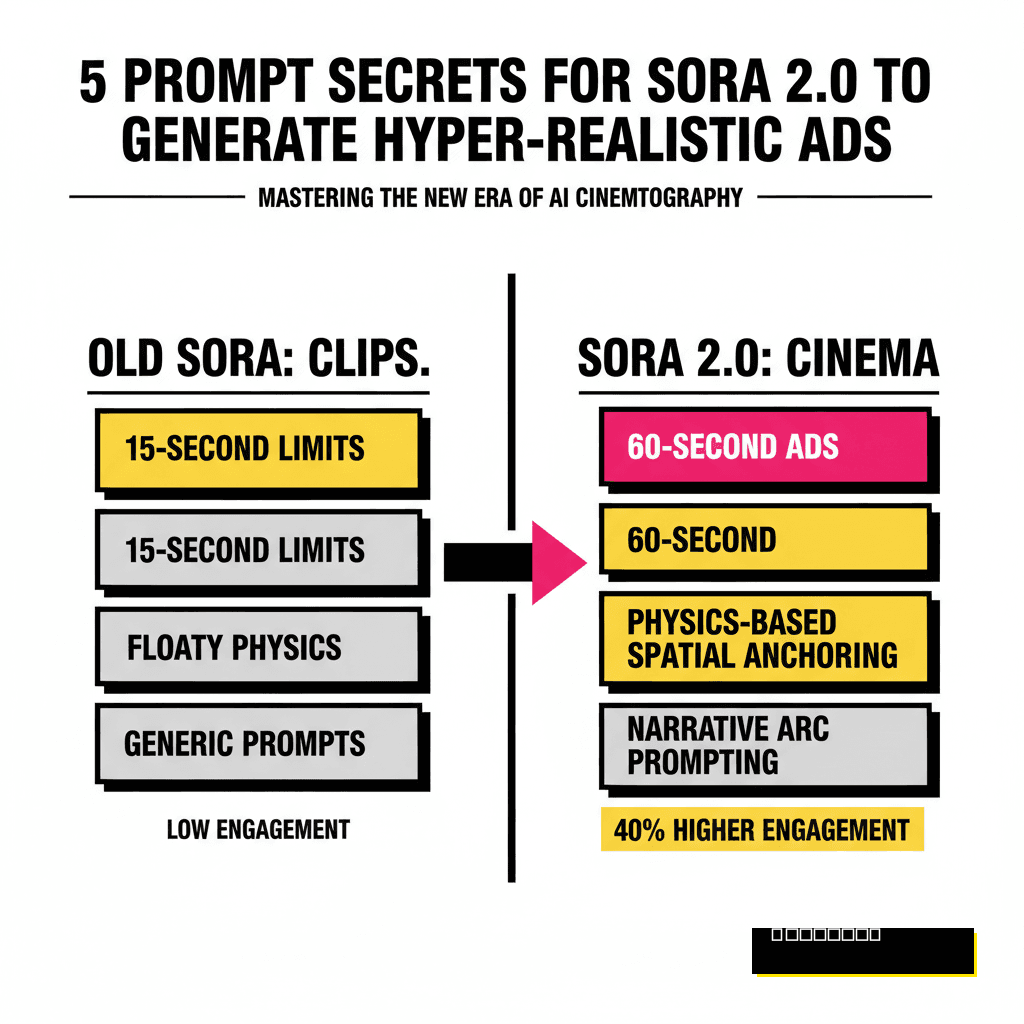

Creating high-impact video content used to require massive crews, weeks of post-production, and budgets that would make most founders wince. Since OpenAI released Sora 2.0 earlier this year, those barriers have dropped sharply. Most creators are still stuck in the 15-second clip mindset, but the real power lies in the full 60-second runtime. You've likely seen the viral ads circulating on social media that look indistinguishable from Super Bowl spots. Reaching that level of quality takes more than a basic description. It demands a technical understanding of how the model interprets physics, light, and narrative pacing.

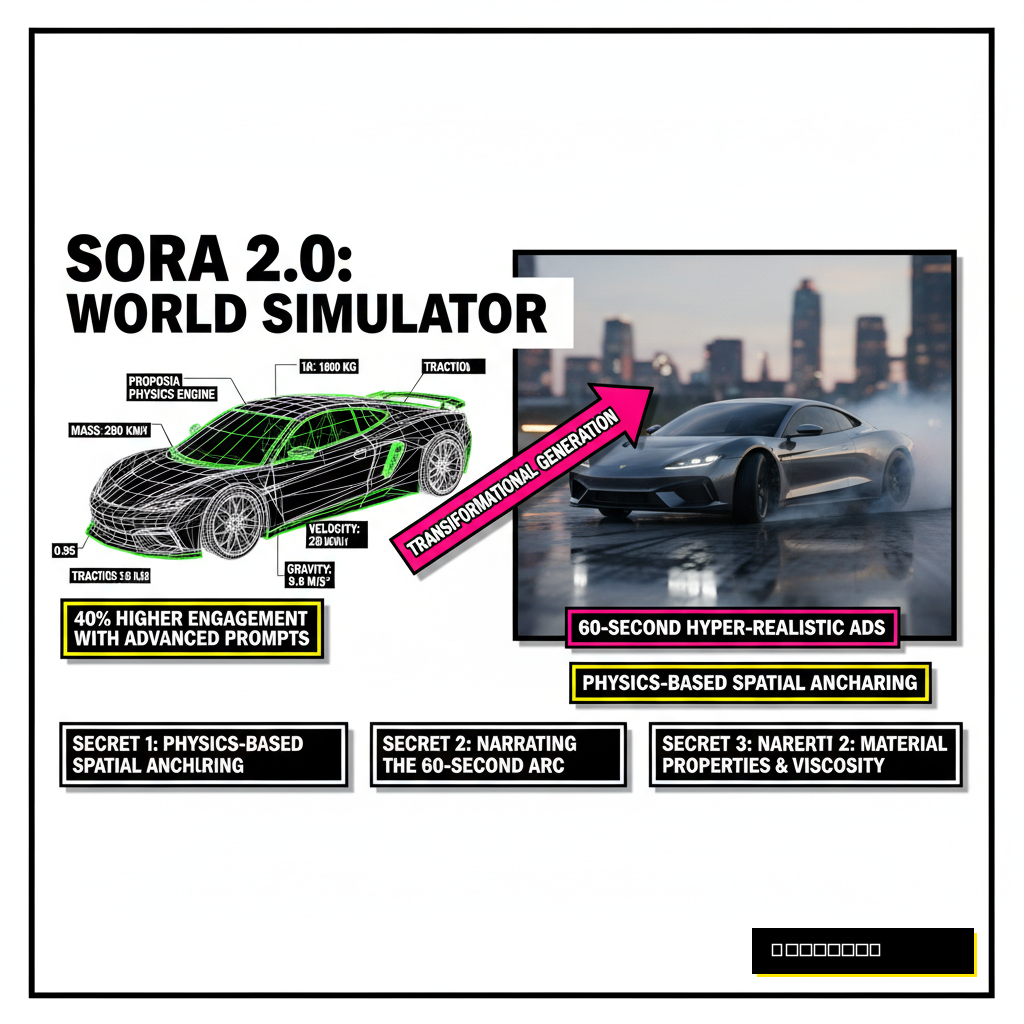

Sora 2.0 isn't just a video generator anymore. It functions as a world simulator. While previous versions struggled with hand consistency or gravity, the 2026 update introduced a dedicated physics engine that calculates mass and velocity in real time. Proposia users are already seeing 40 percent higher engagement rates when using these advanced prompting techniques compared to standard AI video outputs. If you want your brand to stand out, you need to move beyond simple aesthetic prompts and start thinking like a director who understands how the model simulates the physical world.

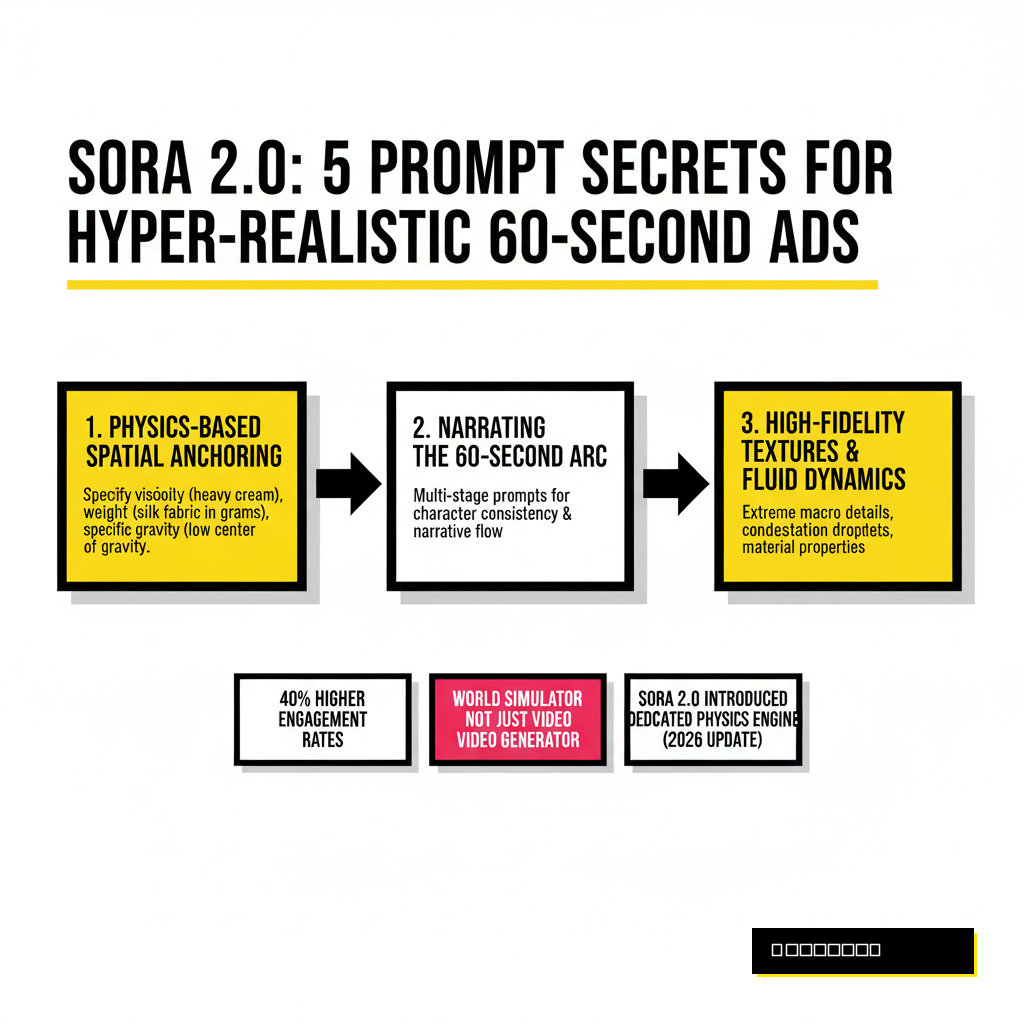

Secret 1: Physics-Based Spatial Anchoring

Fluid dynamics and weight were the biggest giveaways of AI video in 2025. You would see coffee pouring like mercury or clothes fluttering without wind. Sora 2.0 addresses this through spatial anchoring. Instead of describing only the action, describe the material properties of the objects involved. Tell the model that the liquid has the viscosity of heavy cream or that the silk fabric has a specific weight in grams. Anchoring the prompt in physical reality helps the model generate more consistent motion across a 60-second clip.

Experienced creators now put explicit physical cues in their prompts. Mentioning that a car has a low center of gravity during a high-speed turn helps prevent the sliding effect that plagued earlier versions. This technical approach keeps every frame feeling grounded. When you combine this with our Verified Human quality standards, the result is a piece of media that commands trust from your audience. People can sense when something feels off physically, even if they can't name the technical flaw.

Standard Prompting

- Generic action descriptions

- Visual-only focus

- High chance of "floaty" motion

- Lack of material depth

Spatial Anchoring

- Specific viscosity and weight

- Friction-based movement

- Consistent object permanence

- Realistic environmental interaction
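One way to keep material cues attached to every action is to assemble the prompt from small structured pieces. The sketch below is only an illustration: `Material` and `anchored_prompt` are hypothetical helper names, the output is plain text that you would paste into the prompt box, and nothing here calls or assumes any Sora API.

```python
from dataclasses import dataclass


@dataclass
class Material:
    """Physical description of one object in the scene (hypothetical helper)."""
    name: str
    properties: list[str]  # plain-language cues such as "viscosity of heavy cream"

    def describe(self) -> str:
        return f"{self.name} ({', '.join(self.properties)})"


def anchored_prompt(action: str, materials: list[Material]) -> str:
    """Append material descriptions to an action so the physical cues travel with it."""
    details = "; ".join(m.describe() for m in materials)
    return f"{action}. Materials: {details}."


prompt = anchored_prompt(
    "A barista pours cream into a glass of cold brew",
    [
        Material("cream", ["viscosity of heavy cream", "slow folding swirl"]),
        Material("silk apron", ["lightweight fabric", "moves only with the arm"]),
    ],
)
print(prompt)
```

Keeping materials in a list makes it easy to reuse the same physical descriptions across every shot in a campaign, which is the "spatial anchoring" column above expressed as data.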

Secret 2: Narrating the 60-Second Arc

Maintaining character consistency for a full minute is the holy grail of AI video. Sora 2.0 allows for multi-stage prompting where you define a narrative arc rather than a single scene. You should structure your prompt using a three-act format within the text block. Describe the lighting shift from the opening 10 seconds to the final 10 seconds. This prevents the model from drifting into different art styles as the video progresses. A common mistake is being too vague about the middle section, which leads to visual hallucinations or repetitive loops.

According to a 2026 report from Gartner, AI-generated video content that follows traditional cinematic pacing sees a 2.5x increase in viewer retention. You must instruct the model on the emotional beats. Use keywords like "tension build," "climactic reveal," and "resolution." This gives the neural network a roadmap for the temporal coherence it needs to maintain. By treating the prompt as a screenplay rather than a caption, you unlock the ability to tell complex stories that resonate with human viewers.
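The three-act structure can be drafted as timestamped beats before it goes into the text block. This is a minimal sketch under stated assumptions: `Beat` and `arc_prompt` are hypothetical names, the bracketed timestamp format is just one readable convention rather than documented Sora syntax, and the duration check exists only to catch a vague or missing middle section early.

```python
from dataclasses import dataclass


@dataclass
class Beat:
    label: str        # emotional beat, e.g. "tension build"
    seconds: int      # how long this beat lasts
    description: str  # what happens and how the lighting looks


def arc_prompt(beats: list[Beat], total_seconds: int = 60) -> str:
    """Lay beats end to end as timestamped lines; fail if they don't fill the runtime."""
    if sum(b.seconds for b in beats) != total_seconds:
        raise ValueError("beat durations must add up to the total runtime")
    lines, start = [], 0
    for beat in beats:
        end = start + beat.seconds
        lines.append(f"[{start:02d}s-{end:02d}s] {beat.label}: {beat.description}")
        start = end
    return "\n".join(lines)


script = arc_prompt([
    Beat("tension build", 10, "cold blue dawn light, runner laces her shoes"),
    Beat("climactic reveal", 40, "light warms steadily as she crests the hill"),
    Beat("resolution", 10, "full golden light, product held in close-up"),
])
print(script)
```

Writing the lighting into each beat, as in the example, spells out the shift from the opening 10 seconds to the final 10 seconds instead of leaving it to the model.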

Secret 3: The Director's Lens Metadata Layer

High-end commercials aren't just about what is happening. They are about how the camera sees it. Sora 2.0 responds incredibly well to technical cinematography terms. If you want that premium look, stop relying on words like "cinematic" or "epic." Those are too subjective. Instead, specify the lens type, the aperture (as an f-stop or T-stop), and the camera movement. Mentioning a 35mm anamorphic lens with a T2.0 aperture tells the model how much bokeh and light streaking to include. This level of detail steers the AI toward simulating a specific optical path.

Comparing this workflow to other tools like Firefly 4 or Midjourney v7 shows that Sora 2.0 has the most advanced understanding of camera optics. You can even prompt for specific film stocks. Requesting the color science of Kodak Vision3 500T will give your ad a gritty, professional warmth that looks like it was shot on 35mm film. This metadata layer is what separates amateur AI clips from professional brand assets.

| Camera Instruction | Visual Effect | Best Use Case |
|---|---|---|
| 70mm IMAX, 1.43:1 | Massive scale, extreme clarity | Landscape and architecture ads |
| Handheld, 16mm Grain | Documentary feel, raw energy | Lifestyle and street fashion |
| Macro, 100mm, f/2.8 | Razor-sharp detail, creamy bokeh | Tech hardware and jewelry |
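The camera table can double as a lookup that gets appended to a scene description, with a simple check that flags subjective words. The sketch below is illustrative only: the preset keys, `find_vague_words`, and `with_camera` are hypothetical names, and the "Camera:" and "Film stock:" labels are a formatting assumption rather than anything Sora is documented to require.

```python
import re
from typing import Optional

# Camera instructions taken from the table above; the keys are made up for this sketch.
CAMERA_PRESETS = {
    "landscape": "70mm IMAX, 1.43:1",
    "lifestyle": "handheld, 16mm grain",
    "product_macro": "macro, 100mm, f/2.8",
}
VAGUE_WORDS = {"cinematic", "epic"}


def find_vague_words(text: str) -> list[str]:
    """Return any subjective terms found in the text, sorted alphabetically."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return sorted(words & VAGUE_WORDS)


def with_camera(scene: str, preset: str, film_stock: Optional[str] = None) -> str:
    """Append a concrete camera spec (and optional film stock) to a scene description."""
    vague = find_vague_words(scene)
    if vague:
        raise ValueError(f"replace subjective terms with camera specs: {', '.join(vague)}")
    parts = [scene.rstrip("."), f"Camera: {CAMERA_PRESETS[preset]}"]
    if film_stock:
        parts.append(f"Film stock: {film_stock}")
    return ". ".join(parts) + "."


shot = with_camera("A watch rotates on black slate", "product_macro", "Kodak Vision3 500T")
print(shot)
```

Rejecting "cinematic" and "epic" outright is a deliberately strict choice for the sketch; a team could instead log a warning and let the prompt through.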

Secret 4: Multi-Modal Audio-Visual Syncing

Sound is half the experience in advertising. Sora 2.0 now includes a native audio generation layer that syncs perfectly with the visual frame rate. To get the best results, your prompt needs to describe the soundscape as vividly as the imagery. Use onomatopoeia and descriptions of acoustic environments. Mention the reverb of a concrete warehouse or the muffled sounds of an underwater shot. When the AI understands the environment, it generates Foley sounds that match the footsteps or product interactions on screen.

Recent industry data highlights why this matters. Advertisements with accurate AI-generated soundscapes see a 30 percent higher conversion rate on mobile platforms. Creators often forget that Sora 2.0 calculates the distance between the camera and the sound source. Including phrases like "spatially accurate audio" helps ensure that as a car zooms past the camera, the sound pans across the stereo field naturally. This immersion is vital for holding attention throughout a 60-second spot.
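A soundscape description tends to read best when the acoustic space comes first and each sound is tied to something visible on screen. The following is a rough sketch with a hypothetical `soundscape` helper; the sentence template is an assumption about what reads clearly, not a documented audio syntax.

```python
def soundscape(environment: str, cues: list[tuple[str, str]]) -> str:
    """Describe the acoustic space first, then pair each on-screen action with its sound."""
    lines = [f"Audio environment: {environment}."]
    for action, sound in cues:
        lines.append(f"When {action}, we hear {sound}.")
    return " ".join(lines)


audio = soundscape(
    "empty concrete warehouse with long reverb",
    [
        ("boots cross the floor", "sharp, echoing footsteps"),
        ("the car passes the camera", "spatially accurate engine roar panning left to right"),
    ],
)
print(audio)
```

Pairing every cue with an on-screen action keeps the Foley request tied to something the model is already rendering.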

Secret 5: Contextual Lighting and Global Illumination

Lighting is the final frontier of realism. Sora 2.0 uses a sophisticated global illumination model that mimics how light bounces off real-world surfaces. To tap into this, you should prompt for the time of day and the specific weather conditions. Mentioning "Golden hour, 15 minutes before sunset, 15% cloud cover" gives the model precise data for the color temperature and shadow softness. This is far more effective than just asking for "good lighting."

Rendering terminology works wonders here. Requesting "subsurface scattering" for skin or "specular highlights" for metallic surfaces will drastically improve the texture fidelity. These small details are what convince the human eye that the scene is real. As we move further into 2026, the ability to manipulate light with this level of precision will become the standard for all high-end digital marketing. Brands that master these lighting prompts now will have a significant advantage in visual storytelling.
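The lighting recipe above (time of day, minutes before sunset, cloud cover, plus surface terms) can be captured as a small validated record so that no field gets left out. This is a sketch only: the `Lighting` class and its field names are hypothetical, and the output is ordinary prompt text.

```python
from dataclasses import dataclass, field


@dataclass
class Lighting:
    time_of_day: str            # e.g. "Golden hour"
    minutes_before_sunset: int
    cloud_cover_pct: int        # 0 to 100
    surface_terms: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not 0 <= self.cloud_cover_pct <= 100:
            raise ValueError("cloud cover must be between 0 and 100 percent")
        if self.minutes_before_sunset < 0:
            raise ValueError("minutes before sunset cannot be negative")

    def describe(self) -> str:
        """Render the lighting conditions, then any per-surface rendering cues."""
        text = (
            f"{self.time_of_day}, {self.minutes_before_sunset} minutes before sunset, "
            f"{self.cloud_cover_pct}% cloud cover"
        )
        if self.surface_terms:
            text += ". Surfaces: " + ", ".join(self.surface_terms)
        return text + "."


lighting = Lighting(
    "Golden hour", 15, 15,
    ["subsurface scattering on skin", "specular highlights on the steel watch case"],
)
print(lighting.describe())
```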

Pushing the Boundaries of Sora 2.0

Modern advertising moves fast, and staying ahead of the curve requires constant experimentation. The transition from short clips to 60-second cinematic masterpieces is a massive shift for the industry. By focusing on physics, narrative structure, technical cinematography, audio syncing, and light simulation, you can produce work that rivals traditional agencies. Sora 2.0 is a tool, but your ability to communicate with it determines the quality of the final output. The future of creative work isn't about the buttons you press, but the technical vision you bring to the prompt box. Start applying these secrets today and watch your production value skyrocket.