Mastering the New Crawler Protocol

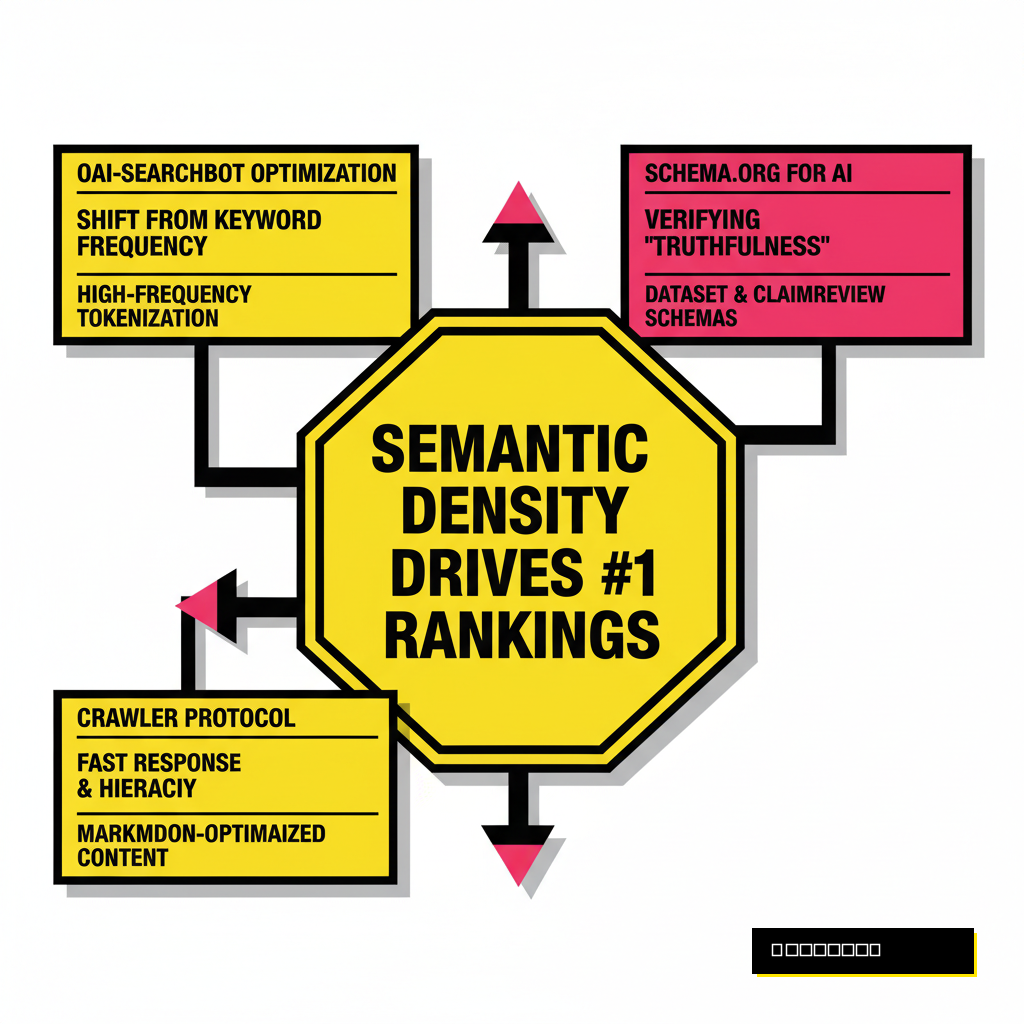

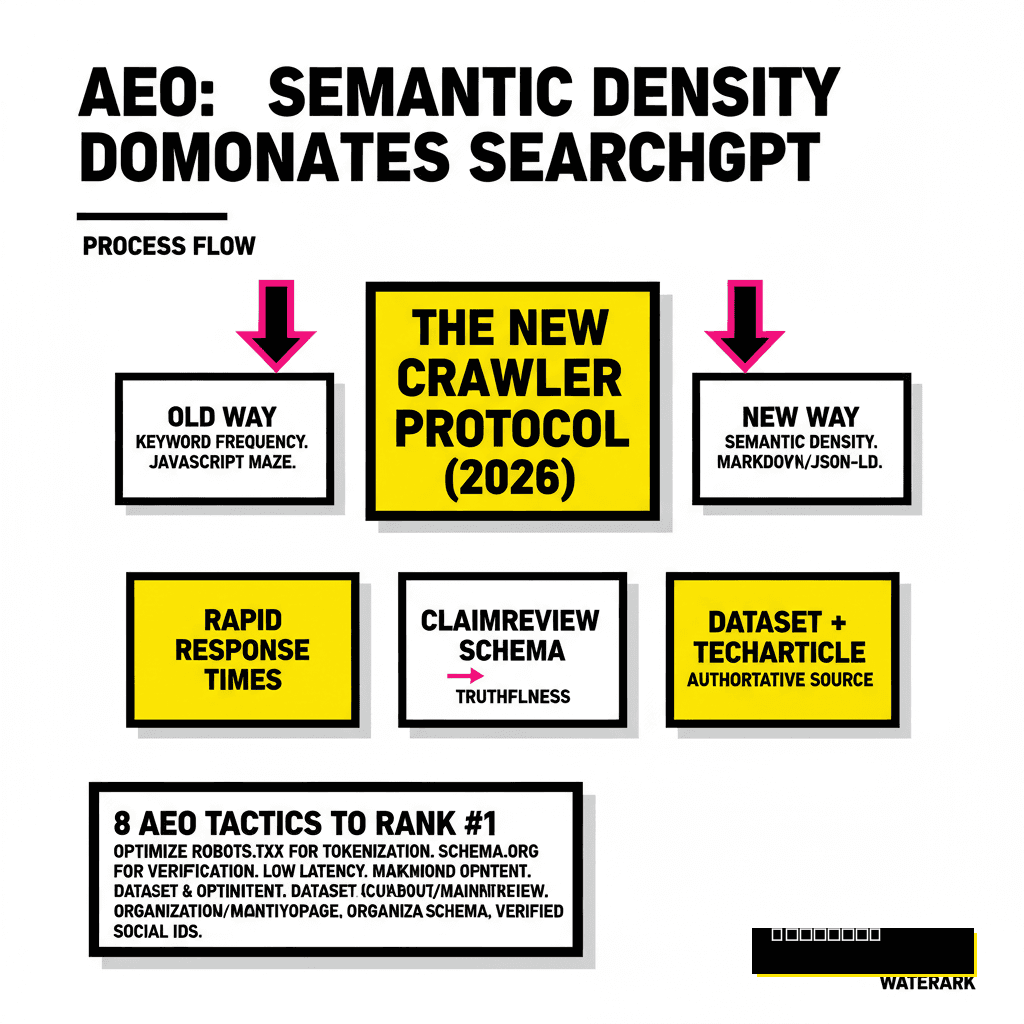

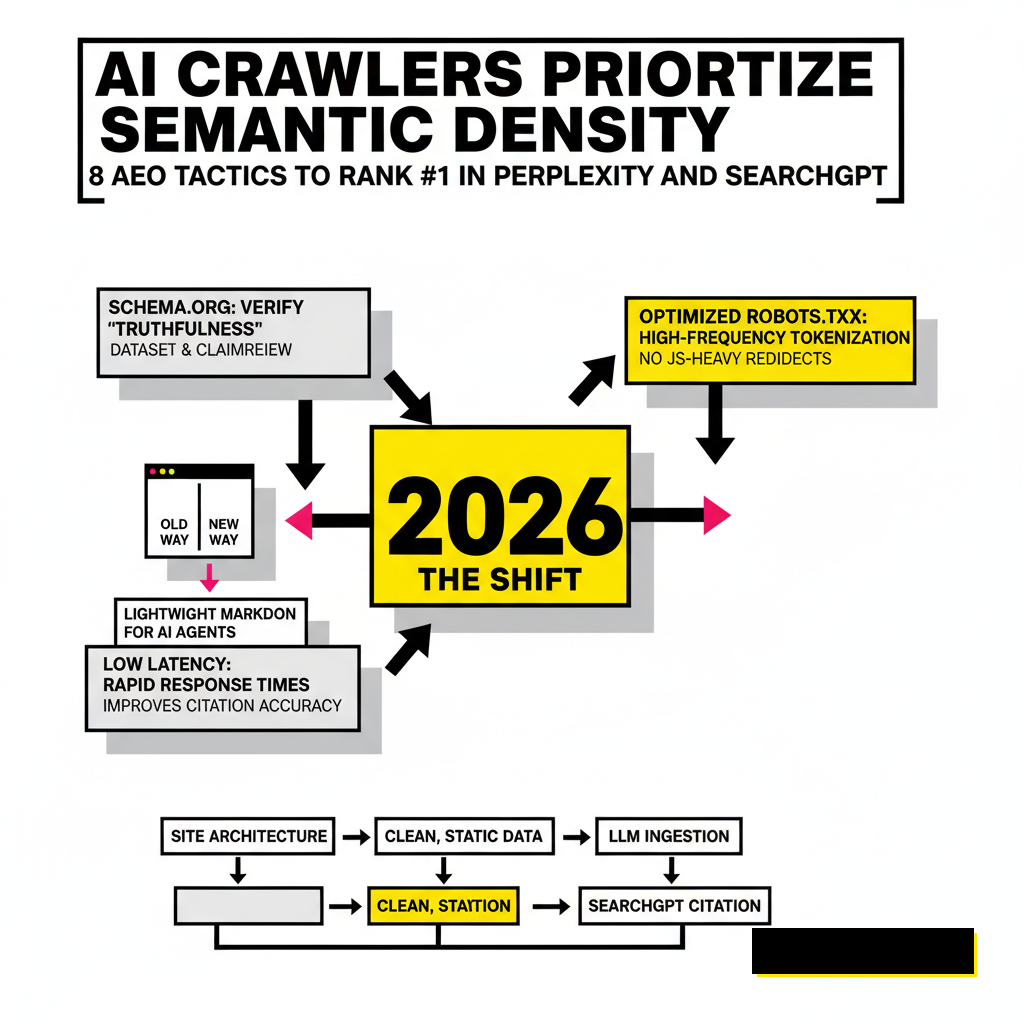

Search engines no longer just index pages; they ingest knowledge. For developers, the shift from traditional Googlebot optimization to managing OAI-SearchBot and PerplexityBot is the most significant technical hurdle of 2026. These crawlers prioritize semantic density over keyword frequency. Your robots.txt should not merely leave the site open; it should name these user agents explicitly and steer them toward the content you most want ingested. If your site architecture is a maze of JavaScript-heavy redirects, these bots will likely skip your core content in favor of cleaner, static data sources.
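One way to confirm that a robots.txt file treats OAI-SearchBot and PerplexityBot the way you intend is to test it with Python's standard `urllib.robotparser`. This is a minimal sketch: the rules and the `/docs/` and `/internal/` paths are hypothetical, not a recommended policy.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative only.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /docs/
Disallow: /internal/

User-agent: PerplexityBot
Allow: /docs/
Disallow: /internal/

User-agent: *
Disallow: /internal/
"""


def crawl_permissions(robots_txt, agents, paths):
    """Return {(agent, path): allowed} for every agent/path combination."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, path): parser.can_fetch(agent, path)
        for agent in agents
        for path in paths
    }


permissions = crawl_permissions(
    ROBOTS_TXT,
    agents=["OAI-SearchBot", "PerplexityBot"],
    paths=["/docs/quickstart", "/internal/admin"],
)
for (agent, path), allowed in permissions.items():
    print(f"{agent:15} {path:18} {'allowed' if allowed else 'blocked'}")
```

Running a check like this in CI catches the case where a later robots.txt edit accidentally blocks a crawler you meant to admit.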

OpenAI's latest documentation for OAI-SearchBot emphasizes the need for rapid response times and clear content hierarchies. High latency during a crawl can lead to incomplete data ingestion, which directly impacts how SearchGPT synthesizes your site’s information. You should monitor your server logs to identify when these bots are hitting your API endpoints versus your HTML pages. Proposia users often find that serving a lightweight, markdown-optimized version of their content specifically for AI agents significantly improves citation accuracy.
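The log monitoring described above can be sketched as a small script. It assumes a combined-format access log and an `/api/` prefix for API routes; the sample log lines, IP addresses, and user-agent strings are synthetic, so adjust the pattern to what your server actually records.

```python
import re
from collections import Counter

AI_BOTS = ("OAI-SearchBot", "PerplexityBot")

# Captures the request path and the user agent from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def classify_bot_hits(lines, api_prefix="/api/"):
    """Count hits per (bot, 'api' or 'html'); traffic from other agents is ignored."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        bot = next((b for b in AI_BOTS if b in match["agent"]), None)
        if bot is None:
            continue
        kind = "api" if match["path"].startswith(api_prefix) else "html"
        counts[(bot, kind)] += 1
    return counts


# Synthetic sample lines for illustration.
SAMPLE_LOG = [
    '203.0.113.7 - - [12/Jan/2026:10:01:22 +0000] "GET /api/v1/pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.7 - - [12/Jan/2026:10:01:25 +0000] "GET /docs/quickstart HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '198.51.100.4 - - [12/Jan/2026:10:02:03 +0000] "GET /docs/quickstart HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.15 - - [12/Jan/2026:10:02:40 +0000] "GET /docs/quickstart HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = classify_bot_hits(SAMPLE_LOG)
for (bot, kind), count in sorted(hits.items()):
    print(f"{bot:15} {kind:5} {count}")
```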

Schema Markup for Generative Intelligence

Structured data has evolved far beyond simple breadcrumbs or product ratings. In 2026, AI engines use Schema.org attributes to verify the "truthfulness" of a claim. Developers should implement the Dataset and ClaimReview schemas to reduce the chance that LLMs hallucinate your technical specs. When SearchGPT looks for a specific data point, it cross-references the JSON-LD on your page against what the model learned in training. Discrepancies here can cost you the ranking entirely.

Specific properties like knowsAbout and mainEntityOfPage help establish your site as an authoritative source for niche topics. If you're building tools for creators, integrating these tags is as vital as the code itself. We've seen a strong correlation between high-fidelity schema and appearing in the "Sources" panel in Perplexity Pro. Ensuring your Organization schema includes verified social identifiers and developer documentation links provides a secondary layer of trust that modern AI models crave.
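As a rough illustration of the properties named above, the following sketch builds a TechArticle JSON-LD object whose publisher is an Organization carrying `knowsAbout` and `sameAs`. The helper name, the organization, the topics, and every URL are placeholders rather than values from a real site.

```python
import json


def tech_article_jsonld(headline, page_url, org_name, org_url, topics, profiles):
    """Build a Schema.org TechArticle with an Organization publisher as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "TechArticle",
        "headline": headline,
        "mainEntityOfPage": {"@type": "WebPage", "@id": page_url},
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            "url": org_url,
            "knowsAbout": topics,   # niche topics the organization covers
            "sameAs": profiles,     # verified social or code-hosting profiles
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration.
markup = tech_article_jsonld(
    headline="Rate limiting in the Example API",
    page_url="https://example.com/docs/rate-limits",
    org_name="Example Inc.",
    org_url="https://example.com",
    topics=["API rate limiting", "developer tooling"],
    profiles=["https://github.com/example"],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from one function keeps the JSON-LD consistent across pages, which matters if engines compare it with the visible content.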

| Schema Type | AEO Purpose | Impact on SearchGPT |
|---|---|---|
| Speakable | Audio/Voice Synthesis | High for Voice-based Queries |
| Dataset | Raw Data Retrieval | Essential for Analytical Prompts |
| TechArticle | Logic & Functionality | Primary Source for Dev Queries |

The Mechanics of Citation Velocity

Citation velocity refers to the speed and frequency at which your brand or domain is cited across different LLM sessions. Unlike backlinks, which are static, citations in Perplexity and SearchGPT are dynamic and context-dependent. Modern AI engines track how often users click through to your source after a summary is generated. A high click-through rate (CTR) from the AI interface signals to the model that your content provided the necessary depth the summary lacked.

According to recent industry data from early 2026, domains with a citation velocity increase of 20% month-over-month see a corresponding 15% rise in "top-of-summary" placements. You can influence this by creating "citation-bait"—highly specific, data-heavy sentences that are easy for an LLM to extract. Avoid fluff. Instead, provide the exact numbers or steps an AI would need to answer a user's complex prompt. This strategy is particularly effective when combined with multimodal support automation, where clear documentation serves both human users and AI agents.
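If you track citations yourself, the month-over-month figure is simple arithmetic. The sketch below assumes you already have monthly citation counts from your own analytics; the counts shown are made up for illustration.

```python
def month_over_month_change(previous, current):
    """Percentage change in citation count from one month to the next."""
    if previous <= 0:
        raise ValueError("previous month's count must be positive")
    return (current - previous) * 100.0 / previous


# Hypothetical monthly citation counts, keyed by month.
monthly_citations = {"2026-01": 150, "2026-02": 180, "2026-03": 171}

months = sorted(monthly_citations)
velocity = {
    later: month_over_month_change(monthly_citations[earlier], monthly_citations[later])
    for earlier, later in zip(months, months[1:])
}
for month, change in velocity.items():
    print(f"{month}: {change:+.1f}%")
```

With these sample counts, February shows a 20% increase over January and March a 5% decline from February.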

Building for Retrieval-Augmented Generation

Retrieval-Augmented Generation (RAG) is the backbone of SearchGPT. When a user asks a question, the engine searches the web, finds relevant chunks of text, and feeds them into the prompt. Your goal is to make your content easy to "chunk." Long, rambling paragraphs are difficult for RAG systems to vectorize accurately. Breaking your content into clear, thematic blocks with H3 headers allows the vector database to store your information with higher semantic precision.
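To see what header-based chunking looks like from the retrieval side, here is a minimal sketch that splits a markdown document at its H3 headings. Real RAG pipelines use their own splitters, so treat this as an approximation of the idea, not a description of how SearchGPT chunks pages.

```python
import re


def chunk_by_h3(markdown):
    """Split markdown into one chunk per '### ' heading, keeping any intro text."""
    chunks = []
    title, body = None, []

    def flush():
        # Emit the current chunk if it has a heading or any non-blank text.
        if title is not None or any(line.strip() for line in body):
            chunks.append({"title": title or "", "text": "\n".join(body).strip()})

    for line in markdown.splitlines():
        match = re.match(r"^###\s+(.*)", line)
        if match:
            flush()
            title, body = match.group(1).strip(), []
        else:
            body.append(line)
    flush()
    return chunks


DOC = """\
Intro text before any heading.

### Install
Run the installer.

### Configure
Set the API key.
Restart the service.
"""

chunks = chunk_by_h3(DOC)
for chunk in chunks:
    print(repr(chunk["title"]), "->", len(chunk["text"]), "characters")
```

Each chunk carries its heading as a title, so a downstream embedding step can store the topic alongside the text.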

Developers should also consider providing a /.well-known/ai-plugin.json or similar manifest even if they aren't building a full plugin. This file can point AI engines toward a dedicated API for real-time data. Perplexity's Publishers Program has shown that sites offering direct data feeds receive preferential treatment in complex reasoning tasks. By providing a structured endpoint, you bypass the inaccuracies of standard web scraping.
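A manifest of this kind could be generated as follows. The field names are modeled on the original ChatGPT plugin manifest format, which has since been retired, so whether any current engine reads such a file is an assumption; the helper name, service name, URLs, and email are all placeholders.

```python
import json


def build_manifest(name, description, openapi_url, contact_email):
    """Return a plugin-style manifest as a JSON string.

    Field names follow the retired ChatGPT plugin manifest; check them
    against whatever specification your target engine publishes.
    """
    manifest = {
        "schema_version": "v1",
        "name_for_human": name,
        "name_for_model": name.lower().replace(" ", "_"),
        "description_for_human": description,
        "description_for_model": description,
        "auth": {"type": "none"},
        "api": {"type": "openapi", "url": openapi_url},
        "contact_email": contact_email,
    }
    return json.dumps(manifest, indent=2)


# Placeholder values; the file would be served at /.well-known/ai-plugin.json.
manifest_json = build_manifest(
    name="Example Data",
    description="Real-time pricing and availability data for Example Inc.",
    openapi_url="https://example.com/openapi.yaml",
    contact_email="dev@example.com",
)
print(manifest_json)
```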

Factuality as a Ranking Factor

Hallucination is the enemy of OpenAI and Perplexity. Consequently, these engines have built-in "truth scores" for domains. If your site consistently provides data that contradicts the consensus of high-authority sources like Wikipedia, PubMed, or official government databases, your AEO rankings will plummet. You must cite your sources within your own content. This creates a verification chain that AI models can easily follow.

Using a tool like Credo AI for compliance can help ensure your data meets the rigorous standards of the EU AI Act, which many search engines now use as a benchmark for source reliability. Your technical content should avoid speculative language. Use phrases like "Our data indicates" or "According to the X specification" rather than "We believe" or "It might be." This directness helps the LLM's reward model identify your content as factual rather than opinionated.

Low-Factuality Content

- Uses subjective adjectives
- Lacks specific data points
- No external source verification
- Vague technical descriptions

High-Factuality Content

- Includes hard metrics and dates
- Links to primary documentation
- Uses standard industry nomenclature
- Provides clear, step-by-step logic
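The guidance on speculative language can be turned into a simple pre-publish check. This sketch only looks for the two phrases quoted earlier ("We believe" and "It might be"); the phrase list and function name are illustrative, and plain substring matching will miss rewordings.

```python
# Phrases taken from the guidance above; extend the tuple for your own style guide.
SPECULATIVE_PHRASES = ("we believe", "it might be")


def find_speculative_phrases(text):
    """Return (line_number, phrase) for each speculative phrase, case-insensitively."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        lowered = line.lower()
        for phrase in SPECULATIVE_PHRASES:
            if phrase in lowered:
                findings.append((number, phrase))
    return findings


DRAFT = """\
We believe the new cache is faster.
According to the HTTP/2 specification, streams are multiplexed.
It might be worth upgrading.
"""

findings = find_speculative_phrases(DRAFT)
for number, phrase in findings:
    print(f"line {number}: found '{phrase}'")
```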

Conversational Intent Mapping

Keyword research is dead; prompt research is the new standard. People don't search for "best laptop 2026" in SearchGPT. They ask, "I'm a developer who compiles large C++ projects and travels frequently, what's the best laptop for me?" Your content must answer these multi-layered queries. This requires a shift in how you structure your H2s and H3s. Instead of targeting short-tail keywords, target the specific problems and constraints your audience faces.

Analyze your internal site search data to see the full sentences users are typing. This is a goldmine for AEO. If you find users asking "How do I integrate Proposia with my existing CI/CD?", that exact question should be a header in your documentation. By mirroring the natural language of your users, you increase the likelihood that an LLM will select your text as the definitive answer for a similar prompt. We call this "Prompt-Response Alignment."
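Mining site search data for full-sentence questions might look like the sketch below. It assumes you can export raw queries as a list of strings; the question-word list, the thresholds, and the sample queries are illustrative choices rather than established rules.

```python
from collections import Counter

QUESTION_WORDS = ("how", "what", "why", "when", "where", "which", "can", "does", "is")


def question_headers(queries, min_words=4, min_count=2):
    """Return (question, count) pairs for repeated full-sentence questions."""
    counts = Counter()
    first_seen = {}
    for query in queries:
        text = " ".join(query.split()).rstrip("?").strip()
        words = text.lower().split()
        if len(words) < min_words or words[0] not in QUESTION_WORDS:
            continue
        key = text.lower()
        counts[key] += 1
        # Keep the first spelling seen so product names retain their casing.
        first_seen.setdefault(key, text)
    return [
        (first_seen[key] + "?", count)
        for key, count in counts.most_common()
        if count >= min_count
    ]


# Hypothetical export of internal site search queries.
SEARCH_LOG = [
    "How do I integrate Proposia with my existing CI/CD?",
    "how do i integrate proposia with my existing ci/cd",
    "pricing",
    "What is the rate limit for the API?",
    "How do I integrate Proposia with my existing CI/CD?",
]

headers = question_headers(SEARCH_LOG)
for question, count in headers:
    print(f"{count}x  {question}")
```

Each returned question is a candidate header for the documentation page that answers it.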

Influence Through Fine-Tuning Data Sets

While you cannot directly edit an LLM, you can influence the datasets used for its next fine-tuning cycle. Major AI labs use Common Crawl, GitHub, and specialized industry forums to retrain their models. Ensuring your brand is mentioned positively in these "neighborhoods" is a long-term AEO play. If your open-source libraries are widely used and discussed on Stack Overflow or Reddit, the model's internal weights will naturally associate your brand with authority in that space.

Monitor your presence in the "RefinedWeb" or "C4" datasets if you have the technical resources. Seeing how your site is represented in these massive crawls allows you to adjust your content strategy. If you notice a model consistently misrepresenting your API's capabilities, it usually stems from outdated documentation lingering in the training set. Pruning old content and using 301 redirects to point to updated, high-quality information ensures that the next time the model is updated, it picks up the correct data.
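When pruning old documentation, it helps to make sure every retired URL redirects straight to its final destination instead of hopping through intermediate pages. The following sketch collapses redirect chains in a simple mapping; the paths are hypothetical, and turning the result into actual 301 rules depends on your web server.

```python
def collapse_redirects(redirects):
    """Map every old path directly to its final destination, rejecting loops."""
    resolved = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until the target is no longer itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        resolved[source] = target
    return resolved


# Hypothetical redirect map containing a two-hop chain.
REDIRECTS = {
    "/docs/v1/auth": "/docs/v2/auth",
    "/docs/v2/auth": "/docs/auth",
    "/docs/v1/limits": "/docs/limits",
}

flattened = collapse_redirects(REDIRECTS)
for old, new in flattened.items():
    print(f"301 {old} -> {new}")
```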

Multimodal AEO for 2026

Search is no longer text-only. SearchGPT and Perplexity now integrate video, images, and even code execution results directly into the chat interface. Optimizing for this requires a multimodal approach. Your images should have descriptive, context-rich alt text that explains the *concept* of the image, not just the content. Instead of "chart showing growth," use "line graph demonstrating a 30% increase in API throughput over Q3 2026."
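A quick audit can flag images whose alt text is missing or too thin to explain a concept. This sketch uses Python's standard `html.parser`; the five-word minimum is an arbitrary heuristic chosen for illustration, and word count is only a proxy for descriptive quality.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collect <img> tags whose alt text is missing or shorter than min_words."""

    def __init__(self, min_words=5):
        super().__init__()
        self.min_words = min_words
        self.flagged = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = (attributes.get("alt") or "").strip()
        if len(alt.split()) < self.min_words:
            self.flagged.append((attributes.get("src", ""), alt))


PAGE = """
<img src="growth.png" alt="chart showing growth">
<img src="throughput.png" alt="line graph demonstrating a 30% increase in API throughput over Q3 2026">
<img src="logo.png">
"""

audit = AltTextAudit()
audit.feed(PAGE)
for src, alt in audit.flagged:
    print(f"{src}: alt text needs more context ({alt!r})")
```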

Video content is equally important. AI engines now "watch" videos by transcribing audio and analyzing keyframes. Providing a full, time-stamped transcript and high-quality metadata for your video tutorials allows SearchGPT to feature your video as a primary source for "how-to" queries. This is the new frontier of AEO. If you aren't optimizing your visual assets for machine vision, you're leaving a massive portion of the traffic on the table. Focus on clarity and high-contrast visuals that are easy for an AI to parse.
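One common way to publish a time-stamped transcript is a WebVTT file served alongside the video. The sketch below renders transcript segments in that format, assuming you already have start and end times in seconds for each segment; the sample segments are invented.

```python
def format_timestamp(seconds):
    """Format a time in seconds as a WebVTT timestamp (HH:MM:SS.mmm)."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{millis:03d}"


def to_webvtt(segments):
    """Render (start_seconds, end_seconds, text) segments as a WebVTT document."""
    lines = ["WEBVTT", ""]
    for start, end, text in segments:
        lines.append(f"{format_timestamp(start)} --> {format_timestamp(end)}")
        lines.append(text)
        lines.append("")  # blank line terminates each cue
    return "\n".join(lines)


# Invented transcript segments for illustration.
SEGMENTS = [
    (0, 4.2, "Install the CLI."),
    (4.2, 75.5, "Authenticate with your API key."),
]

vtt = to_webvtt(SEGMENTS)
print(vtt)
```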