The Shift Toward Multimodal Customer Support

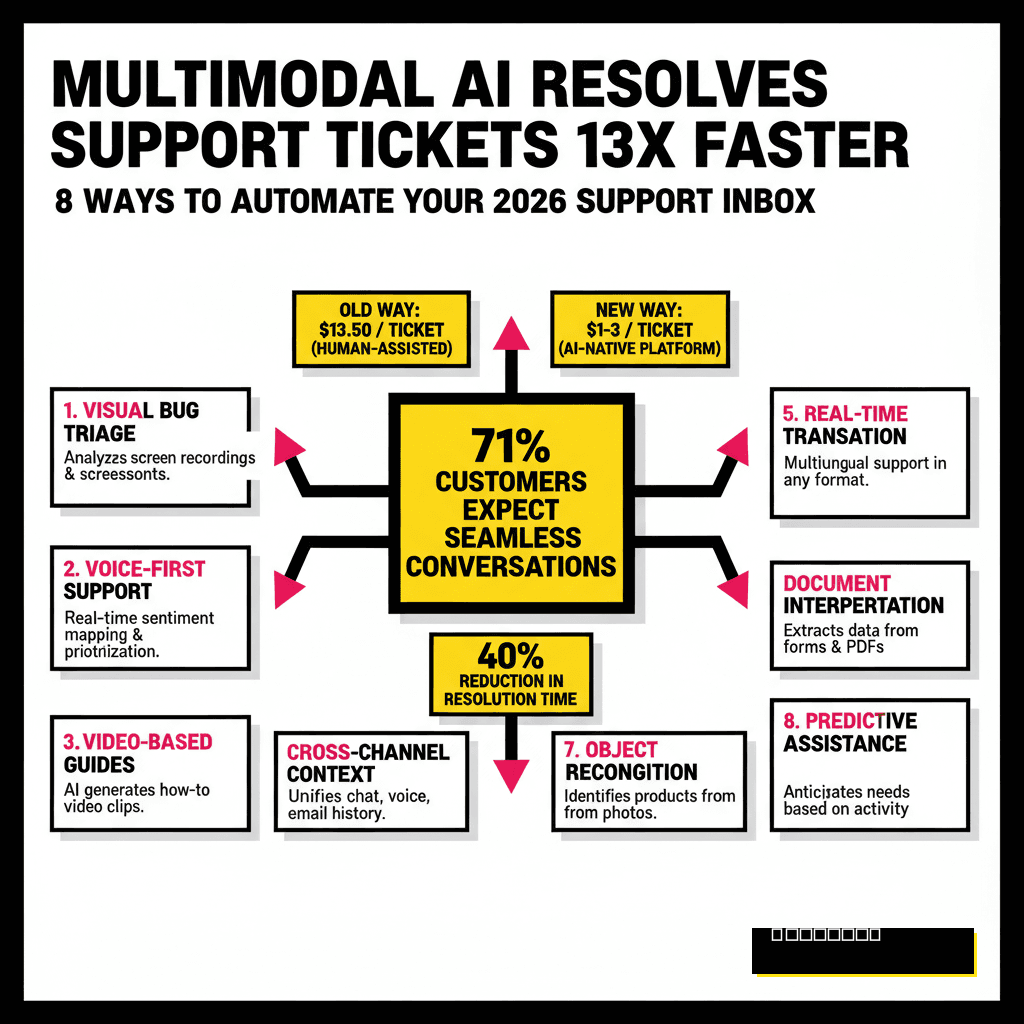

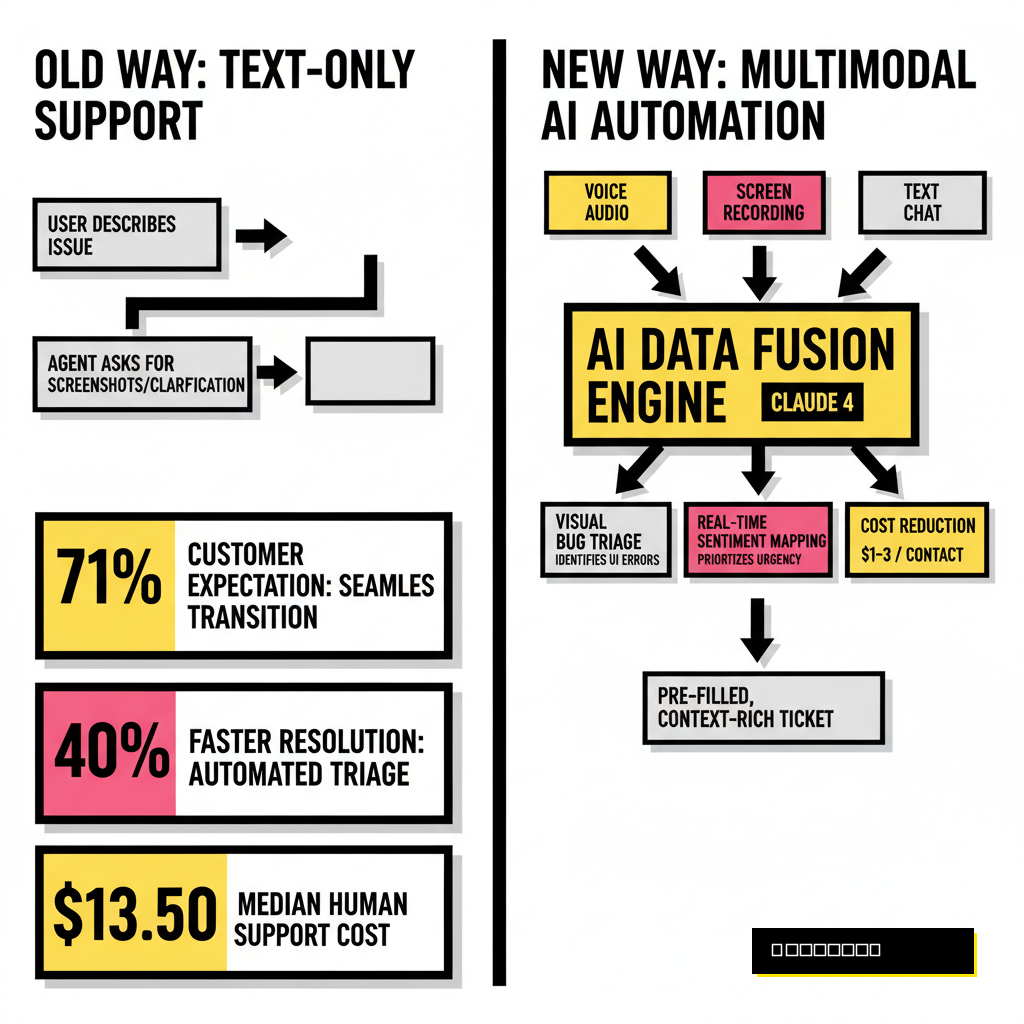

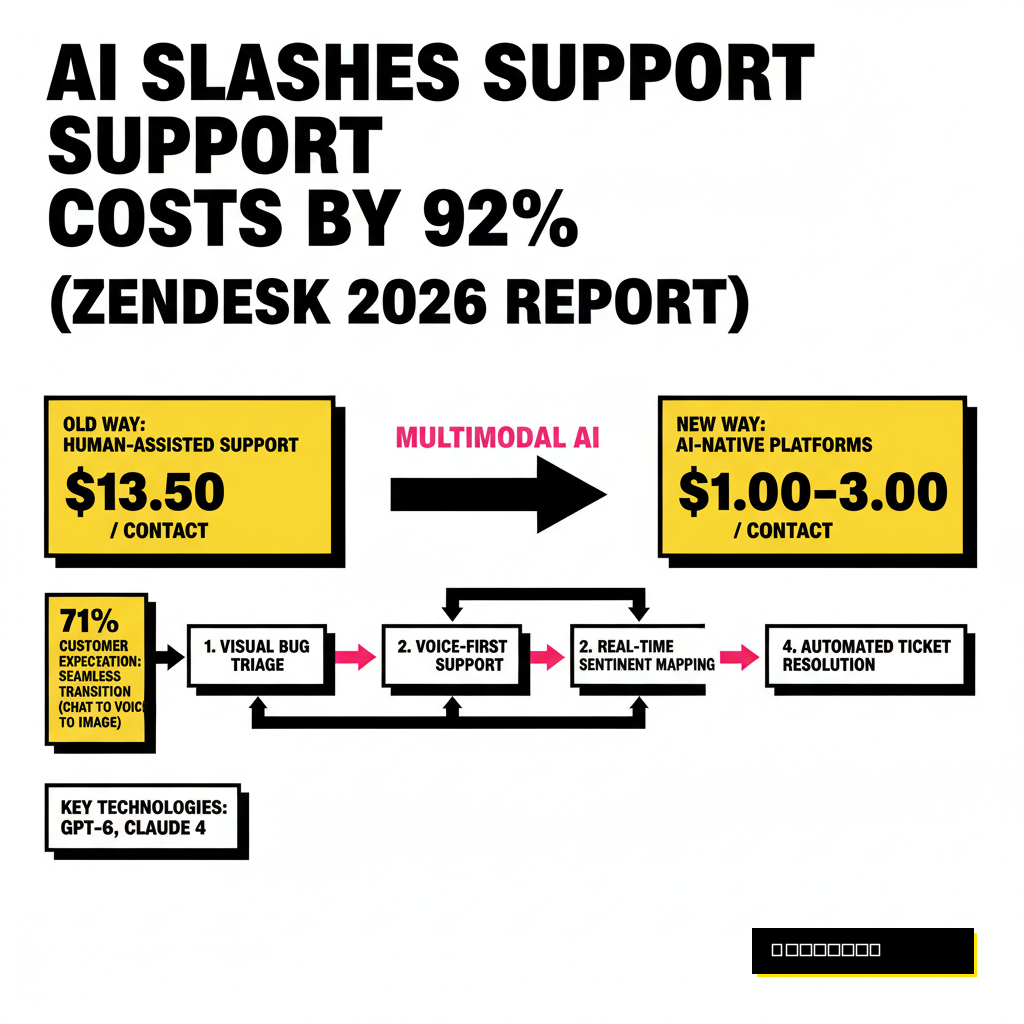

Text-only support desks belong in 2024. If your team still spends hours asking for screenshots or clarifying voice notes, your overhead is higher than it needs to be. Multimodal AI has changed the expectations of the modern consumer. According to Zendesk's 2026 CX Trends Report, 71% of customers now expect a single conversation to transition from chat to voice to image sharing without restarting the thread. This capability is no longer a luxury: it is the baseline for efficiency.

Multimodal models like GPT-6 and Claude 4 process diverse inputs simultaneously. Instead of reading a description of a problem, these systems look at the evidence. They hear the frustration in a caller's voice and see the error code in a blurry photo. Business leaders who adopt these tools early are seeing dramatic shifts in their unit economics. Gartner recently found that AI-native platforms resolve issues at a cost of $1 to $3 per contact, compared to the $13.50 median for human-assisted support.
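To make the unit economics concrete, here is a rough back-of-the-envelope sketch. The per-contact costs are the figures quoted above; the monthly volume of 10,000 contacts and the function name `monthly_savings` are purely illustrative assumptions, not data from any report.

```python
def monthly_savings(contacts: int, ai_cost: float, human_cost: float = 13.50) -> float:
    """Estimate the monthly saving when `contacts` move from human to AI handling.

    human_cost defaults to the $13.50 median quoted in the article.
    """
    return contacts * (human_cost - ai_cost)


# Hypothetical volume: 10,000 contacts per month.
low = monthly_savings(10_000, ai_cost=3.00)   # upper end of the AI cost range
high = monthly_savings(10_000, ai_cost=1.00)  # lower end of the AI cost range
print(f"Estimated monthly savings: ${low:,.0f} to ${high:,.0f}")
```

The estimate ignores platform fees, integration work, and the share of contacts that still need a human, so treat it as a ceiling rather than a forecast.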

1. Visual Bug Triage and Screen Recording Analysis

Users are notoriously bad at describing technical glitches. They send vague messages like "the button doesn't work" without providing context. Multimodal AI solves this by analyzing screen recordings and screenshots directly. When a user uploads a video of a failing checkout flow, the AI identifies the exact UI element causing the friction. It extracts the console errors visible in the recording and matches them against your documentation.

Technical teams then receive a pre-filled ticket with the exact reproduction steps already written. This process mirrors the efficiency found in our guide on 9 GPT-6 Prompt Templates for Micro-Service Refactoring, where structured AI logic handles the heavy lifting of code analysis. By the time a human engineer sees the ticket, the root cause is often already identified. Automated triage of this nature cuts out the clarifying back-and-forth, which typically inflates resolution times by 40%.
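One way to picture a pre-filled ticket is as a small structured record that the model populates and your ticketing system renders. The sketch below is an assumption about what such a record might hold. The `TriageTicket` class, its field names, and the sample values are hypothetical and not tied to any vendor's schema.

```python
from dataclasses import dataclass, field


@dataclass
class TriageTicket:
    """Hypothetical shape of a ticket pre-filled from a screen recording."""
    summary: str
    ui_element: str
    console_errors: list[str] = field(default_factory=list)
    repro_steps: list[str] = field(default_factory=list)

    def to_text(self) -> str:
        """Render the ticket as plain text for an engineer to read."""
        lines = [
            f"Summary: {self.summary}",
            f"Affected element: {self.ui_element}",
            "",
            "Steps to reproduce:",
        ]
        lines += [f"{i}. {step}" for i, step in enumerate(self.repro_steps, start=1)]
        if self.console_errors:
            lines += ["", "Console errors:"]
            lines += [f"- {error}" for error in self.console_errors]
        return "\n".join(lines)


# Illustrative values only; a real system would fill these from the recording.
ticket = TriageTicket(
    summary="Checkout button does not respond",
    ui_element="button#place-order",
    console_errors=["TypeError: cart.total is undefined"],
    repro_steps=["Add an item to the cart", "Open checkout", "Click Place Order"],
)
print(ticket.to_text())
```

Keeping the record structured, rather than free text, makes it easy to route on fields such as the affected element.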

2. Voice-First Support and Real-Time Sentiment Mapping

Voice is making a massive comeback as the preferred channel for complex issues. Modern AI agents like Intercom's Fin Voice now handle natural, real-time conversations that sound indistinguishable from human speech. These systems do more than just transcribe: they analyze the acoustic properties of the call. If a customer is shouting or speaking with a high-pitched, stressed tone, the AI detects this immediately.

Sentiment mapping allows the system to prioritize tickets based on emotional urgency rather than just chronological order. A calm inquiry about a delivery date might wait, but a distressed caller dealing with a frozen bank account gets moved to the front of the queue. McKinsey reports that AI deployments of this type can reduce total human interactions by nearly 50%. Your agents only step in when the AI recognizes that a situation requires high-level empathy or complex negotiation.
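Urgency-based ordering can be sketched with a standard priority queue. In this minimal example, the `urgency` score (0.0 for calm, 1.0 for highly distressed) is assumed to come from an upstream sentiment model, and the `SupportQueue` class is a hypothetical illustration rather than any platform's actual API.

```python
import heapq
import itertools


class SupportQueue:
    """Orders tickets by emotional urgency first, then by arrival order."""

    def __init__(self) -> None:
        self._heap: list[tuple[float, int, str]] = []
        self._arrivals = itertools.count()

    def push(self, subject: str, urgency: float) -> None:
        # heapq is a min-heap, so negate urgency to pop the most urgent first.
        # The arrival counter breaks ties so equally urgent tickets stay in order.
        heapq.heappush(self._heap, (-urgency, next(self._arrivals), subject))

    def pop(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


queue = SupportQueue()
queue.push("Delivery date question", urgency=0.1)
queue.push("Frozen bank account", urgency=0.9)
queue.push("Password reset", urgency=0.1)

while queue:
    print(queue.pop())
```

The distressed caller is served first, while the two calm inquiries keep their original order. In production you would likely also cap how long a low-urgency ticket can wait.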

3. Automated Identity Verification and Secure Access

Security is the biggest bottleneck in modern support. Forcing a customer to wait for a manual ID check is a recipe for high churn. Multimodal AI now handles liveness detection and document verification in seconds. A customer holds their ID up to their webcam, and the AI compares the ID photo against the live video feed to confirm that the person on camera is real and not a deepfake. This technology has become essential as deepfake-enabled fraud surpassed $25 billion in losses last year.

Compliance is another area where these tools shine. If you are operating in the European market, you must align your verification methods with local regulations. Our analysis on Credo AI vs Vanta: Choosing Your 2026 EU AI Act Strategy highlights how critical it is to have automated governance in place. Multimodal AI can flag biometric data that violates privacy laws before it ever reaches your permanent database. This protection saves your company from massive fines while providing a frictionless experience for the user.

Manual Verification

- Agents wait for high-res email uploads

- 40-60% user abandonment rate

- High risk of human oversight in fraud

- Costly 24/7 human staffing needed

AI Multimodal Verification

- Real-time liveness detection via webcam

- Verification completed in under 30 seconds

- Detects deepfakes with 99.8% accuracy

- Zero-data architecture for compliance

4. Physical Product Troubleshooting via Computer Vision

Hardware companies face a unique challenge: they cannot "see" what the customer sees. Multimodal AI changes this by turning every customer's smartphone into a diagnostic tool. If a coffee machine is leaking, the customer points their camera at the device. The AI identifies the make and model, spots the specific valve that is misaligned, and overlays an AR instruction on the screen to show how to tighten it.

Support platforms are rapidly integrating these vision capabilities. Intercom's Fin Vision allows agents to diagnose problems from photos with the same ease as reading a text message. Instead of shipping products back for inspection, you can resolve 30% more hardware issues remotely. This reduction in logistics costs directly impacts your bottom line. Companies using computer vision in support report a significant rise in first-contact resolution rates for physical goods.

| Platform | Vision Capability | Best Use Case |
|---|---|---|
| Intercom Fin | Native Image Recognition | SaaS Screenshots & E-commerce |
| Zendesk AI | Multimodal Ticket Objects | Enterprise Omnichannel |
| Salesforce Agentforce | Atlas Reasoning Engine | Complex Field Service |

5. Scaling Global Support with Live Audio Translation

Hiring native speakers for twenty different markets is expensive and slow. Multimodal AI now offers near-zero-latency audio translation. A customer in Japan can call your support line and speak in Japanese, while your agent in London hears the query in English in real time. The AI maintains the original tone and inflection of the speaker, ensuring that the human connection remains intact.

Expansion into new territories no longer requires a massive local hiring surge. You can centralize your support operations while providing a localized experience. This technology also works for chat and video. AI agents now translate text inside images, such as foreign language error messages or receipts, instantly. By removing the language barrier, you broaden your market reach without doubling your headcount. Global businesses are currently using this to maintain 24/7 coverage across all time zones with a single unified team.

6. Receipt and Invoice Processing for Returns

Return processing is often the most tedious part of the inbox. Customers upload crumpled receipts, screenshots of bank statements, or blurry photos of shipping labels. Multimodal AI extracts data from these images with 98% accuracy. It automatically verifies the purchase date, price, and item SKU against your database. If the return falls within your policy, the AI generates the shipping label and sends it to the customer without human intervention.
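The policy check that follows extraction can be sketched in a few lines. This example assumes the vision model has already turned the receipt image into structured fields. The `ExtractedReceipt` class, the in-memory `orders` lookup, and the 30-day return window are all hypothetical stand-ins for your own data and policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ExtractedReceipt:
    """Fields assumed to be produced by the receipt-extraction step."""
    sku: str
    price: float
    purchase_date: date


def is_return_eligible(
    receipt: ExtractedReceipt,
    orders: dict[str, dict],
    today: date,
    window_days: int = 30,  # assumed policy; substitute your own
) -> bool:
    """Check the extracted receipt against order records and the return window."""
    order = orders.get(receipt.sku)
    if order is None:
        return False  # no matching purchase on file
    matches_record = (
        order["purchase_date"] == receipt.purchase_date
        and abs(order["price"] - receipt.price) < 0.01
    )
    within_window = today - receipt.purchase_date <= timedelta(days=window_days)
    return matches_record and within_window


# Illustrative order database keyed by SKU.
orders = {"SKU-1001": {"price": 49.99, "purchase_date": date(2026, 3, 1)}}
receipt = ExtractedReceipt(sku="SKU-1001", price=49.99, purchase_date=date(2026, 3, 1))

print(is_return_eligible(receipt, orders, today=date(2026, 3, 20)))
print(is_return_eligible(receipt, orders, today=date(2026, 5, 1)))
```

Anything that fails the check, such as a price mismatch or an unknown SKU, is a natural candidate to route to a human agent rather than reject outright.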

Efficiency of this scale allows your agents to focus on high-value tasks. Instead of manual data entry, they manage the exceptions and edge cases. Automation in the returns process can save an e-commerce brand thousands of dollars in labor costs every month. The software doesn't just read the text: it understands the structure of the document. It knows the difference between a tax ID and a total amount, even on non-standardized invoices from international vendors.

7. Video Sentiment Analysis for VIP Escalation

High-value clients expect high-touch service. When a VIP customer initiates a video call, multimodal AI monitors the interaction in the background. It looks for micro-expressions that indicate dissatisfaction or confusion. If the customer's facial expressions don't match their verbal agreement, the AI nudges the agent with a suggested pivot or an immediate discount offer.

Real-time coaching for agents is the secret to high CSAT scores. The AI acts as a second pair of eyes, catching details that a human might miss during a busy shift. It can even suggest when to move a conversation from text to video if it senses that a written explanation is failing. This proactive approach prevents small misunderstandings from turning into major churn events. Your best customers feel heard and understood because the technology is actually paying attention to their non-verbal cues.

8. Automated Documentation Generation from Screen Shares

Every time an agent solves a unique problem via screen share, that knowledge is usually lost. Multimodal AI changes this by recording the session and automatically generating a new help center article. It captures the steps taken, summarizes the solution, and even creates annotated screenshots from the video. This creates a self-healing knowledge base that grows more comprehensive with every interaction.

Documentation is often the first thing to fall behind in a fast-growing company. By automating the creation of these resources, you ensure that future customers can find the answer themselves. Gartner notes that memory-rich AI is a top trend for 2026, as systems begin to retain and apply context across thousands of previous interactions. Turning a one-off support call into a permanent resource is the ultimate way to scale your expertise without increasing your workload. Your support inbox becomes a library of solutions rather than a pile of repetitive problems.

Success in 2026 requires a departure from the text-only mindset. The winners are those who embrace the full spectrum of human communication: sight, sound, and text. By deploying multimodal AI, you aren't just saving money: you are building a more responsive, intelligent, and human support experience. Start by identifying your most visual or audio-heavy tickets and let the machines do the heavy lifting.