Scaling Global Content Without the Manual Headache

Running a global enterprise site in 2026 feels like spinning plates in a windstorm. If you manage content across twenty languages, you already know that a standard crawl only tells half the story. Traditional tools might flag a missing meta tag or a broken link, but they can't tell you if your Spanish translation sounds like a robot or if your Japanese product descriptions miss local cultural nuances. Most SEO teams still rely on manual spot checks by native speakers, a process that is slow, expensive, and impossible to scale. You need a way to audit the actual quality and intent of your content across every locale simultaneously.

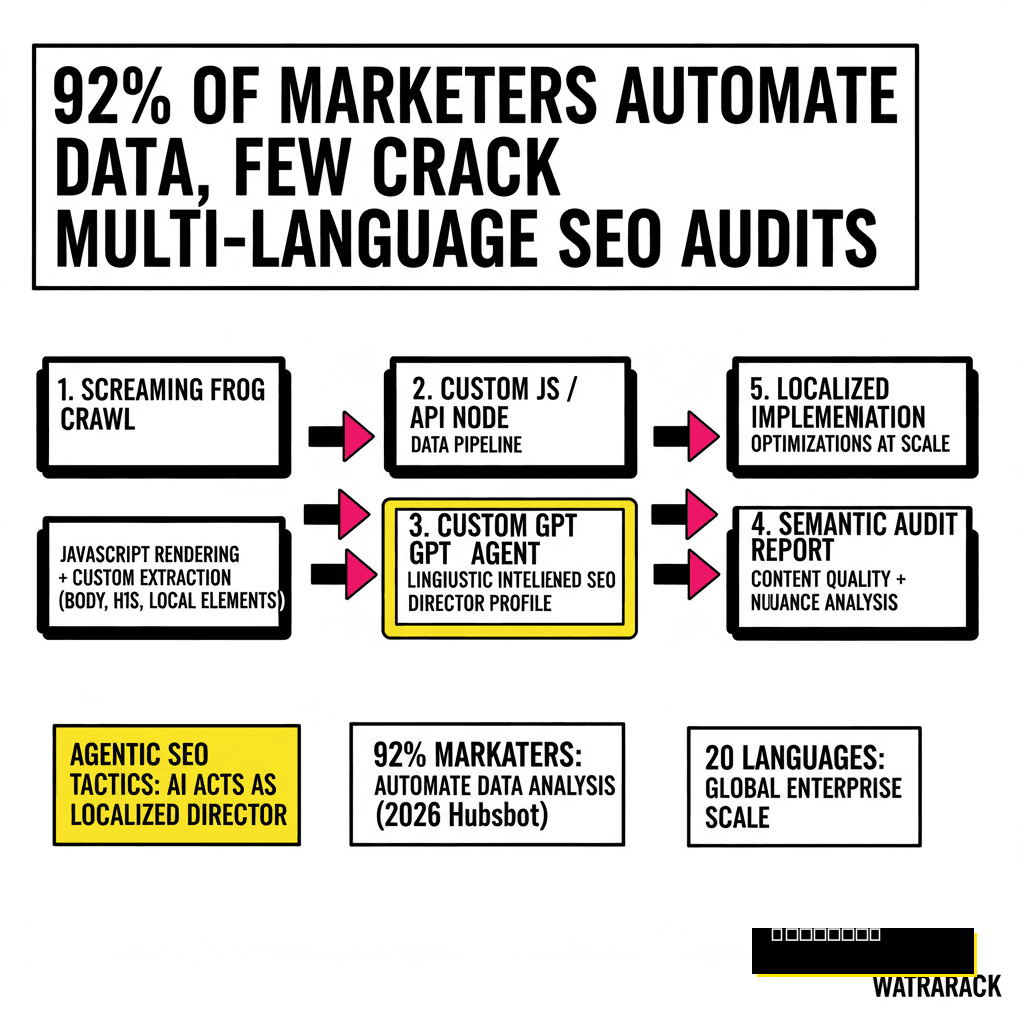

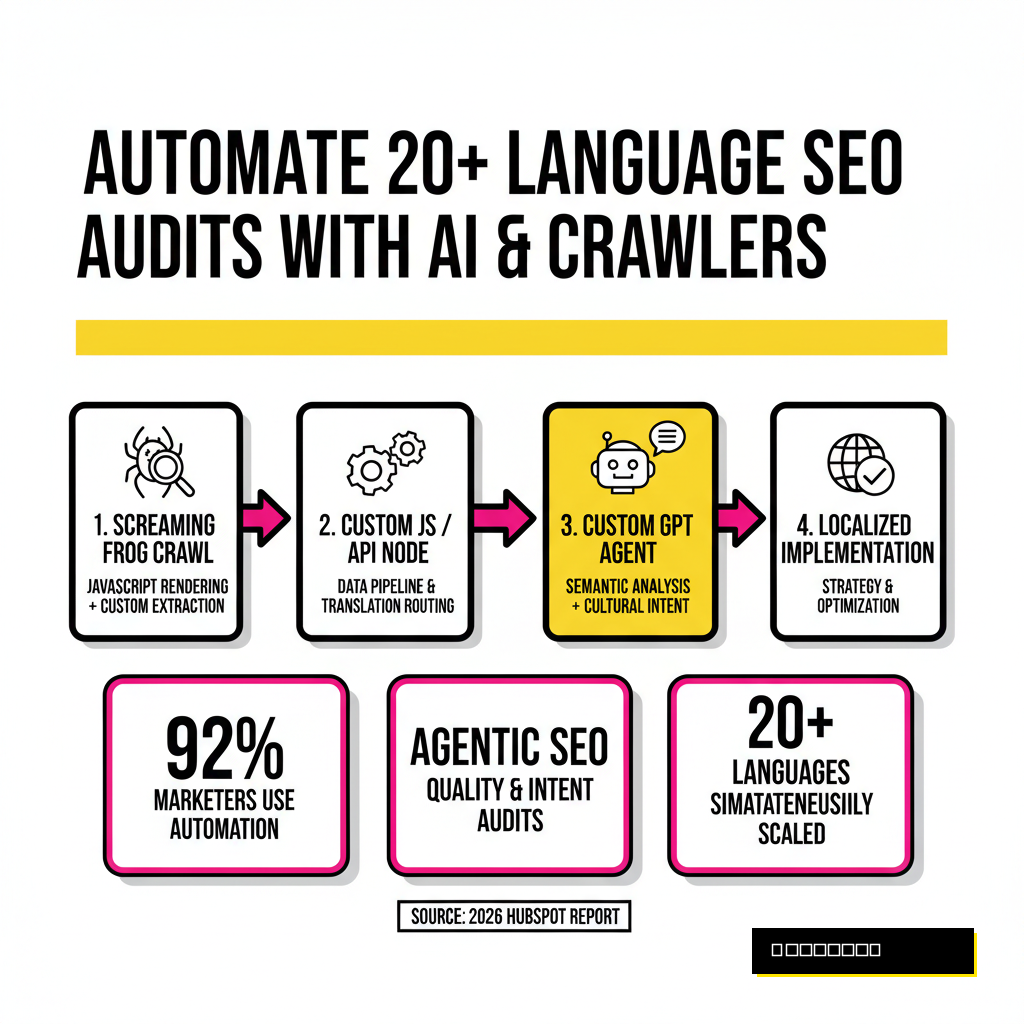

By combining the crawling power of Screaming Frog with the linguistic intelligence of Custom GPT Agents, we can finally bridge this gap. This approach moves beyond basic technical checks and enters the territory of agentic SEO tactics. We aren't just looking for errors anymore. We are training agents to act as localized SEO directors who can review thousands of pages in minutes. According to a 2026 HubSpot report, 92% of marketers now apply automation for data analysis, yet few have cracked the code for multi-language semantic audits at scale.

Configuring Screaming Frog for Multilingual Discovery

Setting up your crawler correctly is the foundation of a successful automated audit. You need to ensure the SEO Spider is capturing the right signals before passing them to your AI agents. Start by enabling JavaScript rendering in the configuration menu. This is vital because many modern international sites inject content dynamically based on user location. If your crawler only sees the raw HTML, your GPT agent will receive incomplete data, leading to flawed analysis. If your site varies content by request headers, adjust the 'User-Agent' and custom HTTP header settings (for example, Accept-Language) to mimic local visitors. Keep in mind that changing the user agent does not change where a request originates: if your site serves different content based on IP addresses, you will need to route the crawl through a proxy or VPN in the target region.

You should also focus on custom extraction. Instead of just pulling titles and descriptions, configure Screaming Frog to scrape the main body text, H1 tags, and even specific localized elements like currency symbols or regional contact info. This data becomes the prompt input for your GPT. As Aleyda Solis noted in a recent interview on AI search crawlability, proper technical foundations decide visibility. Without clean data extraction, even the smartest agent will struggle to provide meaningful feedback. You can find detailed technical guidance for these advanced setups in Screaming Frog's official documentation.
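As a minimal sketch of what that hand-off could look like, assume the crawl has been exported to CSV with columns named `Address`, `H1-1`, and `Body Text 1`. Those column names are assumptions for illustration; the real ones depend on how you name your extractors. The snippet collapses whitespace, caps the text length, and drops rows where extraction returned nothing, so each record is a compact prompt input.

```python
import csv
import io
import re

# Hypothetical column names; match them to your own export.
URL_COL = "Address"
H1_COL = "H1-1"
BODY_COL = "Body Text 1"


def clean_text(raw: str, max_chars: int = 4000) -> str:
    """Collapse runs of whitespace and cap the length of extracted text."""
    collapsed = re.sub(r"\s+", " ", raw or "").strip()
    return collapsed[:max_chars]


def load_pages(csv_file) -> list[dict]:
    """Read an exported crawl and return one cleaned record per URL."""
    pages = []
    for row in csv.DictReader(csv_file):
        body = clean_text(row.get(BODY_COL, ""))
        if not body:
            continue  # skip rows where extraction returned nothing
        pages.append({
            "url": row[URL_COL],
            "h1": clean_text(row.get(H1_COL, ""), max_chars=200),
            "body": body,
        })
    return pages


# Inline sample standing in for a real export file.
sample = io.StringIO(
    "Address,H1-1,Body Text 1\n"
    'https://example.com/es/,Zapatos,"  Envío   gratis\n a toda España  "\n'
    "https://example.com/ja/,,\n"
)
pages = load_pages(sample)
print(pages)
```

With a real export you would pass an open file handle instead of the inline sample. Capping the length keeps token usage predictable, at the cost of truncating very long pages.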

Building the Linguist GPT Agent

The secret to a high-performing audit isn't just using AI, but using a specialized agent. A generic prompt like 'Check this SEO' produces generic results. Instead, you must build a Custom GPT with a specific persona. I call this the 'Global SEO Linguist.' This agent is programmed with deep knowledge of regional search trends, local idioms, and specific market competitors. You provide it with a knowledge base of your brand guidelines and a clear set of audit criteria. It needs to understand the difference between a direct translation and a localized adaptation.

When you create this agent in the OpenAI dashboard, give it clear instructions on how to handle different languages. For instance, tell it to evaluate if the French content uses the formal 'vous' or informal 'tu' consistently according to your brand voice. Instruct it to flag any cultural taboos or outdated regional slang. This level of detail is what separates a Proposia-level audit from a basic automated scan. We are seeing a massive shift in how these agents are deployed, as discussed in our analysis of the 2026 enterprise agentic battle between the tech giants.
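One way to keep those per-language instructions consistent is to generate them from a small rules object rather than writing each prompt by hand. The sketch below is illustrative only: the `LocaleRules` fields, the brand name, and the JSON response shape are assumptions, not a format required by any tool.

```python
from dataclasses import dataclass, field


@dataclass
class LocaleRules:
    """Audit criteria for one locale. All values are illustrative."""
    language: str
    region: str
    formality: str  # e.g. "formal (vous)" or "informal (tu)"
    extra_checks: list[str] = field(default_factory=list)


def build_system_prompt(brand: str, rules: LocaleRules) -> str:
    """Assemble the persona and audit criteria into one system prompt."""
    lines = [
        f"You are a senior SEO linguist reviewing {rules.language} content "
        f"for {brand} in {rules.region}.",
        f"Required register: {rules.formality}. Flag every inconsistency.",
        "Distinguish direct translation from localized adaptation.",
        "Flag cultural taboos and outdated regional slang.",
    ]
    lines += [f"Also check: {check}" for check in rules.extra_checks]
    lines.append('Respond with JSON: {"score": 0-100, "issues": [...]}.')
    return "\n".join(lines)


french = LocaleRules(
    language="French",
    region="France",
    formality="formal (vous)",
    extra_checks=["prices use the euro sign after the amount"],
)
print(build_system_prompt("ExampleBrand", french))
```

Keeping the rules as data means a new market only needs a new `LocaleRules` entry, and the audit criteria stay identical across languages.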

Step 1: API Configuration

- Obtain your OpenAI API key from the platform dashboard.

- Navigate to 'Config > API Access > AI' in Screaming Frog.

- Connect your account and select gpt-4o or the latest available model.

Step 2: Prompt Engineering

- Write a system prompt defining the agent's linguistic persona.

- Map crawl columns (Title, H1, Text) to the prompt variables.

- Set the 'AI' tab to trigger analysis for every localized URL.
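Screaming Frog handles the column-to-prompt mapping in its own interface. To illustrate the idea outside the tool, here is a small sketch using Python's `string.Template`. The column names in `COLUMN_MAP` and the prompt wording are assumptions.

```python
from string import Template

USER_PROMPT = Template(
    "Locale: $locale\n"
    "URL: $url\n"
    "Title: $title\n"
    "H1: $h1\n"
    "Body text:\n$text\n\n"
    "Audit this page against the criteria in your instructions."
)

# Prompt variable -> crawl column (assumed column names).
COLUMN_MAP = {
    "url": "Address",
    "title": "Title 1",
    "h1": "H1-1",
    "text": "Body Text 1",
}


def render_prompt(row: dict, locale: str) -> str:
    """Fill the prompt template from one crawl row; missing columns become ''."""
    values = {var: row.get(col, "") for var, col in COLUMN_MAP.items()}
    return USER_PROMPT.substitute(locale=locale, **values)


row = {
    "Address": "https://example.com/es-mx/zapatos",
    "Title 1": "Zapatos para correr",
    "H1-1": "Zapatos",
    "Body Text 1": "Desde $499 MXN con envío gratis.",
}
print(render_prompt(row, "es-MX"))
```

`Template.substitute` only parses the template itself, so a dollar sign inside the page text (as in the price above) passes through unchanged.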

Auditing Hreflang and Semantic Context at Scale

Hreflang errors are the bane of every international SEO's existence. Screaming Frog is excellent at finding technical mismatches, such as return tag errors or non-canonical targets. On its own, however, it cannot tell you whether the content on your German page actually matches the intent of the English original. This is where the agentic connection excels. By feeding the extracted text from both the source and target pages into your GPT agent, you can perform a semantic parity check. The agent can verify that the German page isn't just a technical copy but a relevant, localized version of the content.
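A semantic parity check of this kind could be sketched as follows. The page dictionary shape, the prompt wording, and the MATCH/PARTIAL/MISMATCH labels are assumptions, and the model call is passed in as a plain function (`ask_model`) so the example runs without any API access; in practice that function would wrap your model client.

```python
from typing import Callable, Iterator


def pair_with_source(pages: dict, source_lang: str = "en") -> Iterator[tuple]:
    """Yield (source, target) pairs by following each target's hreflang map."""
    for page in pages.values():
        if page["lang"] == source_lang:
            continue
        source = pages.get(page["hreflang"].get(source_lang, ""))
        if source is not None:  # pages without a source link are skipped
            yield source, page


def parity_prompt(source: dict, target: dict, max_chars: int = 3000) -> str:
    """Build one prompt holding both texts and the parity question."""
    return (
        f"SOURCE ({source['lang']}): {source['text'][:max_chars]}\n\n"
        f"TARGET ({target['lang']}): {target['text'][:max_chars]}\n\n"
        "Does the target cover the same search intent, offers, and calls to "
        "action as the source? Answer MATCH, PARTIAL, or MISMATCH, then give "
        "one sentence of reasoning."
    )


def check_parity(pages: dict, ask_model: Callable[[str], str]) -> dict:
    """Return the model's verdict for every target URL that has a source."""
    return {
        target["url"]: ask_model(parity_prompt(source, target))
        for source, target in pair_with_source(pages)
    }


EN, DE, FR = "https://example.com/en/", "https://example.com/de/", "https://example.com/fr/"
pages = {
    EN: {"url": EN, "lang": "en", "text": "Free shipping on all orders.", "hreflang": {}},
    DE: {"url": DE, "lang": "de", "text": "Kostenloser Versand.", "hreflang": {"en": EN}},
    FR: {"url": FR, "lang": "fr", "text": "Livraison gratuite.", "hreflang": {}},
}


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call."""
    return "MATCH: stub response"


print(check_parity(pages, fake_model))
```

The French page is skipped here because it has no hreflang link back to the English source, which is itself a finding worth logging alongside the semantic verdicts.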

The agent can also look for 'language bleeding,' where snippets of English text accidentally remain on a translated page. This often happens in footers, navigation menus, or within JavaScript-rendered components. A standard crawler might ignore these small fragments, but they make the page feel unfinished to local readers and can weaken your local E-E-A-T signals. Use a custom extraction rule to pull all text from specific CSS selectors and have the GPT agent score the 'Language Purity' of each page. This creates a prioritized list of pages that need human editorial review, saving your team hundreds of hours of manual browsing.
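Before spending API calls on every fragment, a cheap pre-filter can shortlist likely offenders. The sketch below is a simple stopword heuristic, not the GPT-based 'Language Purity' score described above: it flags fragments on non-English pages where a high share of words are common English function words. The stopword list, threshold, and selector names are all illustrative assumptions.

```python
import re

# A tiny illustrative list; words shared with other languages (e.g. "in")
# are left out to reduce false positives.
EN_STOPWORDS = {
    "the", "and", "of", "to", "is", "for", "with",
    "your", "our", "this", "that", "you", "are", "we",
}


def english_ratio(fragment: str) -> float:
    """Share of words in the fragment that are common English stopwords."""
    words = re.findall(r"\w+", fragment.lower())
    if not words:
        return 0.0
    return sum(1 for w in words if w in EN_STOPWORDS) / len(words)


def find_bleed(fragments: dict, threshold: float = 0.25, min_words: int = 4) -> list:
    """Return selectors whose text looks English, highest ratio first."""
    flagged = []
    for selector, text in fragments.items():
        if len(re.findall(r"\w+", text)) < min_words:
            continue  # too short to judge reliably
        ratio = english_ratio(text)
        if ratio >= threshold:
            flagged.append((ratio, selector))
    return [selector for ratio, selector in sorted(flagged, reverse=True)]


# Text extracted per CSS selector from a German page (made-up example).
fragments = {
    "footer": "All rights reserved. Read our privacy policy and the terms of use.",
    "nav": "Startseite Produkte Kontakt",
    "main": "Wir liefern in ganz Deutschland mit kostenlosem Versand.",
}
print(find_bleed(fragments))
```

A heuristic like this will miss short English labels and will not catch bleed from other languages, so it works best as a triage step ahead of the agent's review rather than a replacement for it.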

| Audit Metric | Traditional Method | Agentic Method (GPT + SF) |
|---|---|---|
| Hreflang Validation | Technical tag match only | Contextual & semantic verification |
| Translation Quality | Manual spot checks | Automated scoring of 100% of pages |
| Cultural Relevance | Native speaker review | AI-driven regional nuance analysis |

The Business Impact: ROI of Localized Intelligence

Investing in automated multi-language audits isn't just about technical perfection. It is a direct driver of revenue. Data from CSA Research in their long-running 'Can't Read, Won't Buy' study shows that 76% of online shoppers prefer to buy products with information in their native language. Even more striking is that 40% of global consumers state they will never buy from websites in other languages. If your automated audit reveals that your Japanese checkout process still shows English error messages, you risk turning away a substantial share of buyers in that region, potentially approaching that 40% figure if the survey result holds for your market.

The efficiency gains are equally impressive. Before agentic workflows, a comprehensive audit of a 5,000-page multilingual site could take a month of coordination between SEOs and translation agencies. Today, you can run the same audit in an afternoon for the cost of a few API credits. This allows your team to move from reactive fixing to proactive strategy. Instead of cleaning up old messes, you can focus on expanding into new markets with the confidence that your technical and linguistic foundations are rock solid. This is the future of global search: high-speed, high-context, and largely automated, with human reviewers focused on the pages the agents flag.