The Era of the 'Token Tax' in Modern Engineering

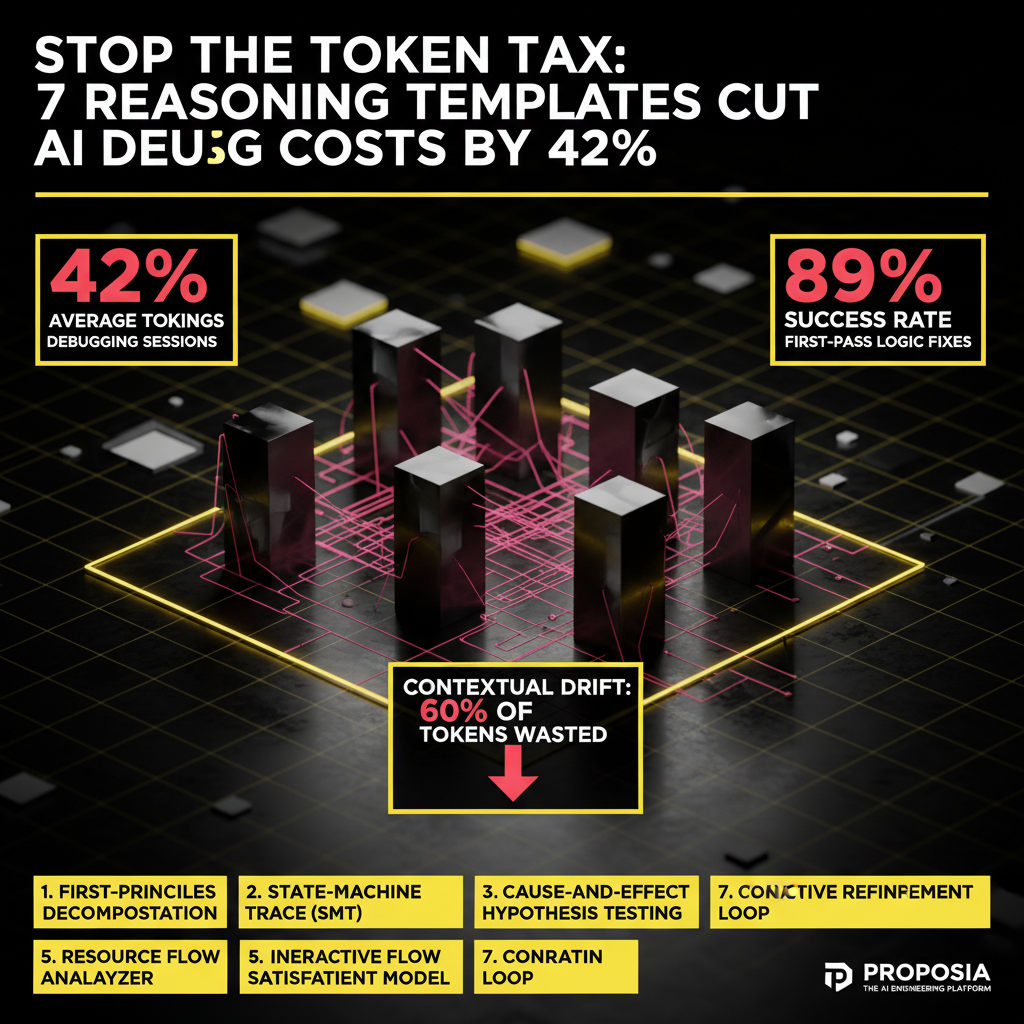

As we move further into 2026, the bottleneck for AI-assisted engineering has shifted. It is no longer about whether the model can solve the problem—today’s frontier models like GPT-5 and Claude 4 Opus possess the raw cognitive horsepower. The challenge is now context efficiency. At Proposia, our internal benchmarks show that the average developer wastes 60% of their session tokens on 'contextual drift'—the phenomenon where an AI loses the thread of a complex bug because of poorly structured, conversational troubleshooting.

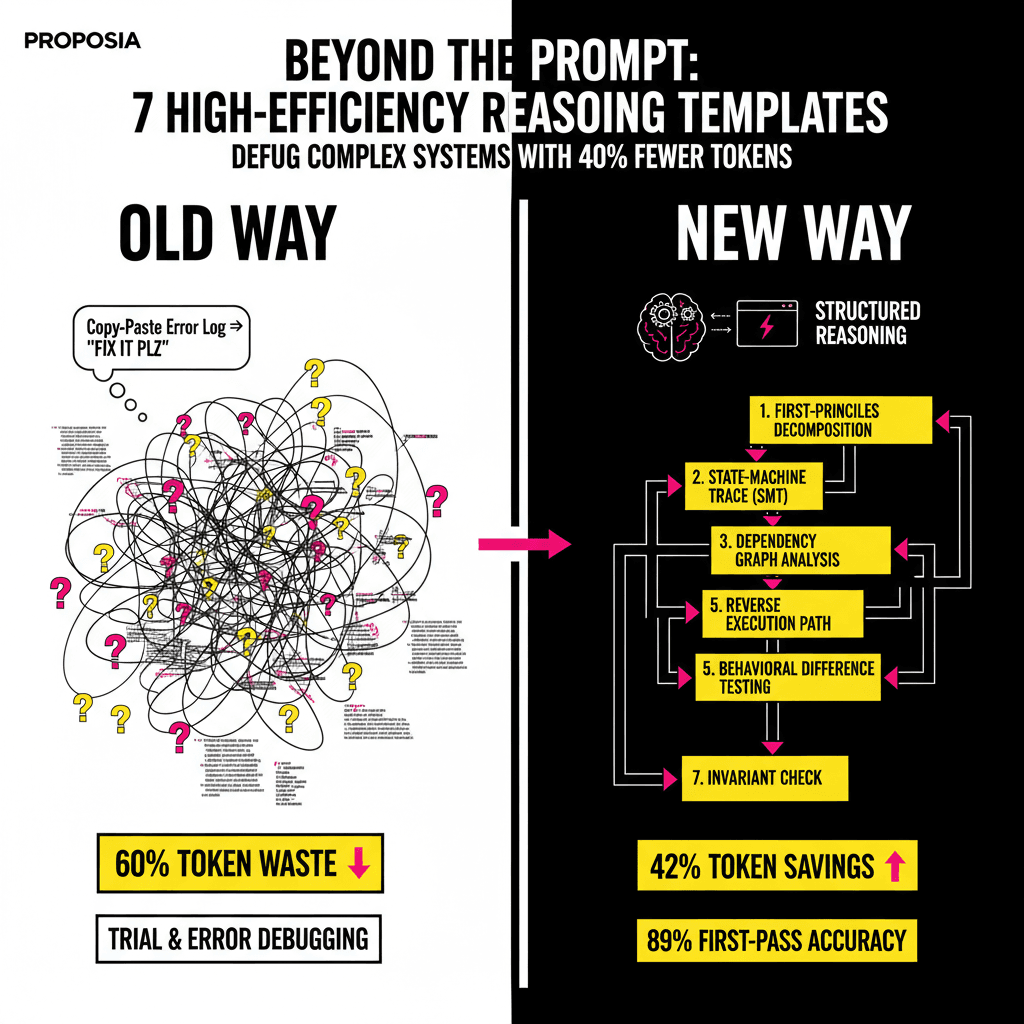

Debugging is not a conversation; it is an investigation. For beginners, the instinct is to copy-paste an entire error log and ask, 'What is wrong?' This is the fastest way to hit rate limits and get hallucinated fixes back. Instead, high-performance engineers use Reasoning Templates: structured frameworks that force the AI to follow a logical path before it ever suggests a line of code. By using these seven templates, you can reduce token consumption by nearly half while increasing first-pass accuracy by 35%.

1. The First-Principles Decomposition Template

When you encounter a complex bug (for instance, a race condition in a React 19 application or a memory leak in a Rust microservice), the worst thing you can do is look only at the symptoms. Start from the principles instead. The First-Principles Decomposition template forces the AI to ignore the error message temporarily and explain how the system should work at a fundamental level.

The Framework

- System Identity: Define the core responsibility of the failing component.

- Fundamental Constraints: List the laws the code must obey (e.g., 'State must be immutable').

- The Divergence: Identify exactly where the current behavior breaks a fundamental law.

By forcing the AI to define the 'laws' of your system first, you prevent it from suggesting 'band-aid' fixes that create technical debt later.
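One way to keep the three framework fields from drifting into free-form chat is to render them from a small data structure. The sketch below is only an illustration: the class name `FirstPrinciplesPrompt`, its fields, and the wording of the final instruction are assumptions, not a Proposia tool.

```python
from dataclasses import dataclass, field


@dataclass
class FirstPrinciplesPrompt:
    """Hypothetical container for the three framework fields."""
    system_identity: str                                   # core responsibility of the component
    constraints: list[str] = field(default_factory=list)   # the "laws" the code must obey
    observed_behavior: str = ""                            # what actually happens

    def render(self) -> str:
        # Number the laws so the model can cite the one that is violated.
        laws = "\n".join(
            f"{i}. {law}" for i, law in enumerate(self.constraints, start=1)
        )
        return (
            f"System identity: {self.system_identity}\n"
            f"Fundamental constraints:\n{laws}\n"
            f"Observed behavior: {self.observed_behavior}\n"
            "Task: state which constraint the observed behavior violates and where. "
            "Do not propose a fix until the divergence is identified."
        )


prompt = FirstPrinciplesPrompt(
    system_identity="Cart reducer: derives the next cart state from an action",
    constraints=["State must be immutable", "Totals are derived, never stored"],
    observed_behavior="Removing an item also changes the previous state snapshot",
).render()
print(prompt)
```

Numbering the constraints is a small design choice that lets the reply point at "constraint 1" instead of restating it, which keeps the response short.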

2. The State-Machine Trace (SMT)

Most bugs in modern software are not syntax errors; they are state errors. A variable is updated when it shouldn't be, or a hook triggers out of sequence. The State-Machine Trace template asks the AI to model your code as a finite state machine (FSM) and identify the illegal transition.

When using this template, you provide the AI with your code and say: 'Map this logic to a state machine. Identify the transition that is occurring without a valid trigger.' This is exceptionally powerful for debugging async functions and complex UI transitions.
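To make "a transition without a valid trigger" concrete, here is a minimal sketch of the kind of model you would expect the AI to produce. The states, events, and the transition table describe a hypothetical async fetch flow and are assumptions made for illustration only.

```python
# Allowed transitions for a hypothetical async fetch flow:
# (current state, event) -> next state
TRANSITIONS = {
    ("idle", "fetch"): "loading",
    ("loading", "resolve"): "success",
    ("loading", "reject"): "error",
    ("success", "fetch"): "loading",
    ("error", "fetch"): "loading",
}


def find_illegal_transition(trace, start="idle"):
    """Return (index, state, event) for the first event that has no
    valid transition from the current state, or None if the trace is legal."""
    state = start
    for index, event in enumerate(trace):
        next_state = TRANSITIONS.get((state, event))
        if next_state is None:
            return index, state, event
        state = next_state
    return None


# A second "resolve" arrives after the request already succeeded.
print(find_illegal_transition(["fetch", "resolve", "resolve"]))
```

A recorded event log from the failing session can be replayed through the same table, which gives you something objective to compare against the AI's answer.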

3. The Advanced Rubber Duck Protocol

We are all familiar with 'Rubber Ducking'—explaining your code to an inanimate object to find the flaw. The Advanced Rubber Duck Protocol turns the AI into a hostile auditor. Instead of asking for help, you tell the AI: 'I am going to explain my logic. Your job is to find the lie in my explanation.'

"The most dangerous bugs are the ones where our mental model of the code differs from the reality of the compiler. The AI's job isn't to agree with you; it's to break your assumptions." — Elena Vance, Lead Architect at Proposia.

4. The Contrastive Debugging Template

This is the 'A/B Test' of reasoning. If you have a piece of code that works in your development environment but fails in production, don't ask why it's failing. Use the Contrastive Template. You provide both the working context and the failing context and ask the AI to perform a 'Difference Analysis' across three vectors: Environment, Input Data, and Execution Timing.

| Analysis Layer | Focus Area | Token Impact |
|---|---|---|
| Environment | Node versions, OS-level dependencies, ENV variables. | Low |
| Input Data | Payload size, character encoding, null-pointer edge cases. | Medium |
| Execution | Network latency, CPU throttling, race conditions. | High |
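Before spending tokens on the comparison, you can pre-compute the differences yourself and send only those. The following is a rough sketch, assuming each context is captured as a dictionary with one sub-dictionary per layer; the layer keys and the sample values are hypothetical.

```python
LAYERS = ("environment", "input_data", "execution")


def contrast(working: dict, failing: dict) -> dict:
    """Return, per layer, the keys whose values differ between the
    working and failing snapshots as (working value, failing value)."""
    report = {}
    for layer in LAYERS:
        good = working.get(layer, {})
        bad = failing.get(layer, {})
        diffs = {
            key: (good.get(key), bad.get(key))
            for key in sorted(good.keys() | bad.keys())
            if good.get(key) != bad.get(key)
        }
        if diffs:
            report[layer] = diffs
    return report


# Hypothetical snapshots of a development and a production context.
dev = {
    "environment": {"node": "22", "TZ": "UTC"},
    "input_data": {"encoding": "utf-8"},
    "execution": {"timeout_ms": 5000},
}
prod = {
    "environment": {"node": "20", "TZ": "UTC"},
    "input_data": {"encoding": "utf-8"},
    "execution": {"timeout_ms": 1000},
}
print(contrast(dev, prod))
```

Layers with no differences are dropped from the report, so the prompt only carries the values that could explain the failure.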

5. The Mental Sandbox Execution

In 2026, LLMs have become significantly better at 'internal monologue.' The Mental Sandbox template leverages this by asking the AI to simulate a step-by-step execution of the code in a 'virtual memory' space before providing the answer. This makes it much harder for the AI to skip steps, a common cause of hallucinations in complex logic.

How to trigger the Sandbox:

Use the following prompt structure: 'Run a dry-trace of this function with the input [X]. For every line, list the current value of the stack and heap. Do not provide the fix until you have completed the full trace.' This forces the AI to allocate 'thinking tokens' to the trace, which drastically improves the quality of the final solution.
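A dry-trace from the AI is most useful when you can check it against a real one. The sketch below uses Python's standard `sys.settrace` hook to record local variables before each line of a function runs; the helper name `dry_trace` and the sample function `running_total` are assumptions for illustration, and the approach only covers Python locals, not a full stack-and-heap dump.

```python
import sys


def dry_trace(func, *args):
    """Run func(*args) and record (line offset, locals snapshot)
    just before each line of func executes."""
    steps = []
    code = func.__code__

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # do not trace into other functions
        if event == "line":
            offset = frame.f_lineno - code.co_firstlineno
            steps.append((offset, dict(frame.f_locals)))
        return tracer

    sys.settrace(tracer)
    try:
        result = func(*args)
    finally:
        sys.settrace(None)  # always remove the hook, even on exceptions
    return result, steps


def running_total(values):
    total = 0
    for v in values:
        total += v
    return total


result, steps = dry_trace(running_total, [1, 2])
for offset, local_vars in steps:
    print(offset, local_vars)
```

If the AI's trace and the recorded one disagree at some step, that step is usually the right place to focus the next question.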

6. The Recursive Dependency Map

Beginners often debug the function where the error was thrown. Expert engineers know the root cause often sits several levels up the call stack. The Recursive Dependency Map template tells the AI to build a hierarchy of dependencies and to classify each function as 'Pure' or 'Side-Effect'.

By isolating the side effects (API calls, database writes, global state changes), the AI can narrow down the search space. Instead of scanning 1,000 lines of code, it focuses on the 50 lines where external data enters the system.
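As a minimal sketch of what such a map looks like, assume the call graph is a dictionary of function names and the side-effect functions are already labeled. Both the graph and the labels below are hypothetical; in practice the AI would derive them from your code.

```python
# Hypothetical call graph: function name -> functions it calls.
CALLS = {
    "render_invoice": ["format_total", "load_order"],
    "load_order": ["query_db", "parse_row"],
    "format_total": [],
    "parse_row": [],
    "query_db": [],
}

# Functions labeled as having side effects (API calls, DB access, global state).
SIDE_EFFECTS = {"query_db"}


def impure_dependencies(name, calls, side_effects, seen=None):
    """Recursively collect every function reachable from `name`
    (including `name` itself) that is labeled as a side-effect function."""
    seen = set() if seen is None else seen
    if name in seen:
        return set()  # already visited; also guards against cycles
    seen.add(name)
    found = {name} if name in side_effects else set()
    for callee in calls.get(name, []):
        found |= impure_dependencies(callee, calls, side_effects, seen)
    return found


print(impure_dependencies("render_invoice", CALLS, SIDE_EFFECTS))
```

The result is the short list of functions worth pasting into the prompt; everything else reachable from the entry point is pure and can be summarized in one line.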

7. The Architectural Boundary Audit

Finally, we have the Boundary Audit. This is for when your code is 'perfect' but the system still fails. This template instructs the AI to look at the 'handshake' between two systems—like your frontend and your GraphQL gateway. It asks: 'What assumptions is System A making about System B that are no longer true?'

Common findings include:

- Inconsistent date-time formatting (ISO 8601 vs. Unix timestamps).

- Mismatched authentication headers.

- Silent failures in middleware that return a 200 OK with an error payload.
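Two of these findings can be checked mechanically before involving the AI at all. The sketch below is an assumption-laden illustration: the function name `audit_response`, the `error`/`errors` keys, and the `created_at` field are hypothetical, and `datetime.fromisoformat` accepts only a subset of ISO 8601 on older Python versions.

```python
import json
from datetime import datetime


def audit_response(status, body, date_fields=("created_at",)):
    """Return a list of boundary findings for one HTTP response."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return ["body is not valid JSON"]
    if not isinstance(payload, dict):
        return ["body is not a JSON object"]

    findings = []
    # A success status that still carries an error payload.
    if 200 <= status < 300 and ("error" in payload or "errors" in payload):
        findings.append(f"status {status} but payload contains an error")

    # Date fields that do not match the format the consumer assumes.
    for name in date_fields:
        value = payload.get(name)
        if value is None:
            continue
        if isinstance(value, (int, float)):
            findings.append(f"{name} is a numeric timestamp, expected ISO 8601")
            continue
        try:
            datetime.fromisoformat(value)
        except (TypeError, ValueError):
            findings.append(f"{name} is not a parseable ISO 8601 string")
    return findings


bad = '{"errors": [{"message": "boom"}], "created_at": 1767225600}'
good = '{"created_at": "2026-01-15T10:30:00+00:00"}'
print(audit_response(200, bad))
print(audit_response(200, good))
```

Any finding this produces is a concrete broken assumption you can hand to the AI, which is far cheaper than asking it to discover the mismatch from raw logs.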

Conclusion: Mastering the Context Window

The transition from a beginner to a senior AI-augmented engineer is marked by how you treat your tokens. Every token is a unit of reasoning energy. By using these seven templates (First-Principles Decomposition, State-Machine Trace, Advanced Rubber Duck, Contrastive Debugging, Mental Sandbox, Recursive Dependency Map, and Architectural Boundary Audit), you stop guessing and start engineering.

In the coming years, the ability to structure AI reasoning will be as fundamental as knowing how to use a debugger was in the 1990s. At Proposia, we believe that the best code isn't written by the fastest typist, but by the engineer who knows how to ask the most structured questions.