Capturing Reality: NeRF vs. Gaussian Splatting

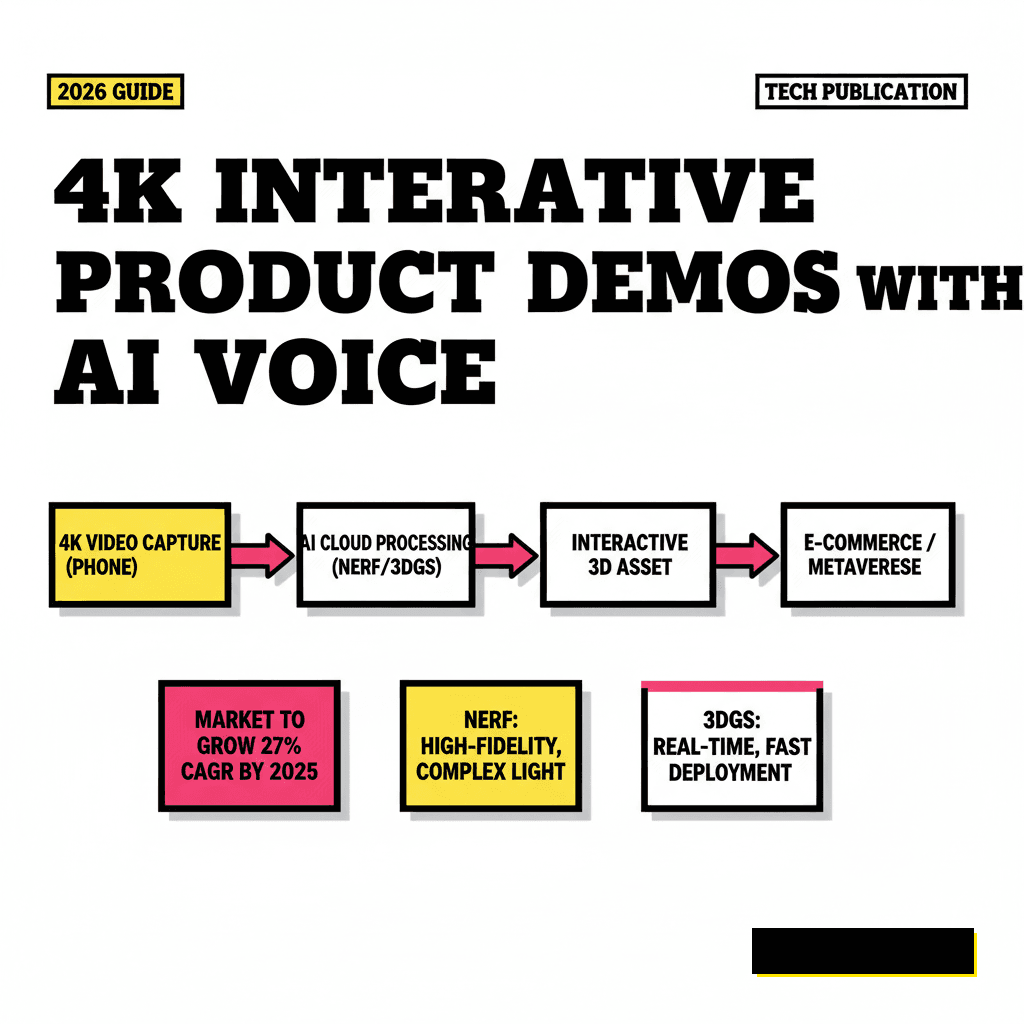

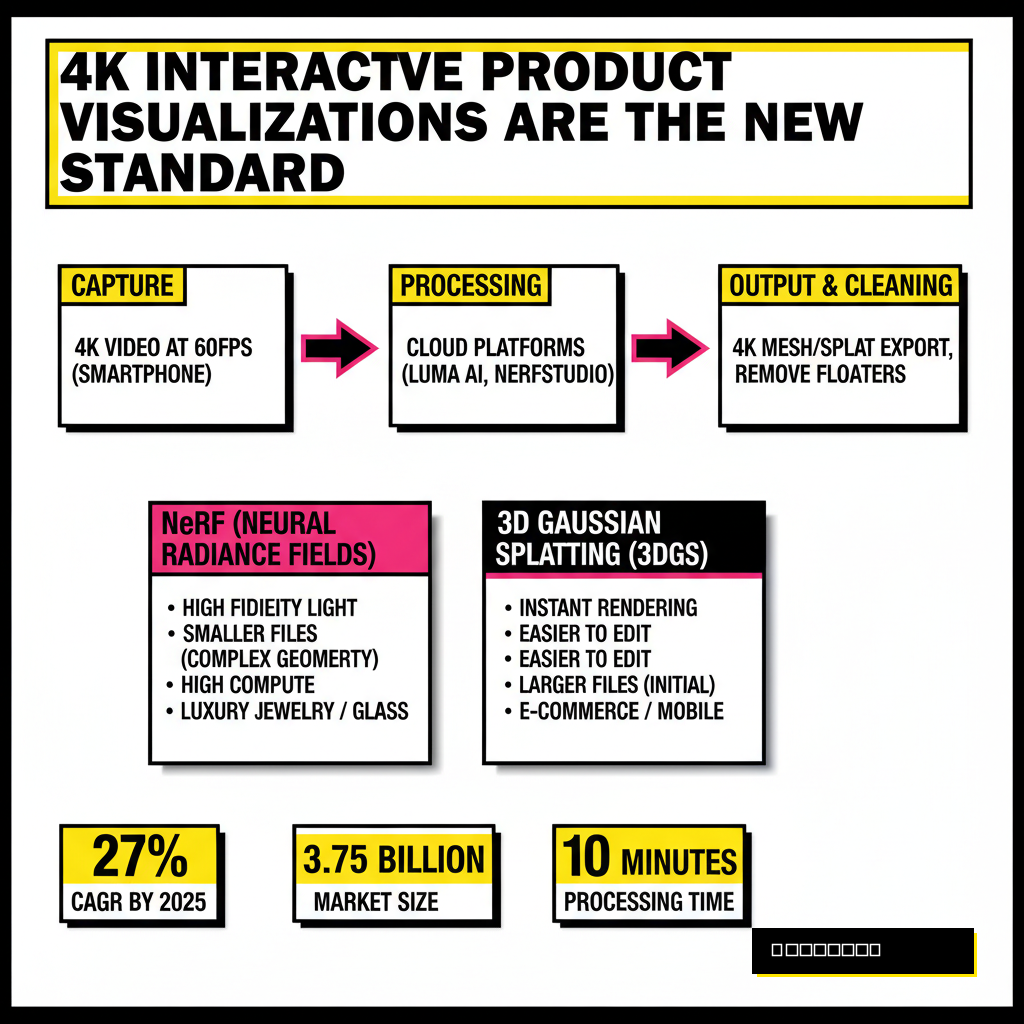

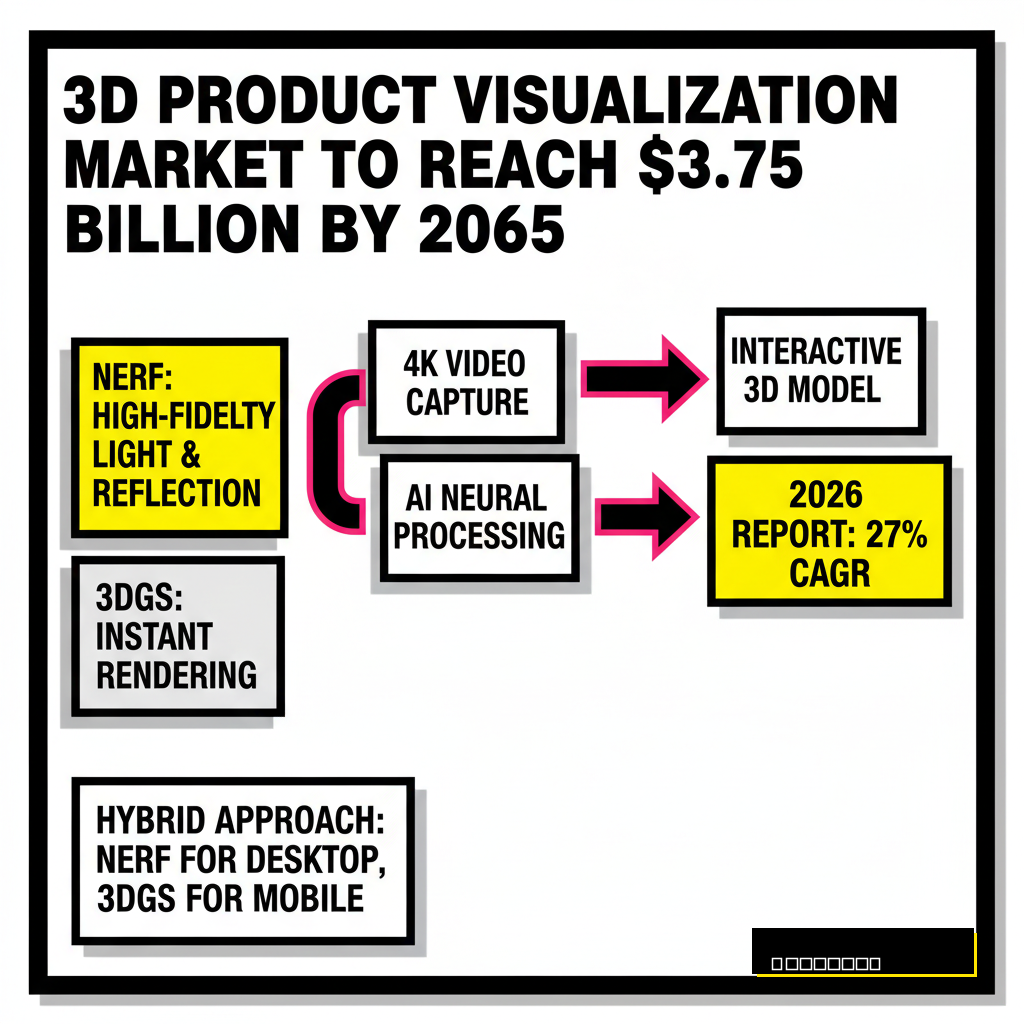

Static product photos feel like relics from a different era. Most creators now realize that high-end commerce requires more than just a gallery of 2D images. You need to provide an experience where customers can rotate, zoom, and inspect every stitch of a product in 4K resolution. Two main technologies dominate this space in 2026: Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS). While NeRF excels at capturing complex light reflections and transparent materials, Gaussian Splatting has become the go-to for real-time web performance. Choosing between them depends on whether you value absolute visual fidelity or frame-rate stability on mobile devices.

NeRF (Neural Radiance Fields)

- High-fidelity light and reflection handling

- Smaller file sizes for complex geometry

- Requires more compute power for real-time playback

- Best for high-end luxury jewelry and glass

3D Gaussian Splatting (3DGS)

- Real-time rendering on most modern hardware, including many phones

- Easier to edit, since the scene is a set of explicit Gaussians you can select, move, or delete

- Larger initial file downloads

- Best for rapid e-commerce deployment

I have found that the most successful creators often use a hybrid approach. They capture the raw data using a high-quality video pass and then decide on the export format based on the target platform. If you are targeting users on high-end desktop browsers, NeRF provides that extra layer of 'magic' in the lighting. For mobile-first social commerce, 3DGS ensures the experience never stutters. According to a 2026 report from Business Research Insights, the 3D product visualization market is projected to reach $3.75 billion by 2035, growing at a 27% CAGR. You are entering a market that is no longer niche; it is the new standard for digital retail.
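The hybrid rule of thumb above fits in a few lines. This is a minimal sketch: `TargetProfile`, its fields, and `chooseExportFormat` are hypothetical names, and how you detect a mobile browser or a discrete GPU is left to your own device-detection code.

```typescript
// Hypothetical output formats for the same captured scene.
type ExportFormat = "nerf" | "3dgs";

// Hypothetical description of the visitor's device; detecting these
// values (user-agent hints, GPU queries) is outside this sketch.
interface TargetProfile {
  isMobile: boolean;       // phone or tablet browser
  hasDiscreteGpu: boolean; // rough proxy for a high-end desktop
}

// Encodes the rule of thumb from the text: NeRF for high-end desktop
// browsers, 3DGS whenever frame-rate stability matters more.
function chooseExportFormat(target: TargetProfile): ExportFormat {
  if (target.isMobile) return "3dgs";       // mobile-first: never stutter
  if (target.hasDiscreteGpu) return "nerf"; // desktop: richer lighting
  return "3dgs";                            // safe default for the web
}

console.log(chooseExportFormat({ isMobile: true, hasDiscreteGpu: false })); // prints 3dgs
```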

The Step-by-Step 4K Reconstruction Pipeline

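Building a 4K demo starts with the capture phase. You no longer need a $50,000 camera rig. A standard smartphone that shoots 4K at 60fps is usually enough, provided your lighting is consistent. Avoid harsh shadows by using a softbox or shooting on an overcast day. Once you have your video, the heavy lifting moves to the GPU, either in the cloud with platforms like Luma AI or on your own machine with open-source frameworks like Nerfstudio. These tools first estimate where the camera was for each frame (Nerfstudio, for example, can use COLMAP for this step) and then reconstruct a spatial volume from the posed frames. For a NeRF, that means training a neural network to model how light behaves in your specific scene; for 3DGS, it means optimizing a cloud of small 3D Gaussians until they reproduce the captured views.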
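A 4K60 video contains far more frames than a reconstruction needs, and neighboring frames are nearly identical, so it is common to keep only an evenly spaced subset. The sketch below picks those frame indices under that assumption; `sampleFrameIndices` is a hypothetical helper, and the 300-frame target is an illustrative default, not a documented setting of Luma AI or Nerfstudio.

```typescript
// Return evenly spaced frame indices covering the whole clip.
// targetFrames = 300 is an assumption; tune it per product and tool.
function sampleFrameIndices(durationSec: number, fps: number, targetFrames = 300): number[] {
  const total = Math.floor(durationSec * fps);
  if (total <= 0 || targetFrames <= 0) return [];
  // Short clips: keep every frame.
  if (total <= targetFrames) return Array.from({ length: total }, (_, i) => i);
  // Fractional stride spreads the samples evenly over the orbit.
  const step = total / targetFrames;
  return Array.from({ length: targetFrames }, (_, i) => Math.floor(i * step));
}

// A one-minute orbit at 60fps has 3600 frames; keep every 12th.
const idx = sampleFrameIndices(60, 60);
console.log(idx.length, idx[0], idx[1], idx[idx.length - 1]); // prints 300 0 12 3588
```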

Processing times have dropped significantly over the last year: reconstructions that used to take hours of GPU time can now finish in under ten minutes on mid-range hardware. When training completes, you receive the scene as a file: trained network weights for a NeRF, or a file listing every Gaussian (commonly a .ply) for 3DGS. This is where you perform 'cleaning.' You must remove floaters, the semi-transparent blobs that hover in empty space, usually around the edges of a scan. Many creators use AI-powered browser tools to automate these repetitive cleanup tasks before moving to the integration phase. Clean data is the difference between a demo that looks like a professional scan and one that looks like a glitchy video game from 2010.
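For a 3DGS scene, one simple floater cleanup is a crop-and-opacity heuristic: discard Gaussians that sit outside a box drawn around the product, and those too faint to contribute. This is a sketch of that generic heuristic, not what any particular cleanup tool does. The `Splat` and `CropBox` shapes are hypothetical simplifications, and the thresholds are per-scan guesses. Note that the reference 3DGS export typically stores opacity before its sigmoid activation, hence the helper.

```typescript
// Hypothetical, simplified splat record. Real 3DGS exports also carry
// scale, rotation, and color (spherical-harmonic) fields.
interface Splat {
  x: number;
  y: number;
  z: number;
  opacity: number; // assumed already in 0..1 (apply sigmoid to a stored logit)
}

interface CropBox {
  min: [number, number, number];
  max: [number, number, number];
}

// Converts a stored opacity logit to the 0..1 range.
function sigmoid(v: number): number {
  return 1 / (1 + Math.exp(-v));
}

// Keep only splats inside the crop box that are at least minOpacity opaque.
function removeFloaters(splats: Splat[], box: CropBox, minOpacity = 0.05): Splat[] {
  return splats.filter((s) => {
    const inside =
      s.x >= box.min[0] && s.x <= box.max[0] &&
      s.y >= box.min[1] && s.y <= box.max[1] &&
      s.z >= box.min[2] && s.z <= box.max[2];
    return inside && s.opacity >= minOpacity;
  });
}

const box: CropBox = { min: [-1, -1, -1], max: [1, 1, 1] };
const scan: Splat[] = [
  { x: 0, y: 0, z: 0, opacity: 0.9 },             // part of the product
  { x: 2.5, y: 0.3, z: 0, opacity: 0.8 },         // floater outside the box
  { x: 0.2, y: 0.1, z: 0, opacity: sigmoid(-6) }, // faint haze, about 0.0025
];
console.log(removeFloaters(scan, box).length); // prints 1
```

A crop box handles floaters far from the object; faint haze close to the surface is what the opacity threshold targets, so both checks are combined.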

Scripting and Syncing AI Voiceovers

Adding a narrator transforms a cool visual into a professional sales tool. Instead of making users read text descriptions, let a high-quality AI voice guide them through the product features. In 2026, voice synthesis has reached a point where distinguishing between a human and a machine is nearly impossible. You can use platforms like ElevenLabs to generate a custom voice that matches your brand personality. Whether you want a confident tech expert or a friendly lifestyle coach, the emotional range is all there in the settings.

| Platform | Best For | Key Feature |
|---|---|---|
| ElevenLabs | Lifelike Narration | Emotional depth control |
| Play.ht | Consistent Branding | High-speed API access |
| Murf AI | Studio Quality | Built-in video syncing |

Synchronization is the secret sauce here. Don't just loop a single audio file; trigger the voiceover based on user interaction. For example, when a user zooms in on the camera lens of a smartphone demo, the AI narrator should explain the aperture settings. A simple state-management layer in your web code, one that tracks which part the user is focused on and which clip is playing, lets the audio follow the user's curiosity. This level of responsiveness makes the product feel premium. Experts at Cisco suggest that by 2026, systems will handle most of this complexity, allowing creators to focus on storytelling rather than technical plumbing.
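The state-management idea can be sketched as a small controller that remembers the focused part and guarantees at most one clip plays at a time. This is a minimal sketch under stated assumptions: `AudioPlayer`, `Hotspot`, `VoiceoverController`, and the clip URLs are all hypothetical, and in a browser you would back `AudioPlayer` with an `HTMLAudioElement` or the Web Audio API.

```typescript
// Hypothetical playback interface.
interface AudioPlayer {
  play(clipUrl: string): void;
  stop(): void;
}

// Hypothetical hotspot ids for a smartphone demo.
type Hotspot = "lens" | "battery" | "screen";

// Tracks the focused part and keeps at most one clip playing.
class VoiceoverController {
  private current: Hotspot | null = null;
  private player: AudioPlayer;
  private clips: Record<Hotspot, string>; // hotspot id -> clip URL

  constructor(player: AudioPlayer, clips: Record<Hotspot, string>) {
    this.player = player;
    this.clips = clips;
  }

  // Call from the 3D layer when the user zooms in on or clicks a part.
  onFocus(spot: Hotspot): void {
    if (spot === this.current) return; // already narrating this part
    this.player.stop();                 // never stack two clips
    this.player.play(this.clips[spot]);
    this.current = spot;
  }

  // Call when the user zooms back out to the full product.
  onBlur(): void {
    this.player.stop();
    this.current = null;
  }
}

// Usage with a logging stand-in for a real audio player.
const log: string[] = [];
const vo = new VoiceoverController(
  { play: (url) => { log.push(`play ${url}`); }, stop: () => { log.push("stop"); } },
  { lens: "/audio/lens.mp3", battery: "/audio/battery.mp3", screen: "/audio/screen.mp3" },
);
vo.onFocus("lens");
vo.onFocus("lens"); // ignored: same part
vo.onFocus("battery");
console.log(log.join(" | ")); // prints stop | play /audio/lens.mp3 | stop | play /audio/battery.mp3
```

Keeping the narration logic out of the rendering code means the same controller works whether the model underneath is a NeRF or a splat scene.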

Building the Interactive Web Layer

Once you have your 3D assets and your audio, you need a stage. WebGL, usually through a library like Three.js or a design tool like Spline, lets you host these demos directly in the browser. Avoid requiring users to download a separate app; friction is the enemy of conversion. By embedding the 4K NeRF model in a standard web page, you ensure that anyone with a link can experience it. This is particularly effective for agentic SEO strategies, where AI search engines prioritize rich, interactive content over flat text.

Interactive hotspots are your best friend. These are small, clickable areas on the 3D model that trigger specific actions. I like to use them for 'exploding' views where the product parts separate to show internal components. When a user clicks a hotspot, the AI voiceover should provide context. This creates a self-guided tour of the product. Many creators are now using NVIDIA's latest research into real-time neural rendering to keep these interactions fluid. According to NVIDIA Research, reducing 'floaters' in radiance fields has become significantly easier with new fine-tuning-free diffusion models released early this year. This means your 4K demos will look cleaner with less manual effort.
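An exploded view is mostly vector arithmetic: each part slides away from the product's centroid along the line joining the two. The sketch below assumes the product has already been segmented into separate parts (meshes or groups of splats) with known centers; `Part`, `explodedPositions`, and the `spread` tuning value are hypothetical names.

```typescript
type Vec3 = [number, number, number];

// Hypothetical part record: a separately segmented piece of the product.
interface Part {
  name: string;
  restCenter: Vec3; // center of the part in the assembled product
}

// Move each part away from the centroid by t * spread scene units.
// t = 0 is assembled, t = 1 is fully exploded (clamped to that range).
function explodedPositions(parts: Part[], t: number, spread = 0.5): Vec3[] {
  const s = Math.min(Math.max(t, 0), 1);
  // Centroid of all part centers.
  const c: Vec3 = [0, 0, 0];
  for (const p of parts) {
    for (let i = 0; i < 3; i++) c[i] += p.restCenter[i] / parts.length;
  }
  return parts.map((p): Vec3 => {
    const d: Vec3 = [p.restCenter[0] - c[0], p.restCenter[1] - c[1], p.restCenter[2] - c[2]];
    const len = Math.hypot(d[0], d[1], d[2]);
    // Scale the unit direction; a part sitting on the centroid stays put.
    const k = len === 0 ? 0 : (s * spread) / len;
    return [p.restCenter[0] + d[0] * k, p.restCenter[1] + d[1] * k, p.restCenter[2] + d[2] * k];
  });
}

const demo: Part[] = [
  { name: "lens module", restCenter: [1, 0, 0] },
  { name: "battery", restCenter: [-1, 0, 0] },
];
console.log(explodedPositions(demo, 0.5)); // halfway through the animation
```

Animating `t` from 0 to 1 when a hotspot is clicked gives the separation effect, and the same click can start the matching voiceover clip.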

Performance Benchmarks for 2026 Commerce

Interactive 3D is only effective if it loads quickly. No one will wait thirty seconds for a product demo to initialize, so you must optimize your assets for the web. That means compressing the neural weights of your NeRF model or using Level of Detail (LOD) techniques for Gaussian Splats. By serving a lower-resolution version of the model during the initial load and then 'up-rezzing' to 4K once the user interacts, you keep the perceived load time short. Fast, responsive loading is critical for modern e-commerce success.
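The progressive strategy can be sketched as two decisions: which level to fetch first, given a measured bandwidth and a load-time budget, and which level to fetch once the user interacts. `LodLevel`, `pickInitialLod`, `upgradeTarget`, and the file sizes below are hypothetical and illustrative only; measure your own exports.

```typescript
// Hypothetical LOD manifest entry: one downloadable version of the model.
interface LodLevel {
  label: string; // e.g. "preview", "medium", "4k"
  bytes: number; // download size of this level
}

// Richest level expected to download within budgetMs at the measured
// bandwidth; falls back to the smallest level so something always shows.
function pickInitialLod(levels: LodLevel[], bytesPerSec: number, budgetMs: number): LodLevel {
  if (levels.length === 0) throw new Error("no LOD levels provided");
  const sorted = [...levels].sort((a, b) => a.bytes - b.bytes);
  let choice = sorted[0];
  for (const level of sorted) {
    const etaMs = (level.bytes / bytesPerSec) * 1000;
    if (etaMs <= budgetMs) choice = level;
  }
  return choice;
}

// After the first interaction, request the largest (4K) level.
function upgradeTarget(levels: LodLevel[]): LodLevel {
  return levels.reduce((a, b) => (b.bytes > a.bytes ? b : a));
}

// Illustrative sizes only.
const levels: LodLevel[] = [
  { label: "preview", bytes: 2_000_000 },
  { label: "medium", bytes: 12_000_000 },
  { label: "4k", bytes: 60_000_000 },
];
console.log(pickInitialLod(levels, 5_000_000, 3000).label); // prints medium
console.log(upgradeTarget(levels).label); // prints 4k
```

At 5 MB/s the 60 MB level would take about 12 seconds, so the sketch starts with the 12 MB level (about 2.4 seconds) and defers the 4K download until the user engages.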

Data from Neuralens AI suggests that products with complete and well-structured attributes, including interactive 3D, see significantly higher click-through rates. If you are a creator, you should be tracking these metrics. Keep an eye on your 'Time to Interactive' (TTI) and your bounce rates. If users are leaving before the model loads, your assets are too heavy. Use modern compression algorithms like Draco for meshes or specialized splat compressors to keep your file sizes manageable without sacrificing that 4K clarity. The goal is to make the experience feel native to the web, not like an experimental plugin.
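Splat compressors combine several tricks; one of the simplest is uniform quantization, which stores each coordinate as a 16-bit integer inside the scene's bounding box instead of a 32-bit float. The sketch below shows that generic technique for splat centers only. It is not the format of any specific compressor, and positions are a small share of each splat: the spherical-harmonic color coefficients usually dominate, so real tools quantize or prune those too.

```typescript
// Quantize packed x, y, z positions to 16-bit integers within [min, max].
function quantizePositions(pos: Float32Array, min: number[], max: number[]): Uint16Array {
  const out = new Uint16Array(pos.length);
  for (let i = 0; i < pos.length; i++) {
    const axis = i % 3; // positions are packed x, y, z, x, y, z, ...
    const range = max[axis] - min[axis] || 1; // guard against a flat axis
    const t = Math.min(Math.max((pos[i] - min[axis]) / range, 0), 1);
    out[i] = Math.round(t * 65535);
  }
  return out;
}

// Inverse mapping; error is at most half a step, range / (2 * 65535).
function dequantizePositions(q: Uint16Array, min: number[], max: number[]): Float32Array {
  const out = new Float32Array(q.length);
  for (let i = 0; i < q.length; i++) {
    const axis = i % 3;
    out[i] = min[axis] + (q[i] / 65535) * (max[axis] - min[axis]);
  }
  return out;
}

// Two splat centers in a 1 m box: 24 bytes as float32 become 12 bytes.
const centers = new Float32Array([0.1, 0.2, 0.3, 0.9, 0.5, 0.25]);
const q = quantizePositions(centers, [0, 0, 0], [1, 1, 1]);
console.log(centers.byteLength, q.byteLength); // prints 24 12
```

For a 1 m bounding box, half a quantization step is about 7.6 micrometers, far below what a viewer can see, which is why halving position storage costs no visible clarity.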

The Future of Spatial Product Storytelling

We are moving toward a world where shopping is predictive and contextual. Dani Nadel, President of Feedvisor, noted that shopping in 2026 will turn into an 'ask and act' experience rather than 'search and scroll.' Your 4K interactive demos will eventually be served by AI agents that understand exactly what a customer needs to see. Imagine an agent that says, 'I see you're worried about the size of this couch; let's look at it in 3D inside a virtual living room.' This is why building high-quality spatial assets now is so important. You are creating the library of data that these future agents will use to sell your products.

Don't be intimidated by the technical requirements. The tools are getting easier to use every month. Start by capturing a simple object and processing it through a cloud NeRF service. Add a basic voiceover and see how it changes the feel of the presentation. You will quickly realize that the emotional impact of a 3D experience is far greater than any 2D video could ever be. As we look ahead, the integration of these demos into social platforms and decentralized marketplaces will only accelerate. The creators who master these spatial tools today will be the ones leading the digital economy tomorrow.