The August 2nd Deadline: Why 2026 is the Year of Reckoning

The clock is no longer ticking. It is ringing. As of today, April 25, 2026, we are less than four months away from the full enforcement of the EU AI Act's Annex III requirements. For any business operating high-risk AI systems in the European market, August 2, 2026, represents a point of no return. This isn't just another bureaucratic hurdle. It is a fundamental shift in how enterprise software is built, deployed, and defended. Organizations that ignored the warnings in 2024 are now finding themselves in a frantic scramble for compliance talent that simply does not exist in sufficient numbers.

A January 2026 report from Boston Consulting Group indicates that companies expect to spend approximately 1.7% of their total revenue on AI initiatives in 2026, more than double the 0.8% of 2025. A significant portion of that capital is flowing directly into governance. Most companies are realizing that throwing human lawyers at the problem is a recipe for bankruptcy. The complexity of modern neural networks makes manual auditing nearly impossible at scale. You cannot ask a human legal team to verify the training data lineage of a model with billions of parameters. It is like asking a librarian to check every grain of sand on a beach for a specific microscopic engraving.

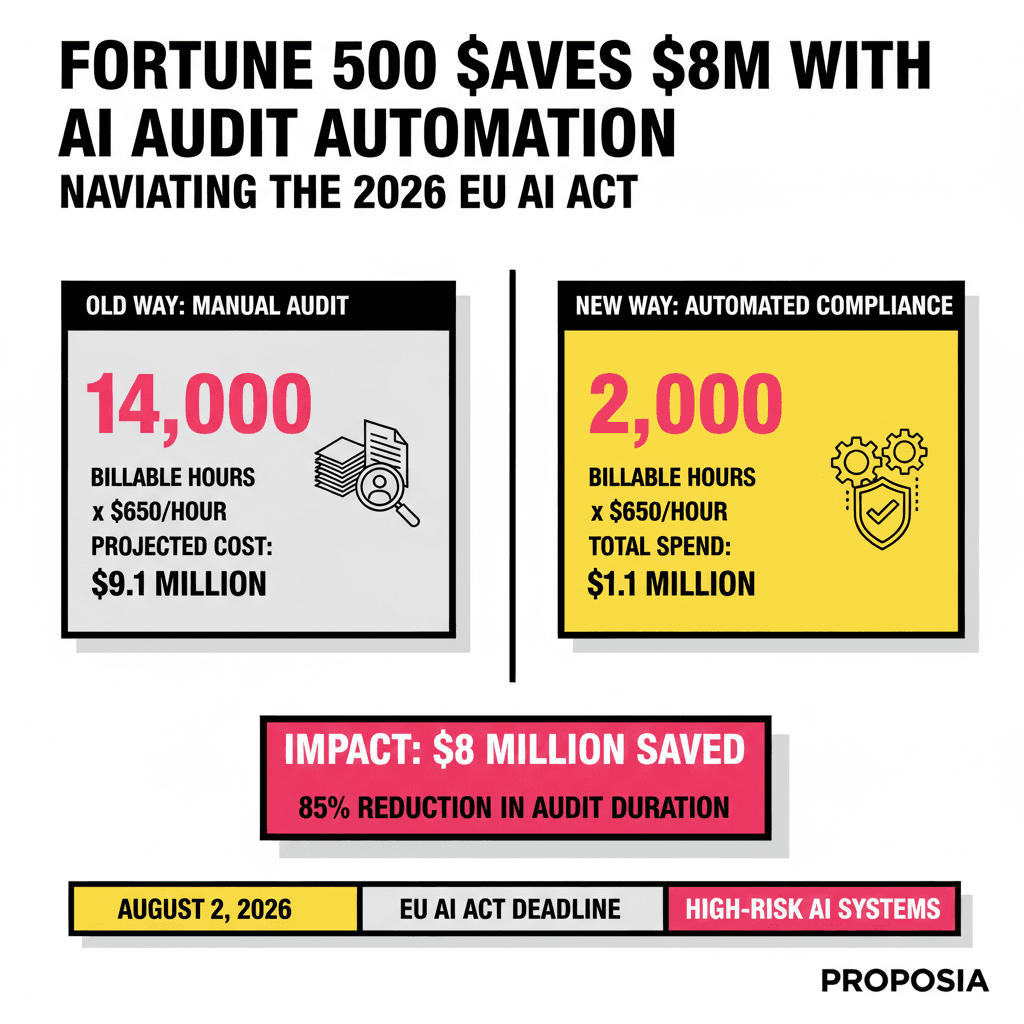

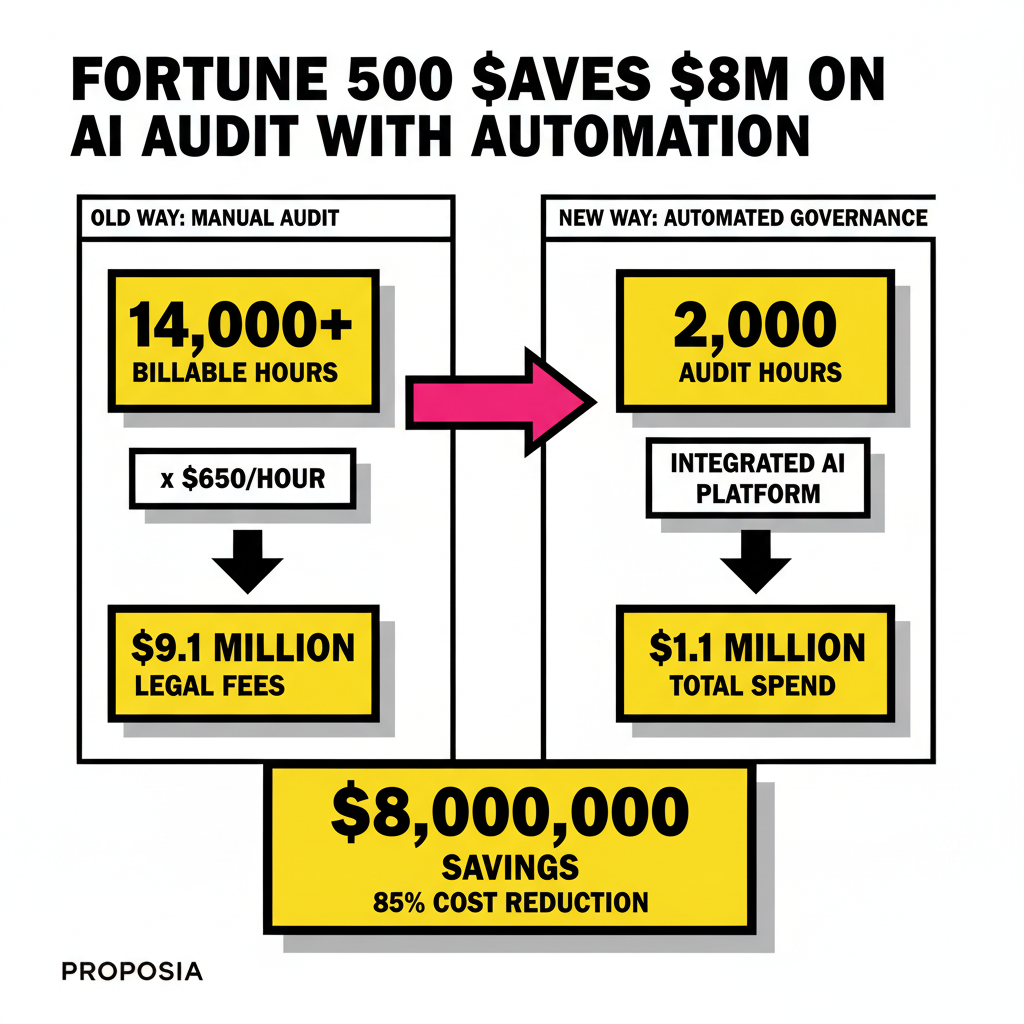

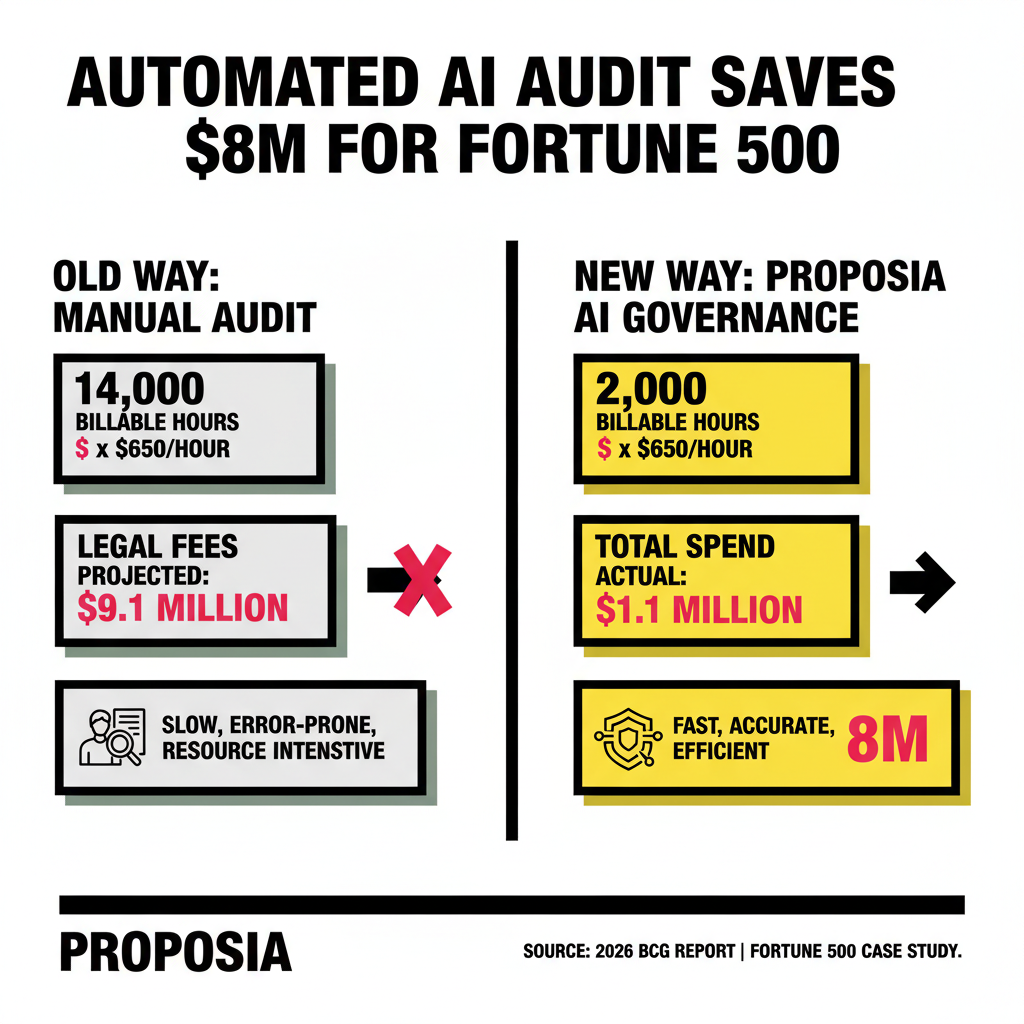

The $8 Million Case Study: Automation vs. The Billable Hour

Last year, a Fortune 500 financial services firm faced a crisis. Their proprietary credit-scoring model, which processes millions of applications annually, was classified as a high-risk system under the new regulatory framework. Initial estimates from their outside counsel suggested that a full manual audit would require over 14,000 billable hours. At an average rate of $650 per hour, the legal bill alone was projected to hit $9.1 million. This figure did not even include the internal operational costs of pulling engineers away from product development to hunt for documentation.

The leadership team chose a different path. Instead of hiring a small army of lawyers, they deployed an automated AI governance layer. This system integrated directly with their development pipelines, scanning every model update for bias, hallucination risks, and data privacy violations in real time. By the time the formal audit arrived, the platform had already generated 98% of the required technical documentation. The final legal review took less than 2,000 hours. The total spend, including the software license and specialized consulting, came in under $1.1 million. Against the $9.1 million projection, they saved roughly $8 million while achieving a level of transparency that manual reviews could never match.
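The arithmetic behind the headline number is simple enough to check by hand. Here is a minimal sketch using only the figures quoted above. The variable and function names are illustrative, and the $1.1 million total is taken as reported rather than recomputed from hours.

```python
# Figures quoted in the case study; all names here are illustrative.
MANUAL_AUDIT_HOURS = 14_000
HOURLY_RATE_USD = 650
AUTOMATED_TOTAL_SPEND_USD = 1_100_000  # reported upper bound, taken as given


def projected_manual_cost(hours: int, rate_usd: int) -> int:
    """Projected legal bill for a fully manual audit."""
    return hours * rate_usd


manual_cost = projected_manual_cost(MANUAL_AUDIT_HOURS, HOURLY_RATE_USD)
savings = manual_cost - AUTOMATED_TOTAL_SPEND_USD

print(f"Projected manual audit: ${manual_cost:,}")
print(f"Savings vs. automated path: ${savings:,}")
```

Because the automated spend is an upper bound ("under $1.1 million"), the savings figure is best read as a floor.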

The Architecture of Compliance: Moving Beyond Checklists

Effective AI governance in 2026 requires more than a simple spreadsheet of risks. Modern systems use retrieval-augmented generation (RAG 2.0) and knowledge graphs to maintain a living map of how data flows through an organization. This deep contextual intelligence allows the compliance system to understand not just what a model does, but why it made a specific decision. When a regulator asks for the reasoning behind a rejected loan application, the system can point to the exact node in the knowledge graph that triggered the flag. This level of traceability is the new gold standard for high-risk deployments.
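To make the traceability idea concrete, here is a minimal sketch of a lineage lookup. It assumes the knowledge graph can be reduced to a mapping from each node to the nodes it was derived from. The node names, the `LINEAGE` dictionary, and the `trace` helper are all hypothetical and do not represent any particular product's API.

```python
from collections import deque

# Hypothetical lineage graph: each key points to the nodes it was derived from.
LINEAGE = {
    "decision:loan-1042-rejected": ["rule:debt_to_income_over_0.45"],
    "rule:debt_to_income_over_0.45": ["feature:debt_to_income"],
    "feature:debt_to_income": ["dataset:bureau_2025q4", "dataset:application_form"],
    "dataset:bureau_2025q4": [],
    "dataset:application_form": [],
}


def trace(graph: dict, start: str) -> list:
    """Return every node reachable from `start`, in breadth-first order."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        order.append(node)
        for parent in graph.get(node, []):
            if parent not in seen:  # guard against cycles and shared ancestors
                seen.add(parent)
                queue.append(parent)
    return order


for step in trace(LINEAGE, "decision:loan-1042-rejected"):
    print(step)
```

A production graph would live in a dedicated store and carry timestamps and model versions on every edge, but the question a regulator asks maps onto the same walk: start at the decision and follow the edges back to the data.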

Traditional GRC (Governance, Risk, and Compliance) tools are failing because they treat AI like a static asset. AI is dynamic. It learns, it drifts, and it decays. A static audit is obsolete the moment the model receives a new batch of fine-tuning data. Automated platforms solve this by implementing continuous monitoring. Every inference is checked against a set of guardrails. If the model starts to show signs of bias or begins to hallucinate sensitive information, the system can automatically throttle the output or alert a human supervisor. This proactive stance is what separates the market leaders from the companies exposed to fines of up to €35 million.
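As a rough illustration of per-inference guardrails, the sketch below checks each output for one kind of sensitive pattern and tracks approval rates per applicant group. Everything in it is an assumption made for the example: the `GuardrailMonitor` class, the US Social Security number regex, and the 0.2 approval-gap tolerance are placeholders, not recommended settings.

```python
import re
from dataclasses import dataclass, field

# Illustrative sensitive-data pattern (US SSN format); real systems check many more.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class GuardrailMonitor:
    max_approval_gap: float = 0.2  # hypothetical tolerance between groups
    outcomes: dict = field(default_factory=dict)  # group -> list of 0/1 approvals

    def check(self, group: str, approved: bool, output_text: str) -> str:
        """Return 'throttle', 'alert', or 'allow' for a single inference."""
        # Guardrail 1: never release output containing sensitive data.
        if SSN_PATTERN.search(output_text):
            return "throttle"
        # Guardrail 2: flag a widening gap in approval rates across groups.
        # A production system would also require a minimum sample size per group.
        self.outcomes.setdefault(group, []).append(int(approved))
        rates = [sum(v) / len(v) for v in self.outcomes.values()]
        if len(rates) > 1 and max(rates) - min(rates) > self.max_approval_gap:
            return "alert"
        return "allow"


monitor = GuardrailMonitor()
print(monitor.check("group_a", True, "Application approved."))
print(monitor.check("group_b", False, "Application declined."))
print(monitor.check("group_a", True, "Applicant SSN 123-45-6789 verified."))
```

The point of the design is that the check runs inline with every inference, so a drifting model is caught on the request that crosses the threshold rather than at the next scheduled audit.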

Why Manual Audits are a Financial Liability

Relying on manual processes for AI compliance is no longer just inefficient. It is a fiduciary risk. The sheer volume of regulatory updates is overwhelming. In 2025 alone, financial services saw 157 major AI-related regulatory updates globally. No human legal team can stay current with that pace of change while also performing deep technical audits. Automated systems, however, update their rulebooks instantly. They act as an always-on shield, ensuring that even as laws shift in California, Brussels, or Singapore, the underlying technology remains within the lines.

Consider the cost of a compliance failure. Under the EU AI Act, penalties for the most serious violations can reach €35 million or 7% of global annual turnover, whichever is higher, and breaches of high-risk system obligations can reach €15 million or 3%. For a Fortune 500 company, that exposure can run from hundreds of millions into billions of dollars. When you weigh the cost of an automated governance platform against a penalty that could erase a year's profit, the ROI becomes undeniable. We are seeing a massive shift in how CFOs view these tools. They are no longer seen as an IT expense. They are being treated as essential insurance policies for the digital age.
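For a sense of scale, the Act's penalty ceilings for large companies are the higher of a fixed amount and a share of worldwide annual turnover: €35 million or 7% for prohibited practices, and €15 million or 3% for breaches of high-risk obligations. The sketch below applies that rule. The €10 billion turnover and the function name are hypothetical, and different rules apply to SMEs.

```python
# Penalty tiers as (fixed cap in EUR, percent of worldwide annual turnover).
TOP_TIER = (35_000_000, 7)        # prohibited practices
HIGH_RISK_TIER = (15_000_000, 3)  # breaches of high-risk system obligations


def penalty_ceiling_eur(turnover_eur: int, fixed_cap_eur: int, turnover_pct: int) -> int:
    """Maximum fine for a large company: whichever of the two amounts is higher."""
    return max(fixed_cap_eur, turnover_eur * turnover_pct // 100)


turnover = 10_000_000_000  # hypothetical EUR 10 billion annual turnover

print(f"Top tier ceiling:  EUR {penalty_ceiling_eur(turnover, *TOP_TIER):,}")
print(f"High-risk ceiling: EUR {penalty_ceiling_eur(turnover, *HIGH_RISK_TIER):,}")
```

These are ceilings, not expected fines. Regulators set the actual amount case by case.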

| Compliance Factor | Manual Audit | Automated Governance |
|---|---|---|
| Average Prep Time | 6-9 Months | Continuous / Real-time |
| Legal Fee Burden | High ($500k - $10M+) | Low ($50k - $250k) |
| Error Rate | High (Sampling only) | Near Zero (100% Coverage) |

The Proposia Advantage: Future-Proofing AI Governance

The transition from experimental AI to mission-critical AI requires a change in mindset. You cannot treat high-risk models like the internal chatbots of 2023. These systems are now the backbone of your business operations. Whether you are using AI coding tools like Devin or Cursor to build these systems or buying off-the-shelf solutions, the responsibility for their behavior lies with you. The regulators will not accept "the AI was a black box" as a valid defense in a courtroom.

Proposia provides the visibility required to turn that black box into a glass one. Our platform doesn't just check boxes. It builds a defensive moat around your intellectual property. By automating the evidence collection and risk mitigation process, we allow your teams to focus on innovation rather than litigation. The companies that will win in 2026 and beyond are those that view compliance not as a chore, but as a competitive advantage. If you can prove your AI is safer, more ethical, and more reliable than your competitor's, you won't just avoid fines. You will win the market.

"Effective AI governance is the difference between a company that scales and a company that ends up as a cautionary tale in a law school textbook."

The August deadline is approaching fast. Every day spent on manual documentation is a day lost to your competition. The $8 million saved by our Fortune 500 partner wasn't just a win for their balance sheet. It was a victory for their future. They proved that with the right technology, you can move fast and stay safe. It is time for the rest of the business world to follow suit.

Sourcing Log

- Statistic: Global spending on AI governance and compliance projected to reach $2.54 billion in 2026 - SQ Magazine (April 2026)

- Fact: EU AI Act Annex III high-risk compliance deadline is August 2, 2026 - Legal Nodes (April 2026)

- Statistic: Companies expect to spend 1.7% of revenue on AI in 2026, up from 0.8% in 2025 - CFO.com / Boston Consulting Group (January 2026)

- Statistic: IBM reports companies using AI for compliance see up to 30% cost savings in audits - NanoMatrix Secure (May 2025)

- Statistic: Legal fees represent ~30% of total AI compliance budgets for large enterprises - SQ Magazine (April 2026)