The Countdown to June 2026: From Innovation to Enforcement

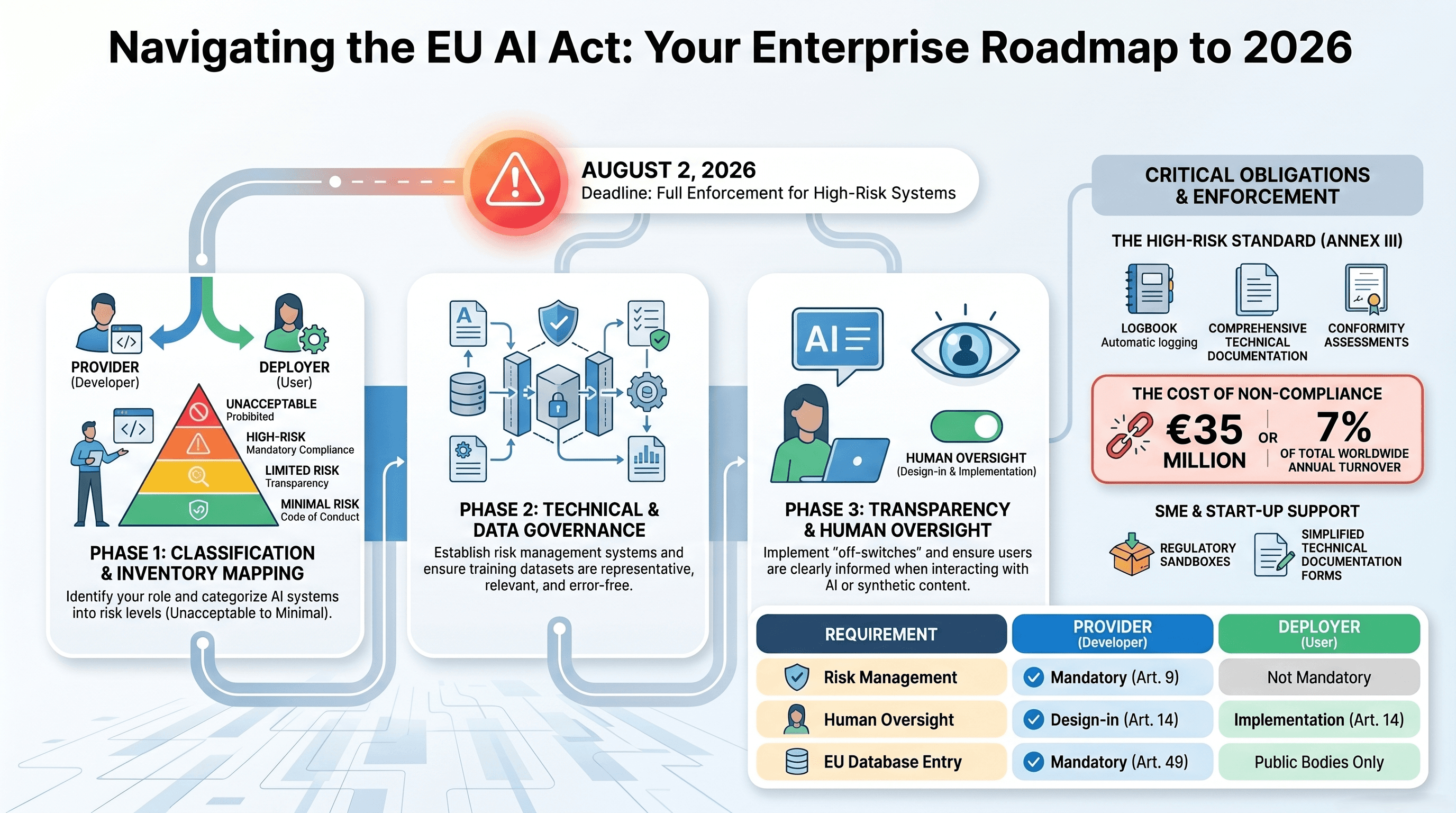

As of April 19, 2026, the European AI ecosystem stands at a critical juncture. The grace period for the European Union Artificial Intelligence Act (EU AI Act) is rapidly closing. While the prohibitions on 'unacceptable risk' systems and the transparency mandates for General-Purpose AI (GPAI) models are already in full effect, the June 2026 deadline marks the final implementation phase for High-Risk AI systems. For startups, this is no longer a legal abstraction—it is a technical bottleneck that defines market access.

The EU AI Office, now fully staffed and operational in Brussels, has begun issuing its first round of inquiries. We are seeing a shift from the 'Move Fast and Break Things' era toward a 'Build Fast and Prove Safety' paradigm. For developers and CTOs, this transition involves more than just updating Terms of Service; it requires a fundamental re-engineering of the machine learning lifecycle (MLLC). If your system falls under the high-risk categories defined in Annex III—spanning critical infrastructure, education, employment, and law enforcement—your window for technical alignment is effectively down to eight weeks.

Classifying the Stack: Are You High-Risk?

Before diving into the engineering requirements, startups must conduct a rigorous classification audit. The EU AI Act does not regulate the technology in a vacuum; it regulates its application and context. Under the finalized 2026 guidelines, high-risk systems generally fall into two categories:

- Safety Components: AI used as a safety component of products already covered by EU harmonized legislation (e.g., medical devices, automotive, aviation).

- Specific Use Cases (Annex III): Systems used in biometrics, critical infrastructure management, educational admissions, recruitment (HR-tech), credit scoring, and predictive policing.

If your startup is building a specialized LLM for automated resume screening or a computer vision model for industrial safety, you are likely in the crosshairs.
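
A first-pass triage of the two categories above can be encoded as a small lookup. The sketch below is only an illustration: the area tags, the `UseCase` fields, and the `triage` function are hypothetical names, not the Act's legal wording, and a match is a prompt for legal review rather than a determination.

```python
from dataclasses import dataclass

# Illustrative tags based on the use cases listed above (assumption:
# these are internal labels, not the legal text of Annex III).
ANNEX_III_AREAS = {
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "credit_scoring",
    "law_enforcement",
}


@dataclass
class UseCase:
    name: str
    area: str  # hypothetical internal tag for the application domain
    is_safety_component: bool = False  # part of a product under EU harmonized legislation


def triage(use_case: UseCase) -> str:
    """Return a coarse first-pass label for a use case."""
    if use_case.is_safety_component:
        return "likely high-risk (safety component)"
    if use_case.area in ANNEX_III_AREAS:
        return "likely high-risk (Annex III)"
    return "not obviously high-risk; document the reasoning"


print(triage(UseCase("resume screener", "employment")))
print(triage(UseCase("weld inspection camera", "manufacturing", is_safety_component=True)))
```

Keeping the "not obviously high-risk" branch explicit is deliberate: the reasoning behind a negative classification is worth recording alongside the positive ones.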

"The June 2026 deadline is the 'GDPR moment' for AI. Startups that haven't integrated automated logging and bias mitigation into their CI/CD pipelines by now are essentially building products they cannot legally sell in the Single Market." — Dr. Elena Vance, Lead Auditor at Proposia Intelligence.

The Technical Debt of Compliance: Article 10 and Data Governance

Article 10 of the AI Act is perhaps the most demanding for data scientists. It requires that the training, validation, and testing data for high-risk systems be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete in view of the intended purpose. In a world of noisy web-scraped data, this is a high bar.

Implementing Bias Detection and Mitigation

By June 2026, manual spot-checks for bias are insufficient. The Act does not name specific tools, but startups are increasingly expected to run automated checks, for example with frameworks like Fairlearn or AIF360, integrated directly into their training loops. You must be able to demonstrate 'disparate impact' analysis across protected characteristics. In practice, this often means moving from standard loss functions to constrained optimization techniques that penalize biased outcomes without sacrificing significant model utility.
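
To make 'disparate impact' concrete, here is a minimal dependency-free sketch of the ratio a CI gate might check. The function names, the toy data, and the 0.8 threshold (the "four-fifths" rule of thumb) are assumptions for illustration; the Act does not prescribe a threshold, and a library such as Fairlearn or AIF360 would normally replace this hand-rolled version.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Share of positive predictions (1) within each group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(y_pred, groups):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(y_pred, groups)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives positives, so there is no disparity to measure
    return min(rates.values()) / highest


# Toy predictions for two groups, "a" and "b" (hypothetical data).
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

THRESHOLD = 0.8  # assumed gate value, not taken from the Act
ratio = disparate_impact_ratio(y_pred, groups)
print(f"ratio={ratio:.2f}, passes={ratio >= THRESHOLD}")
```

Here group "a" is selected 75% of the time and group "b" 25%, so the ratio is one third and the assumed gate would fail the build.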

Data Provenance and Lineage

The Act requires comprehensive documentation of data sources and preparation processes. Tools like DVC (Data Version Control) and MLflow are no longer optional 'nice-to-haves.' To meet the June deadline, your technical documentation must provide a clear lineage from raw data ingestion to the final weights. This includes documenting labeling instructions, data cleaning heuristics, and the rationale behind specific feature engineering choices.
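
As a rough illustration of what a lineage entry could capture, the sketch below hashes a dataset before and after cleaning and stores the cleaning steps next to the hashes. The record fields, the `lineage_record` helper, and the labeling-guide name are hypothetical; tools like DVC or MLflow track this kind of information for you, and this only shows the underlying idea.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_bytes(data: bytes) -> str:
    """Content hash that pins the exact bytes of a dataset snapshot."""
    return hashlib.sha256(data).hexdigest()


def lineage_record(raw: bytes, cleaned: bytes, steps: list, labeling_guide: str) -> dict:
    """Build one lineage entry linking raw data to its cleaned form."""
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "raw_sha256": sha256_bytes(raw),
        "cleaned_sha256": sha256_bytes(cleaned),
        "cleaning_steps": steps,  # human-readable rationale for each heuristic
        "labeling_guide": labeling_guide,
    }


# Tiny in-memory CSV snapshots stand in for real files (hypothetical data).
raw = b"id,age\n1,34\n1,34\n2,-5\n"
cleaned = b"id,age\n1,34\n"

record = lineage_record(
    raw,
    cleaned,
    ["drop exact duplicate rows", "drop rows with negative age"],
    "labeling-guide-v1 (hypothetical)",
)
print(json.dumps(record, indent=2))
```

Storing the same hashes with the trained weights is one way to tie a specific model artifact back to the data it was trained on.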

The Transparency Mandate: Technical Documentation and Annex IV

Article 11 and Annex IV outline the requirements for technical documentation. If an EU regulator requests your technical file, a simple README.md will not be enough. Your documentation must include:

- A detailed description of the architecture: This includes the model's design specifications, its computational requirements, and the logic of its algorithms.

- Performance Benchmarks: You must move beyond simple accuracy metrics. Regulators are looking for robustness scores, F1-scores across different demographic slices, and performance under adversarial stress tests.

- Risk Assessment: A living document detailing the 'foreseeable' risks and the technical mitigations implemented.
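
The per-slice benchmark item in the list above can be sketched in a few lines. This is a minimal illustration with toy labels and hypothetical slice names; in a real pipeline a metrics library would compute the scores, and the slices would come from your own evaluation metadata.

```python
def f1_score(y_true, y_pred):
    """Binary F1 for labels in {0, 1}; returns 0.0 when undefined."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def f1_by_slice(y_true, y_pred, slices):
    """Compute F1 separately for each slice label."""
    grouped = {}
    for t, p, s in zip(y_true, y_pred, slices):
        trues, preds = grouped.setdefault(s, ([], []))
        trues.append(t)
        preds.append(p)
    return {s: f1_score(t, p) for s, (t, p) in grouped.items()}


# Toy evaluation set with two hypothetical demographic slices.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
slices = ["x", "x", "x", "x", "y", "y", "y", "y"]

scores = f1_by_slice(y_true, y_pred, slices)
print(scores)  # {'x': 1.0, 'y': 0.5}
```

The aggregate F1 on this toy set looks respectable, while slice "y" scores half of slice "x". That gap is exactly what a single headline metric hides.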

Startups like Mistral AI and Aleph Alpha have set the standard here by publishing extensive transparency reports that go beyond what is required for non-EU competitors. Following their lead, your engineering team should adopt Model Cards for every deployment, providing a standardized summary of the model's capabilities and limitations.
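
One lightweight way to keep a Model Card next to each deployment is to generate it from structured metadata. The sketch below is an assumption about what such a card might contain: the `ModelCard` fields, the model name, and the metric values are all hypothetical, and published model card templates are considerably richer.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    name: str
    version: str
    intended_use: str
    limitations: list = field(default_factory=list)
    metrics: dict = field(default_factory=dict)

    def to_markdown(self) -> str:
        """Render the card as a short Markdown document."""
        lines = [
            f"# {self.name} (v{self.version})",
            "",
            "## Intended use",
            self.intended_use,
            "",
            "## Limitations",
        ]
        lines += [f"- {item}" for item in self.limitations]
        lines += ["", "## Metrics"]
        lines += [f"- {key}: {value:.3f}" for key, value in sorted(self.metrics.items())]
        return "\n".join(lines)


# Hypothetical model and numbers, for illustration only.
card = ModelCard(
    name="resume-ranker",
    version="1.2.0",
    intended_use="Rank applications for human review; not for automatic rejection.",
    limitations=["Evaluated on English-language resumes only."],
    metrics={"f1_overall": 0.87, "f1_min_slice": 0.81},
)
print(card.to_markdown())
```

Generating the card in the release pipeline, rather than editing it by hand, keeps the published numbers tied to the evaluation run that produced them.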

Engineering for Human Oversight: Article 14

One of the most distinctive aspects of the EU AI Act is the requirement for Human Oversight (Article 14). This is not just a UI/UX concern; it is a backend requirement. The system must be designed so that human users can understand the output and, if necessary, override it.

Explainability (XAI) as a Requirement

For high-risk systems, 'Black Box' models are a liability. The Act does not prescribe a specific method, but techniques such as SHAP (SHapley Additive exPlanations) or LIME have become a practical baseline for providing local and global explainability. If an AI-driven credit scoring system denies a loan, the developer must ensure the system can output the specific features that led to that decision in a human-readable format. This adds an interpretability layer that must itself be latency-optimized for production environments.
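
For the credit-scoring example, the simplest case is a linear scoring model, where each feature's contribution relative to a baseline applicant is just its weight times its deviation from the baseline. Shapley-value methods reduce to the same quantity for linear models under a feature-independence assumption. The sketch below uses that shortcut rather than the SHAP or LIME libraries; the weights, feature names, and baseline are hypothetical.

```python
def linear_contributions(weights, baseline, applicant):
    """Per-feature contribution to the score, relative to a baseline applicant."""
    return {
        name: weights[name] * (applicant[name] - baseline[name])
        for name in weights
    }


def top_reasons(contributions, k=2):
    """The k features that pushed the score down the most."""
    negative = [(name, c) for name, c in contributions.items() if c < 0]
    return sorted(negative, key=lambda item: item[1])[:k]


# Hypothetical linear credit model and applicant.
weights = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.5}
baseline = {"income_k": 50.0, "debt_ratio": 0.30, "late_payments": 1.0}
applicant = {"income_k": 40.0, "debt_ratio": 0.50, "late_payments": 3.0}

contributions = linear_contributions(weights, baseline, applicant)
for name, value in top_reasons(contributions):
    print(f"{name}: {value:+.2f}")
```

For this applicant the two strongest negative drivers are `late_payments` and `debt_ratio`, which is the kind of human-readable output a loan officer could review or contest. Non-linear models need a real attribution method, and that is where the latency cost appears.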

Logging and Traceability (Article 12)

High-risk systems must automatically record events ('logs') during their operation. This isn't just for debugging; it's for legal accountability. Your infrastructure must support:

- Recording the period of use.

- Logging the input data used for each inference.

- Tracking the outputs and any human interventions or overrides.
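
The three items above map naturally onto one structured log line per inference. The sketch below is a minimal illustration, not a compliance-grade logger: the field names and the `log_record` helper are hypothetical, and storing a hash of the input instead of the input itself is a design assumption that your legal team would need to confirm.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def log_record(model_version: str, payload: dict, output, override: Optional[dict] = None) -> str:
    """Serialize one inference event as a JSON line."""
    # Canonical JSON (sorted keys) so the same input always hashes the same way.
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
        "output": output,
        "human_override": override,  # None when no human intervened
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical event: the model rejects, a reviewer overrides.
line = log_record(
    "credit-v3",
    {"income_k": 40, "debt_ratio": 0.5},
    "reject",
    override={"by": "analyst-7", "new_output": "approve"},
)
print(line)
```

Writing these lines to append-only storage, with the first and last timestamps of a session marking the period of use, keeps the audit trail independent of the application database.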

General-Purpose AI (GPAI) and Systemic Risk

While the focus of the June 2026 deadline is on high-risk applications, startups building on top of GPAI models (like GPT-4, Claude 3.5, or Llama 3) must be aware of the Systemic Risk classification. If you are fine-tuning a model whose cumulative training compute exceeds 10^25 floating-point operations (FLOPs), you face additional obligations.
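
To get a feel for where 10^25 sits, a common back-of-the-envelope heuristic estimates training compute as roughly 6 × parameters × training tokens. That heuristic comes from the scaling-law literature, not from the Act, and the model size and token count below are hypothetical.

```python
SYSTEMIC_RISK_THRESHOLD = 1e25  # floating-point operations, as cited above


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough estimate: ~6 floating-point operations per parameter per token."""
    return 6.0 * n_params * n_tokens


# Hypothetical model: 70 billion parameters trained on 15 trillion tokens.
flops = estimate_training_flops(70e9, 15e12)
print(f"estimated compute: {flops:.2e} FLOPs")
print("above threshold:", flops > SYSTEMIC_RISK_THRESHOLD)
```

Under this estimate the hypothetical model lands at about 6.3 × 10^24, below the threshold. The estimate is only a sanity check; the figure that matters is the cumulative compute the provider reports.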

Even if you are only an API consumer, you remain responsible for 'downstream' compliance. You must ensure that the foundation model provider (e.g., OpenAI or Anthropic) has supplied the technical documentation you need to fulfill your own obligations as a 'provider' or 'deployer' under the Act. In 2026, we are seeing a 'Compliance-as-a-Service' market emerge, where companies like Hugging Face provide automated compliance checks for models hosted on their platform.

Key Takeaways for AI Developers

- Audit Your Use Case: Immediately determine if you fall under Annex III. High-risk status changes your entire development roadmap.

- Automate Your Documentation: Use tools like Sphinx or MkDocs integrated with your ML metadata store to keep your technical files current.

- Implement Robust Logging: Ensure every inference is traceable and that human overrides are flagged in your database.

- Focus on XAI: Prioritize explainability in your model selection. A slightly less accurate but explainable model is often better for compliance than a complex ensemble.

- Budget for Compliance: Compliance is an engineering cost. Expect to dedicate 15-20% of your development cycles to monitoring, auditing, and documentation.

What This Means: The Future of 'Regulated Innovation'

The June 2026 deadline is not the end of AI innovation in Europe; it is the beginning of its professionalization. The startups that thrive will be those that view compliance not as a hurdle, but as a competitive advantage. In a market saturated with unverified 'wrapper' apps, a CE-marked high-risk AI system signals a level of reliability and safety that enterprise clients, especially in the public sector and healthcare, will demand.

Looking forward, the influence of the EU AI Act will likely mirror the 'Brussels Effect' seen with GDPR. We expect the United States and Canada to adopt similar framework-based regulations by 2027. By mastering these technical requirements now, European startups are effectively preparing themselves for a global market where 'Safe AI' is the only AI allowed to operate at scale. The cliff is coming, but for those with the right technical infrastructure, it’s a launchpad, not a fall.