The Shift from Training to National Sovereignty

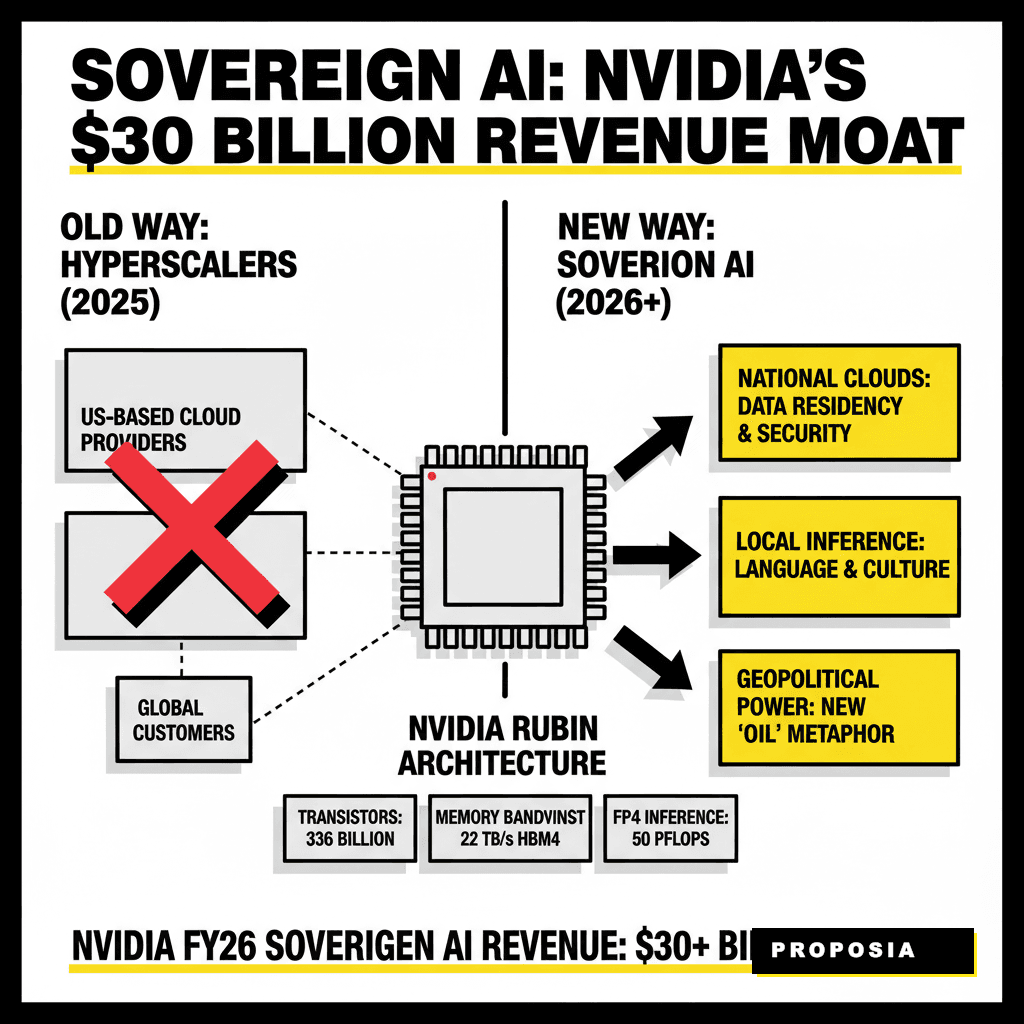

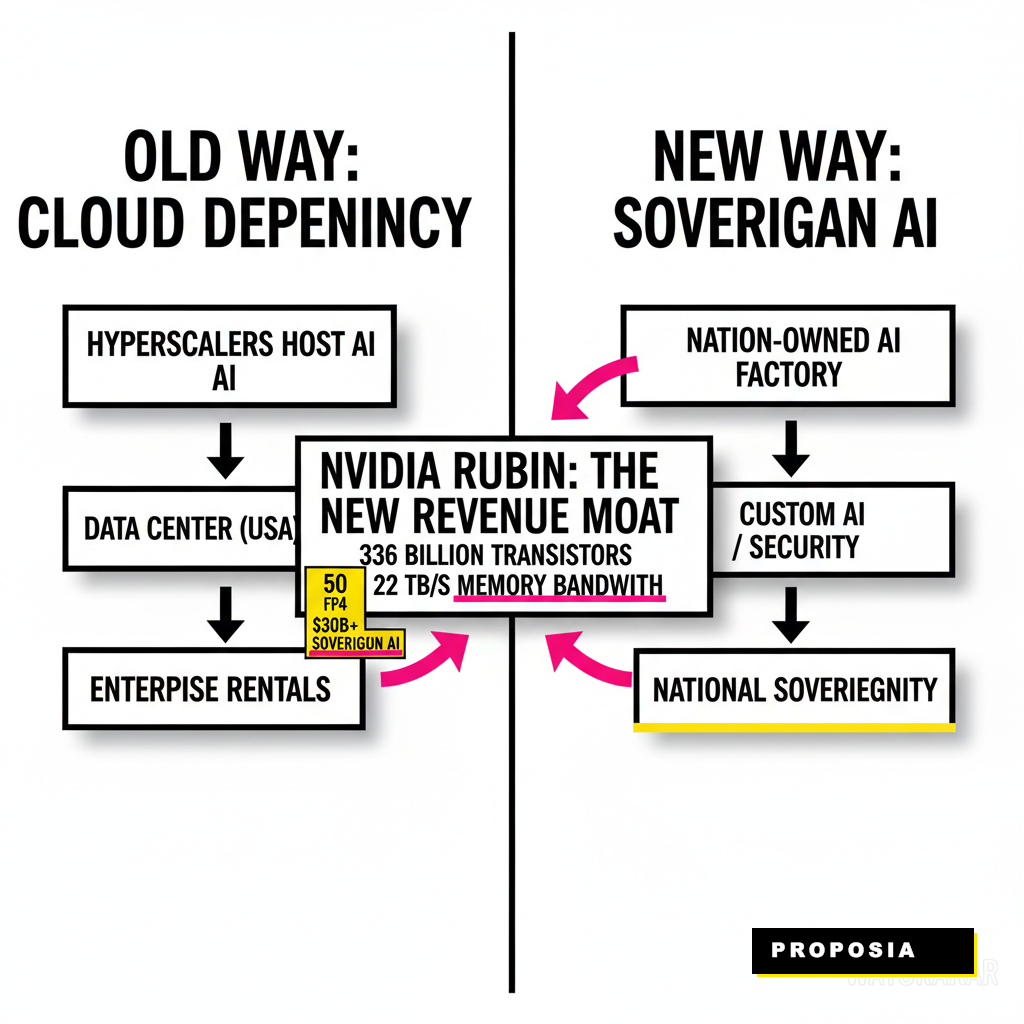

Jensen Huang didn't just announce a chip; he announced a geopolitical shift. While the Blackwell architecture dominated 2025, the arrival of the Rubin platform marks a transition from general-purpose AI to nationalized intelligence. Governments now view compute as the new oil. You're seeing a move away from reliance on a few US-based hyperscalers toward infrastructure owned and operated within domestic borders.

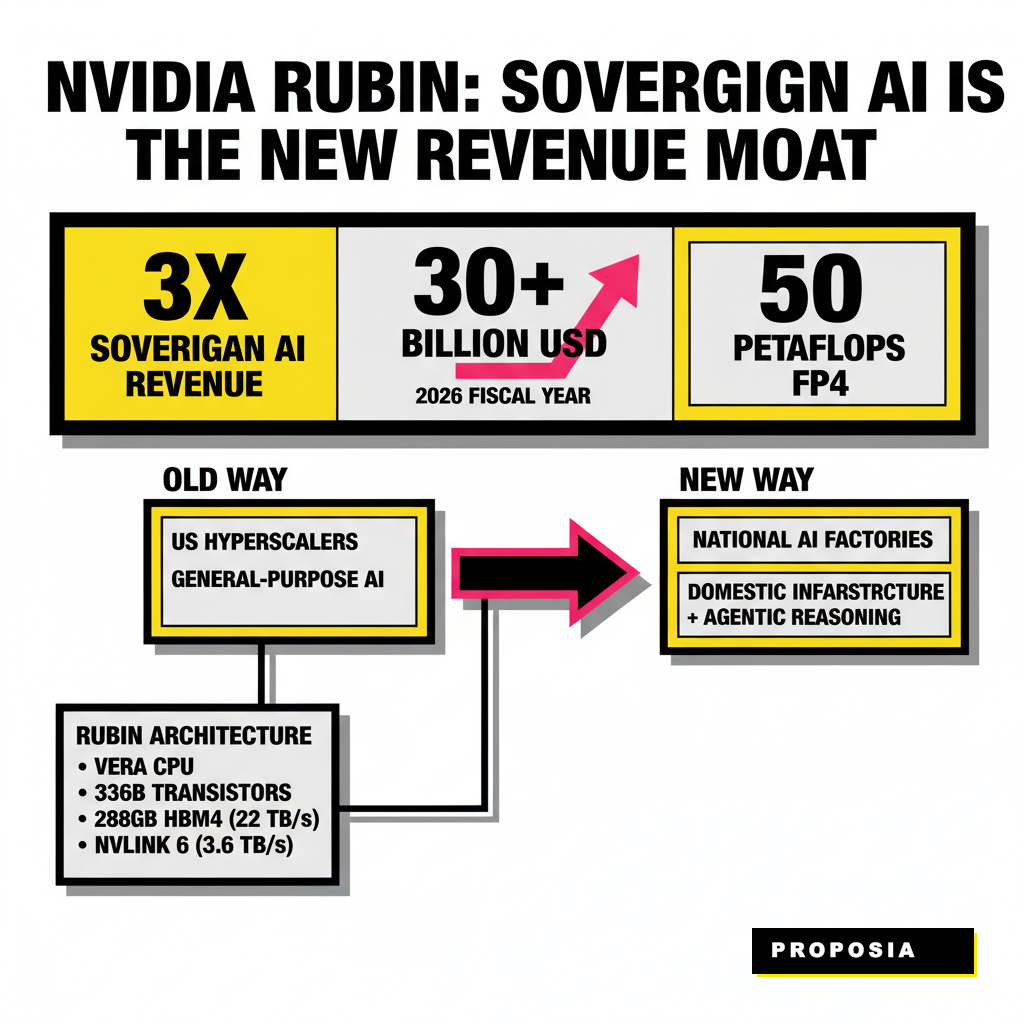

Nvidia reported that its sovereign AI revenue tripled in fiscal year 2026, exceeding $30 billion. This surge isn't a fluke. It represents a fundamental re-architecting of the global computing stack. Every nation now wants its own AI factory to protect its language, culture, and security data. Rubin is the engine designed specifically for this era of agentic reasoning and local inference.

Inside the Rubin Architecture: A Technical Moat

Rubin represents a massive leap in compute density and memory bandwidth. The platform pairs the new Vera CPU with the Rubin GPU, creating a tightly coupled system designed to remove traditional CPU-to-GPU bottlenecks. According to technical documentation released at GTC 2026, the Rubin GPU features 336 billion transistors, up from Blackwell's 208 billion. This density enables 50 petaFLOPS of FP4 inference performance per chip.

Memory is the real star here. Each GPU carries 288GB of HBM4 memory delivering 22 TB/s of bandwidth, nearly three times the 8 TB/s of the previous generation. Faster memory means models can reason through complex tasks without stalling while weights and context are streamed from memory. Between GPUs, NVLink 6 provides 3.6 TB/s of bidirectional bandwidth per GPU, allowing a rack of 72 GPUs to operate as a single, massive brain.
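To make the bandwidth claim concrete, here is a back-of-envelope sketch using the per-GPU figures quoted above. It assumes single-stream decoding is memory-bandwidth bound (every generated token reads all model weights from HBM once) and uses a hypothetical 200B-parameter model stored in FP4. The function names are illustrative, and the rack figure is a naive sum that ignores topology and protocol overhead.

```python
# Back-of-envelope arithmetic using the Rubin figures quoted in the text.
# Assumption: single-stream decoding is memory-bandwidth bound, so each
# generated token streams all model weights from HBM exactly once.

HBM4_BANDWIDTH_TB_S = 22.0  # per-GPU memory bandwidth (from the text)
HBM4_CAPACITY_GB = 288.0    # per-GPU HBM4 capacity (from the text)
NVLINK6_TB_S = 3.6          # per-GPU NVLink 6 bandwidth (from the text)
GPUS_PER_RACK = 72          # rack size (from the text)


def max_tokens_per_second(params_billion: float, bytes_per_param: float,
                          bandwidth_tb_s: float) -> float:
    """Upper bound on tokens/s when every token reads all weights once."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_s * 1e12 / weight_bytes


def full_memory_sweep_ms(capacity_gb: float, bandwidth_tb_s: float) -> float:
    """Time to read the entire HBM capacity once, in milliseconds."""
    return capacity_gb * 1e9 / (bandwidth_tb_s * 1e12) * 1e3


# Hypothetical 200B-parameter model in FP4 (0.5 bytes per parameter).
print(f"{max_tokens_per_second(200, 0.5, HBM4_BANDWIDTH_TB_S):.0f} tokens/s ceiling")
print(f"{full_memory_sweep_ms(HBM4_CAPACITY_GB, HBM4_BANDWIDTH_TB_S):.1f} ms to sweep 288 GB")
# Naive sum of per-GPU link bandwidth across the rack.
print(f"{NVLINK6_TB_S * GPUS_PER_RACK:.1f} TB/s aggregate NVLink per rack")
```

Under those assumptions, the sketch prints a ceiling of about 220 tokens per second for one stream, about 13.1 ms to read all 288GB once, and about 259 TB/s of summed NVLink bandwidth per 72-GPU rack. Real throughput also depends on batching, KV-cache traffic, and software efficiency.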

| Specification | Blackwell (2025) | Rubin (2026) |
|---|---|---|
| Transistor Count | 208 Billion | 336 Billion |
| Memory Type | HBM3e | HBM4 |
| Memory Bandwidth | 8 TB/s | 22 TB/s |
| FP4 Inference | 20 PFLOPS | 50 PFLOPS |
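The table's generational gains reduce to simple ratios. The sketch below divides each Rubin figure by its Blackwell counterpart and also computes a roofline-style "ridge point" (peak FP4 FLOPs per byte of memory traffic), a standard way to judge whether a workload is compute-bound or memory-bound. It assumes the two FP4 figures are comparable peak numbers; the helper names are hypothetical.

```python
from typing import Dict, Tuple

# Blackwell and Rubin figures copied from the comparison table.
SPECS: Dict[str, Tuple[float, float]] = {
    "Transistor count (billions)": (208, 336),
    "Memory bandwidth (TB/s)": (8, 22),
    "FP4 inference (PFLOPS)": (20, 50),
}


def generational_ratios(specs: Dict[str, Tuple[float, float]]) -> Dict[str, float]:
    """Return Rubin / Blackwell for each row, rounded to two decimals."""
    return {name: round(rubin / blackwell, 2)
            for name, (blackwell, rubin) in specs.items()}


def ridge_point(fp4_pflops: float, bandwidth_tb_s: float) -> float:
    """Roofline ridge point: peak FLOPs per byte of memory traffic.

    PFLOPS * 1e15 / (TB/s * 1e12) simplifies to PFLOPS * 1e3 / (TB/s).
    """
    return fp4_pflops * 1e3 / bandwidth_tb_s


for name, ratio in generational_ratios(SPECS).items():
    print(f"{name}: {ratio}x")
print(f"Blackwell ridge point: {ridge_point(20, 8):.0f} FLOPs/byte")
print(f"Rubin ridge point: {ridge_point(50, 22):.0f} FLOPs/byte")
```

Because bandwidth grew faster (2.75x) than FP4 compute (2.5x), the ridge point falls from 2,500 to about 2,273 FLOPs per byte, so a workload needs slightly less arithmetic per byte fetched to keep Rubin's compute units busy. That is consistent with the claim that memory is the headline change.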

Why Sovereign AI Contracts are Stickier than Cloud Sales

Enterprise sales are often subject to quarterly budget whims. In contrast, sovereign AI contracts are multi-year infrastructure commitments backed by national treasuries. These projects resemble the construction of power grids or highway systems. Once a nation builds its domestic AI cloud on the CUDA ecosystem, the switching costs become prohibitive. This creates a revenue moat that protects Nvidia from the cyclical nature of the tech sector.

Nations are not just buying chips; they are buying autonomy. Export controls and supply chain uncertainties have made dependence on foreign clouds look risky for many world leaders. By owning the silicon and the data centers locally, governments ensure their digital future remains under their own control. Jensen Huang emphasized this at Davos 2026, stating that AI has become as essential to national development as electricity.

Global Momentum: From London to Riyadh

The race for sovereign compute is already visible on every continent. The United Kingdom has launched an £18 billion infrastructure program, which includes the Stargate UK project aimed at deploying 60,000 GPUs by the end of 2026. This initiative focuses on building domestic models that understand the nuances of British law and public service. Similarly, Saudi Arabia's HUMAIN project is part of a $100 billion investment to turn the kingdom into a global AI hub.

Japan and France are following a similar playbook. Japan is investing billions to build domestic compute clusters that support its robotics and manufacturing sectors. France is focusing on cultural sovereignty, ensuring that its large language models are trained on French data and values. These nations are not just customers; they are building the foundation for their next century of economic growth.

Strategic Takeaways for the C-Suite

Business leaders must recognize that the AI landscape is fragmenting. The era of a single, global AI model is ending. Companies operating in multiple regions will soon need to navigate a patchwork of sovereign clouds, each with its own data residency and compliance rules. Preparing for this reality requires a flexible infrastructure strategy that can span different national environments.
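One way to prepare for a patchwork of sovereign clouds is to make data residency an explicit routing rule rather than an afterthought. The sketch below is purely illustrative: the region names, cloud names, and export policies are hypothetical placeholders, not real services or legal rules.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class SovereignCloud:
    name: str
    jurisdiction: str
    allows_export: bool  # may data originating here be processed elsewhere?


class ResidencyError(Exception):
    """Raised when a request would violate a (hypothetical) residency rule."""


# Hypothetical registry: placeholder regions and policies, not real services.
REGISTRY: Dict[str, SovereignCloud] = {
    "region-a": SovereignCloud("region-a-cloud", "region-a", allows_export=False),
    "region-b": SovereignCloud("region-b-cloud", "region-b", allows_export=False),
    "region-c": SovereignCloud("region-c-cloud", "region-c", allows_export=True),
}


def route(data_origin: str, preferred: Optional[str] = None) -> SovereignCloud:
    """Pick the cloud allowed to process data from `data_origin`.

    Defaults to the home jurisdiction; routes elsewhere only when the home
    policy allows export and the preferred target is registered.
    """
    home = REGISTRY.get(data_origin)
    if home is None:
        raise ResidencyError(f"no sovereign cloud registered for {data_origin!r}")
    if preferred is None or preferred == data_origin:
        return home
    if not home.allows_export:
        raise ResidencyError(f"data from {data_origin!r} must stay in-jurisdiction")
    target = REGISTRY.get(preferred)
    if target is None:
        raise ResidencyError(f"no sovereign cloud registered for {preferred!r}")
    return target


print(route("region-a").name)              # stays in its home jurisdiction
print(route("region-c", "region-b").name)  # export permitted by region-c policy
try:
    route("region-a", "region-b")          # export forbidden by region-a policy
except ResidencyError as err:
    print(f"blocked: {err}")
```

Keeping the policy in one table means adding a new jurisdiction is a data change rather than a code change, which is the kind of flexibility a multi-region strategy needs.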

Understanding these shifts is critical for avoiding costly mistakes. As we have covered previously, preparing well for the 2026 AI audit can save millions in compliance fees. Companies must also invest in RAG 2.0 and knowledge graphs so their proprietary data remains useful across different sovereign platforms. The future belongs to those who can integrate their intelligence into these local factories without losing their global competitive edge.

Nvidia's Rubin architecture is the physical manifestation of this new world order. By providing the hardware that makes sovereign AI economically viable, the company has secured its position for years to come. For businesses, the message is clear: the moat is no longer just about who has the best model, but who owns the ground it runs on.

Sourcing Log

- Statistic: Nvidia reported FY2026 revenue of $215.9 billion, a 65% increase year-over-year. - AlphaStreet

- Statistic: Sovereign AI revenue tripled in FY2026, exceeding $30 billion. - Forbes

- Fact: Nvidia Rubin GPU features 336 billion transistors and 288GB of HBM4 memory. - Barrack AI

- Fact: The UK government is pursuing an £18 billion infrastructure program, including the Stargate UK project. - Business 2.0 News

- Quote: "AI is no longer a single breakthrough or application – it is essential infrastructure." – Jensen Huang, March 2026. - Colorado AI News