The Death of the Chatbot, the Birth of the Agent

As we navigate the second quarter of 2026, the industry has reached a definitive consensus: the era of the monolithic, single-purpose chatbot is over. For developers and enterprise architects, the focus has shifted from simple retrieval-augmented generation (RAG) to the complex orchestration of Multi-Agent Systems (MAS). In the Microsoft ecosystem, this evolution is centered around the rapid maturation of Copilot Studio and its integration with the broader Power Platform.

We are no longer building interfaces that simply 'talk' to users; we are building ecosystems of autonomous workers that 'talk' to each other, execute logic, and manage state across fragmented enterprise data silos. This article explores the technical nuances of orchestrating these workflows, the architectural patterns that drive efficiency, and the governance frameworks required to keep autonomous agents from drifting off-course.

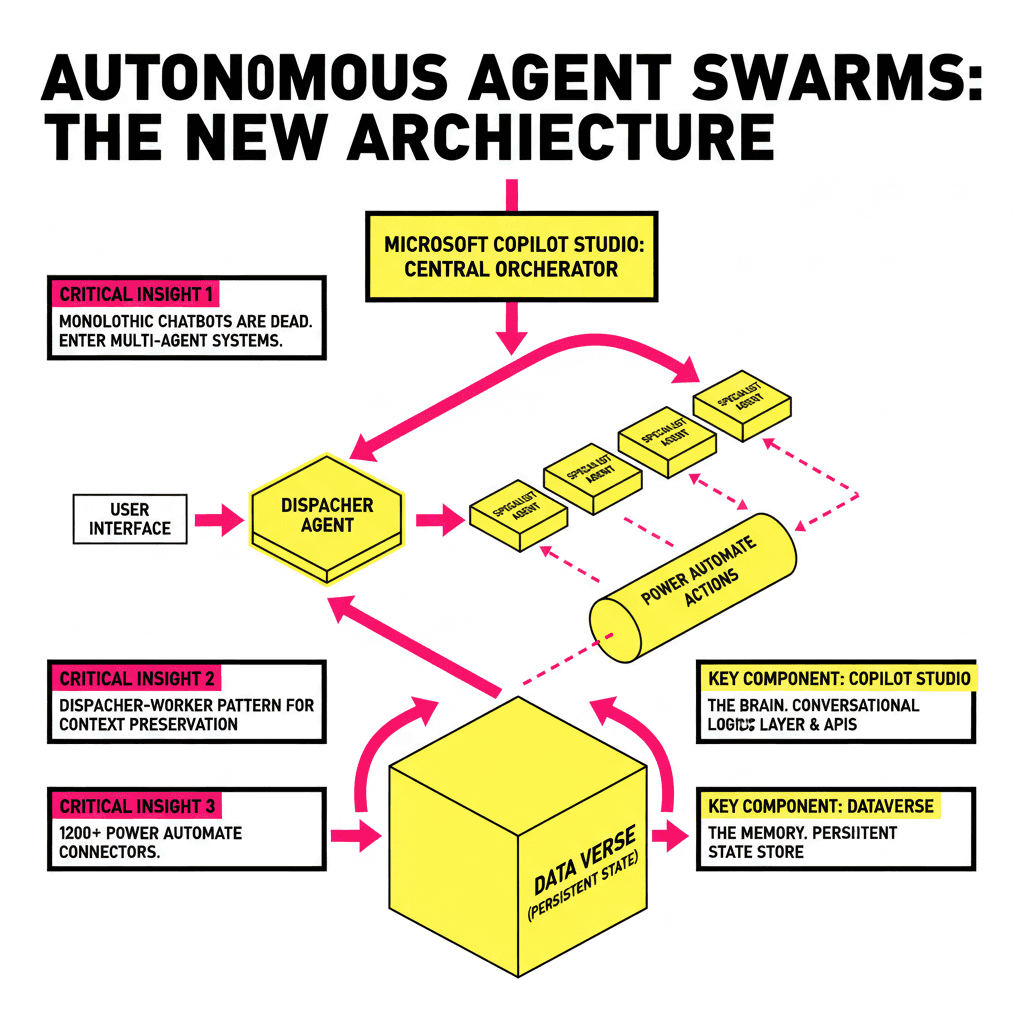

The Architecture of Multi-Agent Orchestration

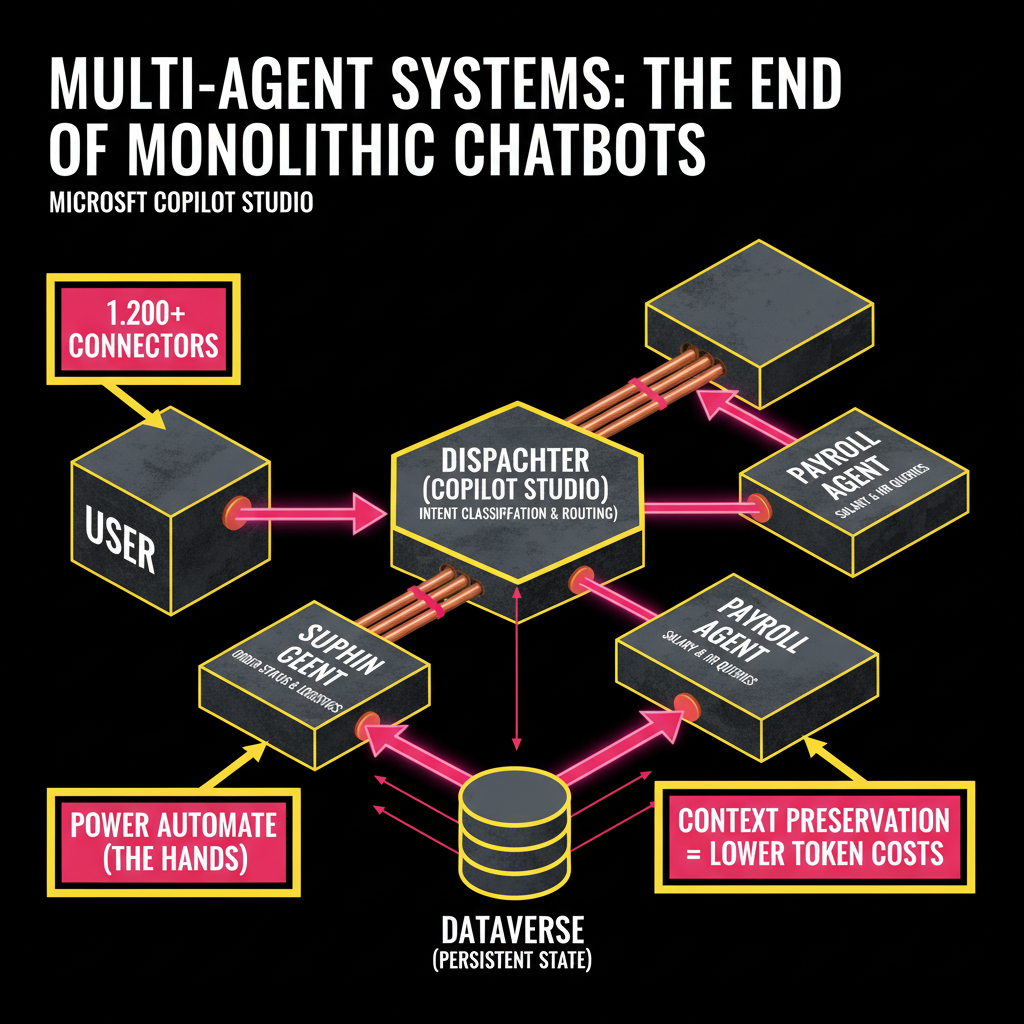

In a multi-agent setup within Copilot Studio, the central design pattern is Dispatcher-Worker. Instead of a single agent attempting to hold the context for an entire HR, Finance, and IT support manual, we deploy a 'Dispatcher' agent whose sole responsibility is intent classification and routing to 'Specialist' agents.

These specialists are built as independent Copilots or as specialized topics with dedicated 'Actions' powered by Power Automate flows. The benefit of this modularity is context isolation: by separating the 'Supply Chain Agent' from the 'Payroll Agent,' we reduce the noise in each agent's context window, which tends to improve accuracy and lower token costs.
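To make the routing idea concrete, here is a minimal Python sketch of a dispatcher. It is not Copilot Studio code: the specialist functions, the keyword table, and the names `classify_intent` and `dispatch` are all hypothetical, and keyword matching stands in for the LLM-based intent classification a real Dispatcher agent would use.

```python
from typing import Callable, Dict, Tuple

# Hypothetical specialists. In Copilot Studio these would be separate agents
# or topics, not Python functions.
def payroll_agent(message: str) -> str:
    return f"[payroll] handling: {message}"

def supply_chain_agent(message: str) -> str:
    return f"[supply_chain] handling: {message}"

def fallback_agent(message: str) -> str:
    return "[dispatcher] could not classify the request; asking the user to clarify"

# Assumption: a keyword table replaces real intent classification.
INTENT_KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "payroll": ("salary", "payslip", "payroll"),
    "supply_chain": ("shipment", "inventory", "supplier"),
}

SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "payroll": payroll_agent,
    "supply_chain": supply_chain_agent,
}

def classify_intent(message: str) -> str:
    """Return the first intent whose keywords appear in the message, else 'unknown'."""
    text = message.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(word in text for word in keywords):
            return intent
    return "unknown"

def dispatch(message: str) -> str:
    """Route the message to exactly one specialist; the others never see it."""
    handler = SPECIALISTS.get(classify_intent(message), fallback_agent)
    return handler(message)
```

Because each specialist receives only the messages routed to it, its prompt never has to carry the other domains' instructions, which is the isolation benefit described above.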

Key Components in the 2026 Stack

- Copilot Studio (The Brain): Handles the conversational logic, agent-to-agent handoffs, and natural language understanding (NLU).

- Power Automate (The Hands): Acts as the 'Action' layer, connecting agents to 1,200+ pre-built connectors or custom APIs.

- Dataverse (The Memory): Serves as the persistent state store. Unlike the ephemeral memory of an LLM session, Dataverse allows agents to 'remember' a user's preference across different sessions and agents.

- Azure AI Agent Service: For developers needing deeper programmatic control, this service allows the hosting of custom Python-based agents (using frameworks like AutoGen) that can be called directly by Copilot Studio.

Technical Deep Dive: Implementing 'State' and 'Hand-off'

One of the most complex aspects of multi-agent workflows is the transfer of Variable State. When Agent A finishes a task and hands it to Agent B, the user shouldn't have to repeat themselves. In Copilot Studio, we achieve this using Global Variables and Output Parameters.

For example, if an 'Insurance Claims Agent' identifies a policy number, that variable must be passed as an input parameter to the 'Adjuster Agent.' In 2026, we utilize Semantic Kernel integration to automate this. Semantic Kernel allows us to define 'Planners' that automatically decide which tools and agents are necessary to fulfill a complex request, effectively acting as an 'Auto-Router' for variables.
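The hand-off can be illustrated with a small Python sketch. This is an analogy rather than Copilot Studio or Semantic Kernel code: `ConversationState` plays the role of global variables, the dictionary returned by `claims_agent` plays the role of output parameters, and the `POL-` prefix and all function names are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional

@dataclass
class ConversationState:
    """Shared state that survives a hand-off (a stand-in for global variables)."""
    variables: Dict[str, Any] = field(default_factory=dict)

def claims_agent(message: str) -> Dict[str, Optional[str]]:
    """Extract a policy number and return it as an output parameter."""
    # Toy extraction: take the first token that starts with the assumed 'POL-' prefix.
    policy = next(
        (tok.strip(".,") for tok in message.split() if tok.startswith("POL-")),
        None,
    )
    return {"policy_number": policy}

def adjuster_agent(policy_number: str) -> str:
    """Consume the policy number as an input parameter instead of re-asking the user."""
    return f"Adjuster assigned to policy {policy_number}"

def hand_off(message: str, state: ConversationState) -> str:
    """Run the claims agent, store its outputs, then call the adjuster agent."""
    outputs = claims_agent(message)
    if outputs["policy_number"] is None:
        return "Please provide your policy number."
    state.variables.update(outputs)
    return adjuster_agent(state.variables["policy_number"])
```

Calling `hand_off("My claim is for POL-12345.", ConversationState())` passes the extracted value straight to the second agent, so the user is asked for the policy number only when the first agent fails to find one.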

The Role of Dataverse in Long-term Memory

Traditional RAG is great for static knowledge, but multi-agent systems require Long-term Memory (LTM). By using Dataverse as a vector store (leveraging its native vector search capabilities released in late 2024), agents can store summaries of previous interactions. Before a session begins, the Dispatcher Agent runs a vector search over the summaries stored for that user's ID to retrieve a 'Context Summary,' which is then injected into the System Prompt of the worker agents.
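The retrieval step can be sketched in plain Python. This is a simplified illustration, not the Dataverse API: the in-memory list stands in for the vector store, the embeddings are hand-written toy vectors, and `MemoryRecord`, `retrieve_context`, and `build_system_prompt` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List

@dataclass
class MemoryRecord:
    user_id: str
    summary: str
    embedding: List[float]  # assumption: produced earlier by some embedding model

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve_context(store: List[MemoryRecord], user_id: str,
                     query_embedding: List[float], top_k: int = 2) -> List[str]:
    """Filter by user first, then rank that user's summaries by similarity."""
    candidates = [r for r in store if r.user_id == user_id]
    ranked = sorted(candidates,
                    key=lambda r: cosine(r.embedding, query_embedding),
                    reverse=True)
    return [r.summary for r in ranked[:top_k]]

def build_system_prompt(base_prompt: str, summaries: List[str]) -> str:
    """Append retrieved summaries to the worker agent's system prompt."""
    if not summaries:
        return base_prompt
    context = "\n".join(f"- {s}" for s in summaries)
    return f"{base_prompt}\n\nContext summary from earlier sessions:\n{context}"
```

Filtering on the user ID before ranking keeps one user's history from leaking into another user's prompt, which matters more than ranking quality in an enterprise setting.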

| Feature | Single-Agent (Legacy) | Multi-Agent (Modern) |
|---|---|---|
| Domain Knowledge | Broad but shallow; prone to confusion. | Deep and specialized; segmented by topic. |
| Scalability | Hard to update without retraining. | Modular; add/remove agents without downtime. |
| Error Handling | Fails globally on complex tasks. | Localized failure; fallback to Dispatcher. |

Governance and the 'Human-in-the-loop' (HITL)

As we grant agents more autonomy—such as the ability to trigger payments or modify database records—governance becomes the primary bottleneck. Microsoft has addressed this with Copilot Guardrails. Developers can now implement 'Approval Gates' within Power Automate flows that are triggered by agent actions.

"Autonomy without accountability is just automated chaos. In the Power Platform, the 'Human-in-the-loop' is no longer a safety net; it is a core architectural component of the multi-agent workflow." — Elena Rodriguez, Lead AI Architect at Proposia.

When an agent determines it needs to execute a high-risk action (e.g., a refund over $500), it saves its state and pauses, sends a notification via Teams to a human supervisor, and resumes only once an 'Approve' signal is received. This asynchronous wait-state is handled natively by Power Automate's approval engine, so the agent doesn't consume compute resources while waiting.
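The gate logic itself is simple enough to sketch in Python. This is an illustration of the pattern only, not Power Automate's approval engine: the in-memory `PENDING_REQUESTS` dictionary stands in for persisted state, the Teams notification is omitted, and every name here is hypothetical. The 500 threshold mirrors the example above.

```python
import uuid
from dataclasses import dataclass
from enum import Enum
from typing import Dict

APPROVAL_THRESHOLD = 500.00  # assumption: policy value taken from the example above

class Status(Enum):
    EXECUTED = "executed"
    PENDING = "pending_approval"
    REJECTED = "rejected"

@dataclass
class RefundRequest:
    request_id: str
    amount: float
    status: Status

# Stand-in for durable storage; a real flow would persist this outside the process.
PENDING_REQUESTS: Dict[str, RefundRequest] = {}

def request_refund(amount: float) -> RefundRequest:
    """Execute low-risk refunds immediately; park high-risk ones for a human."""
    req = RefundRequest(str(uuid.uuid4()), amount, Status.EXECUTED)
    if amount > APPROVAL_THRESHOLD:
        req.status = Status.PENDING
        PENDING_REQUESTS[req.request_id] = req  # nothing runs while this waits
    return req

def resolve(request_id: str, approved: bool) -> RefundRequest:
    """Called when the supervisor responds; resumes or cancels the parked action."""
    req = PENDING_REQUESTS.pop(request_id)
    req.status = Status.EXECUTED if approved else Status.REJECTED
    return req
```

The key design choice is that the waiting request is plain data rather than a blocked process, so a supervisor can respond hours later without anything being held open in the meantime.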

The Developer's Path Forward

Building in this new paradigm requires a shift in mindset. Developers must move away from writing procedural code and toward Declarative Orchestration. Your job is no longer to tell the system *how* to do something, but to define the *boundaries* and *tools* available to the agents.

Key skills for the 2026 developer include:

- Prompt Engineering for Inter-Agent Communication: Learning how to format outputs from one agent so that the next agent can parse them reliably.

- Vector Database Management: Understanding how to index and retrieve context from Dataverse or Azure AI Search.

- Logic App / Power Automate Optimization: Reducing latency in the 'Action' layer to ensure the agent feels responsive.
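As an illustration of the first skill, one common approach is to have each agent emit JSON against an agreed contract and to validate it before the next agent consumes it. The sketch below assumes a made-up contract with three fields (`intent`, `policy_number`, `confidence`); neither the field names nor `parse_agent_message` come from any Microsoft API.

```python
import json
from typing import Any, Dict

# Hypothetical hand-off contract: field name -> required Python type.
REQUIRED_FIELDS = {"intent": str, "policy_number": str, "confidence": float}

def parse_agent_message(raw: str) -> Dict[str, Any]:
    """Parse and validate a JSON hand-off message; raise ValueError on any mismatch."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        if not isinstance(data[name], expected):
            raise ValueError(f"field {name} should be {expected.__name__}")
    return data
```

Rejecting a malformed message at the boundary lets the Dispatcher retry or fall back, rather than letting a downstream agent act on a half-parsed request.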

The transition from chatbots to multi-agent workflows represents the most significant leap in enterprise productivity since the move to the cloud. By leveraging Copilot Studio as the orchestrator, organizations can finally realize the promise of AI: not just as a tool that answers questions, but as a digital workforce that gets things done.